The Senate Drew a Line in the Sand. The House Erased It.

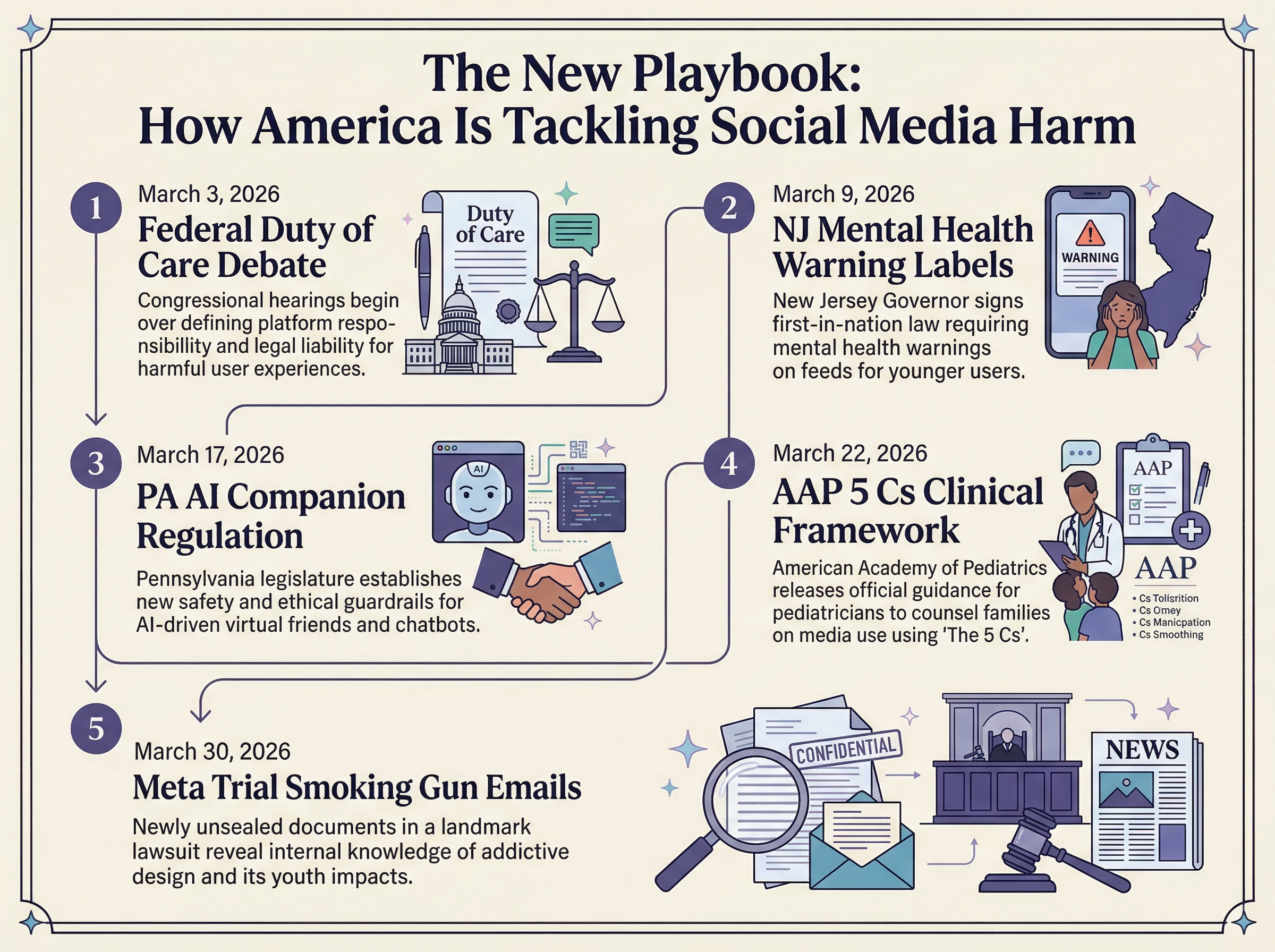

Here's the core question that's been haunting Congress since the Kids Online Safety Act first gained momentum: should social media companies be legally responsible for the foreseeable harm their products cause to young people? Senator Marsha Blackburn thinks yes. The House thinks that sounds like a lawsuit factory.

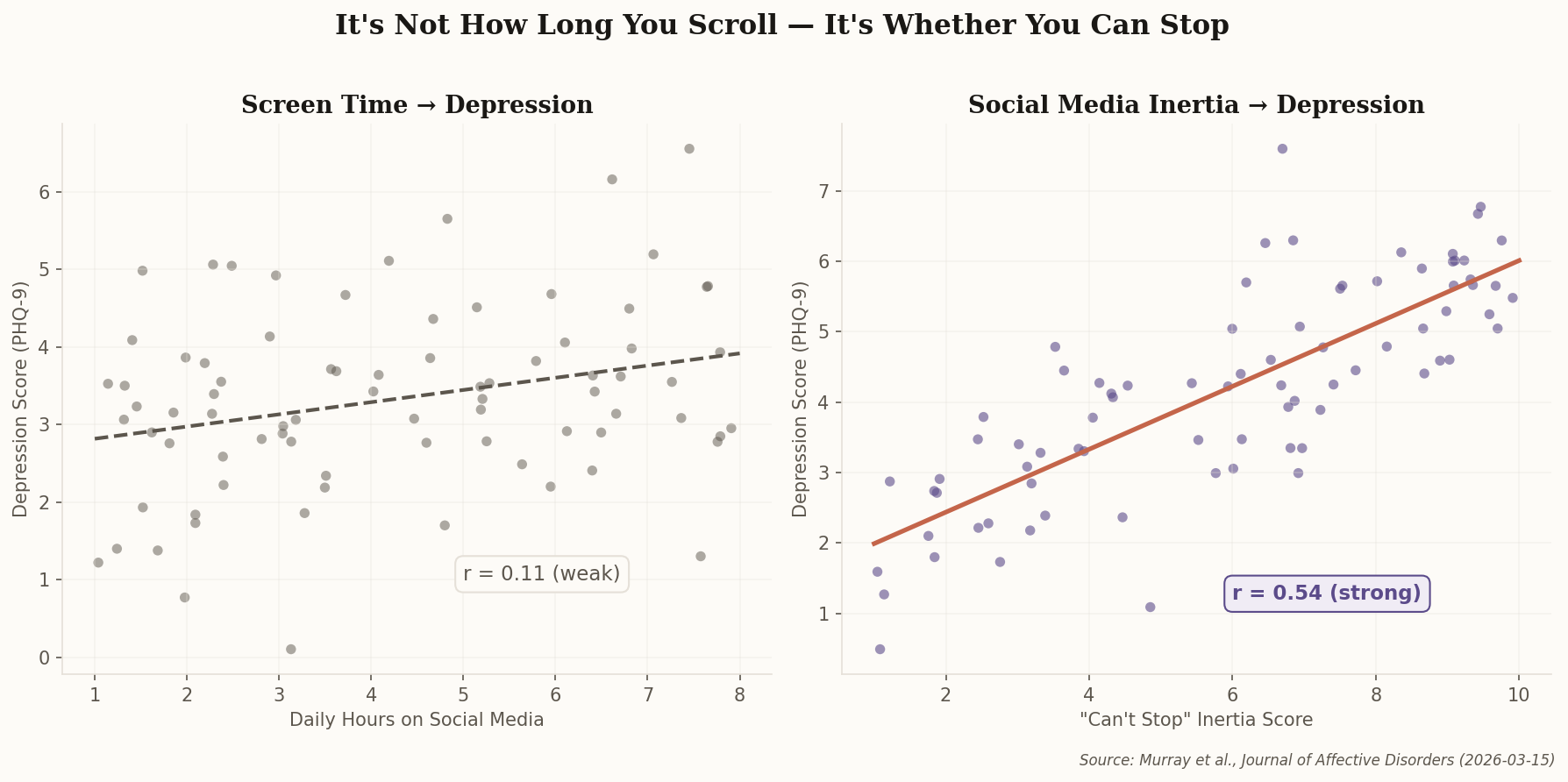

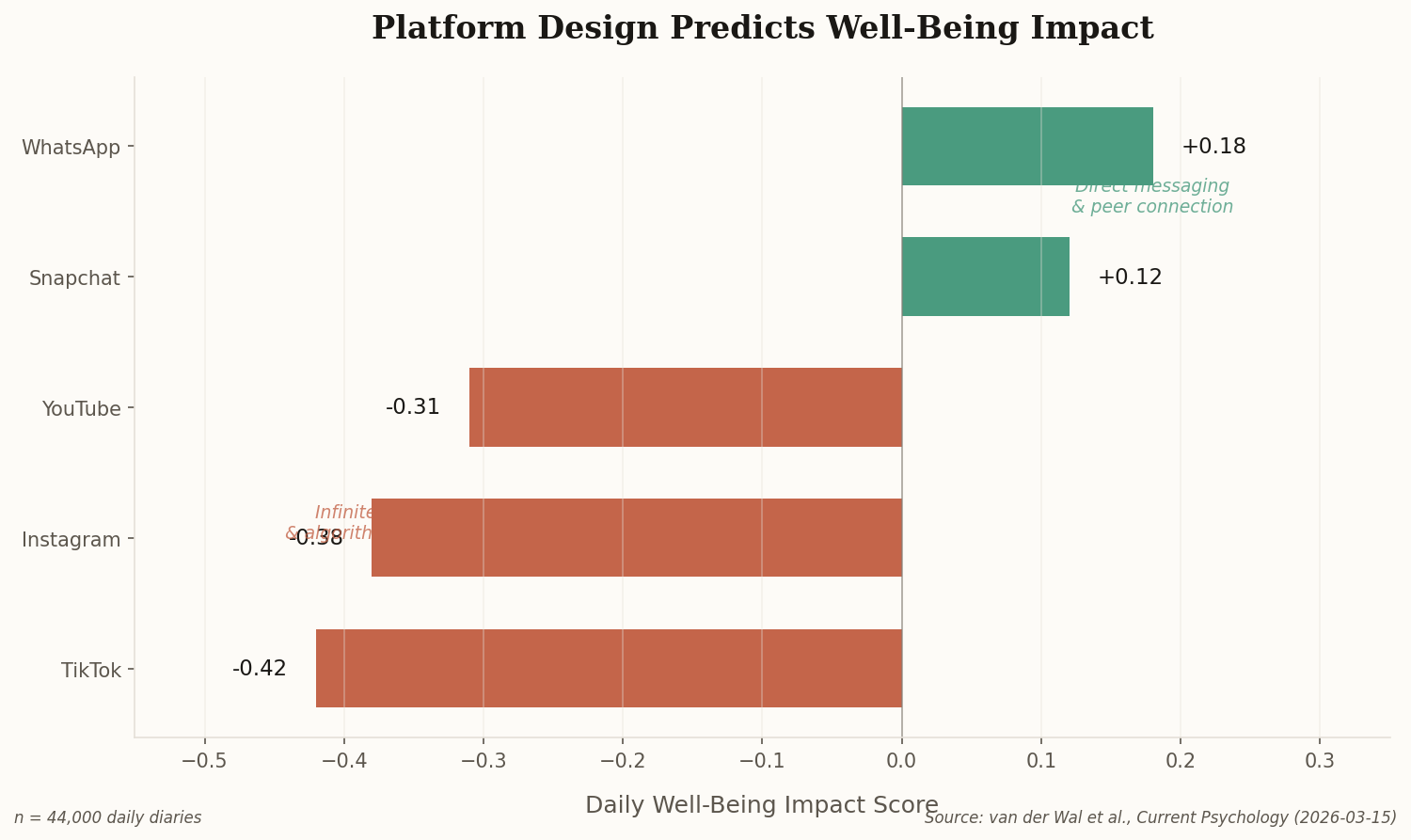

The Senate version of KOSA includes a "duty of care" provision—a legal obligation that treats platform design decisions the way product liability law treats a car manufacturer's braking system. If your algorithmic feed is designed to maximize engagement and you know it correlates with depression in minors, you're on the hook. The House stripped this language entirely, replacing it with what Blackburn publicly called a "diluted" framework that amounts to voluntary best practices.

"Any kids' online safety package sent to President Trump's desk must include a duty of care. We will not support a bill that guts the core accountability mechanism for Big Tech." — Sen. Marsha Blackburn

This isn't a procedural disagreement; it's a philosophical one. The Senate wants social media regulated like a product. The House wants it regulated like a service. That distinction determines whether Meta, TikTok, and Snap face billions in liability or a sternly worded compliance checklist. Watch this deadlock: it's the single biggest variable in whether federal tech regulation actually has teeth in 2026.