OpenAI Blinks: The $8 Admission That Open Source Won

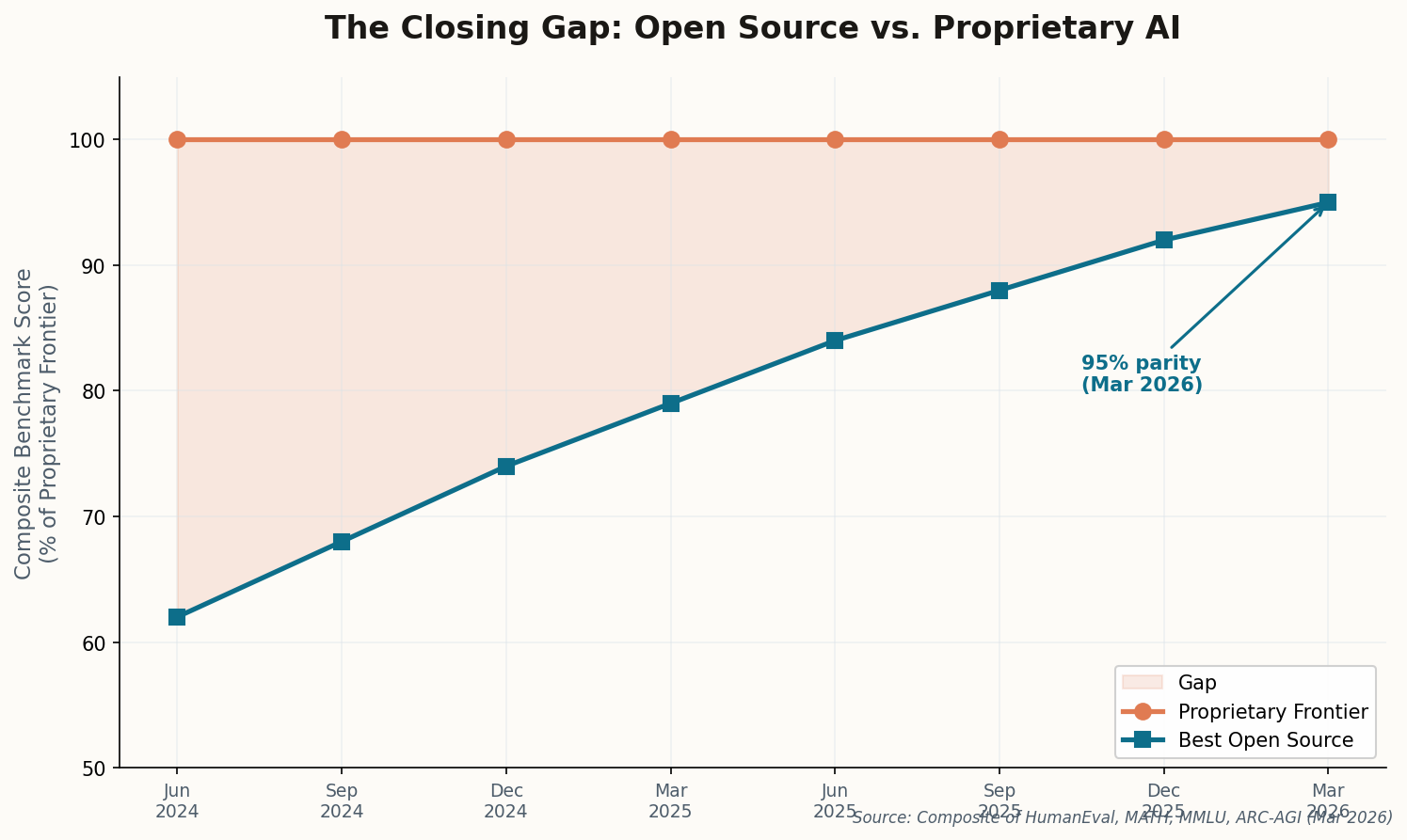

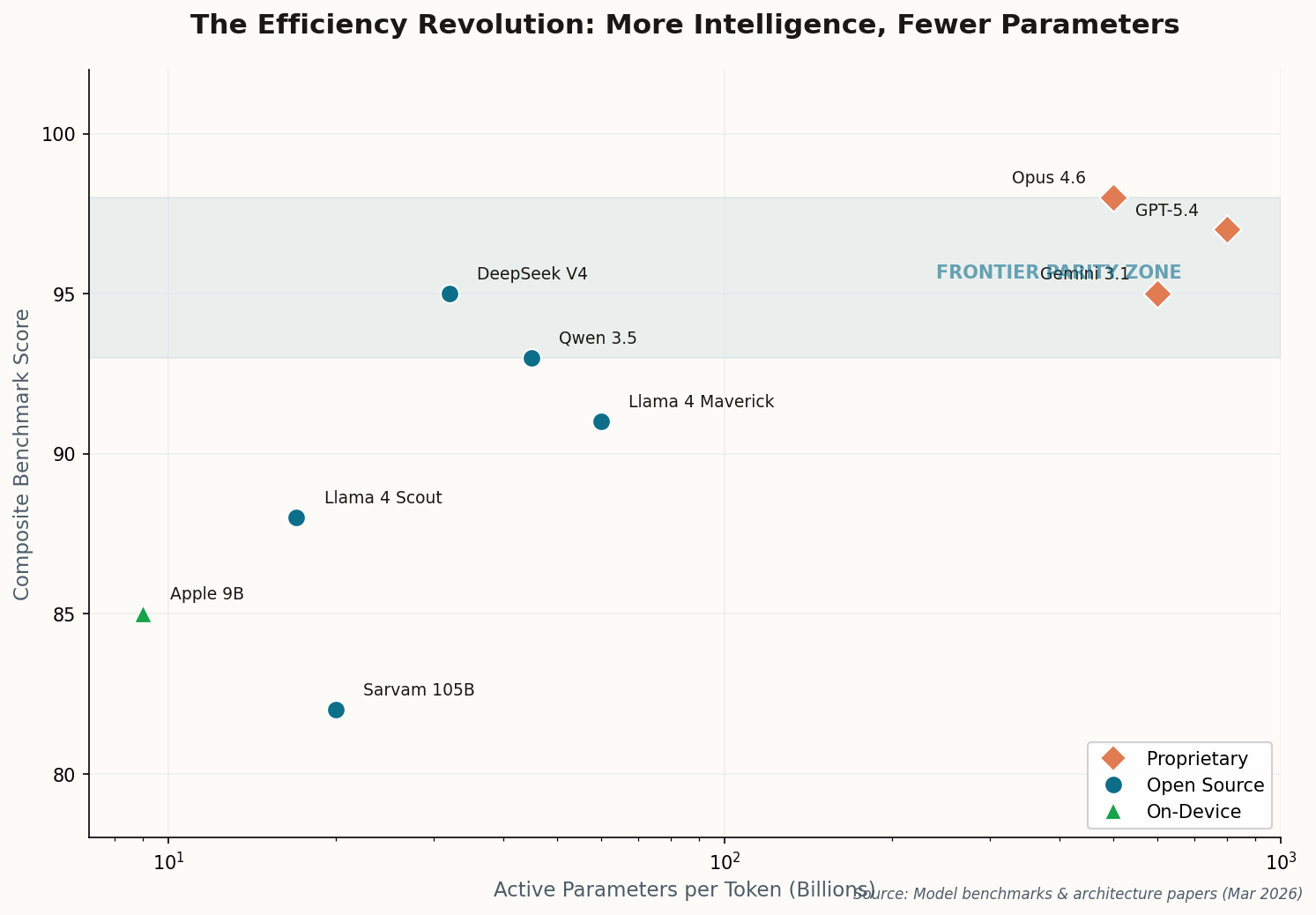

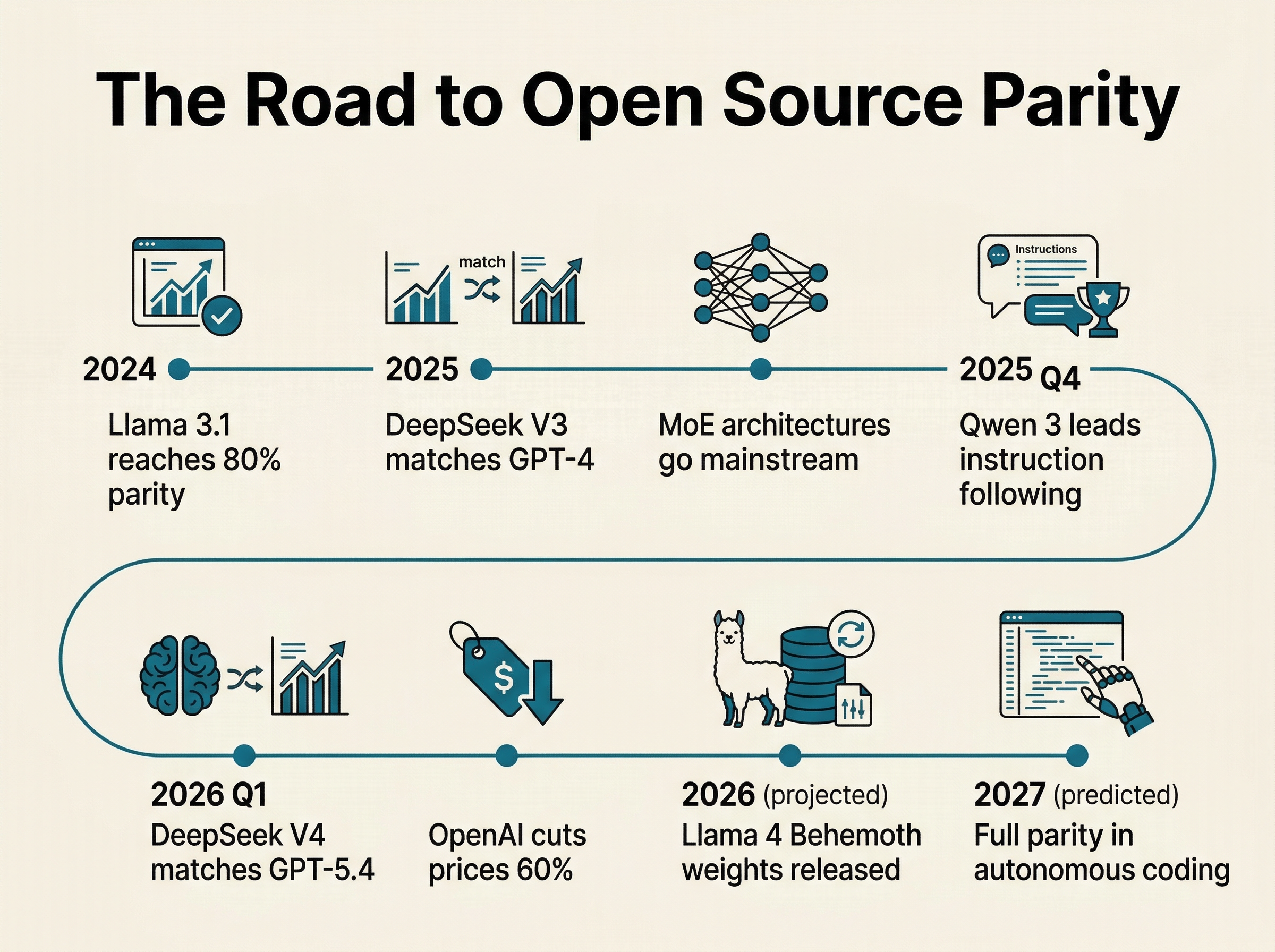

When OpenAI quietly launched an $8/month "Lite" tier offering GPT-5.2 capabilities — roughly half the price of ChatGPT Plus — nobody in the industry pretended this was about generosity. This is a defensive move, pure and simple. The company that once held an unchallenged monopoly on frontier intelligence is now competing on price with models you can download for free.

The Lite tier includes limited access to GPT-5.4's "Thinking" mode, positioning it as a gateway drug to the full subscription. But the subtext screams louder than the marketing: developers are migrating to DeepSeek and Llama in sufficient numbers that OpenAI's growth metrics are under threat. When your product was once priceless and now has a budget tier, the market has spoken.

The real question isn't whether $8 is competitive — it's whether any price is competitive against free. OpenAI is betting that reliability, brand trust, and integrated tooling justify the premium. They're probably right, for now. But "for now" has a short half-life in this market.