If the Math Won't Compile, Grok Won't Say It

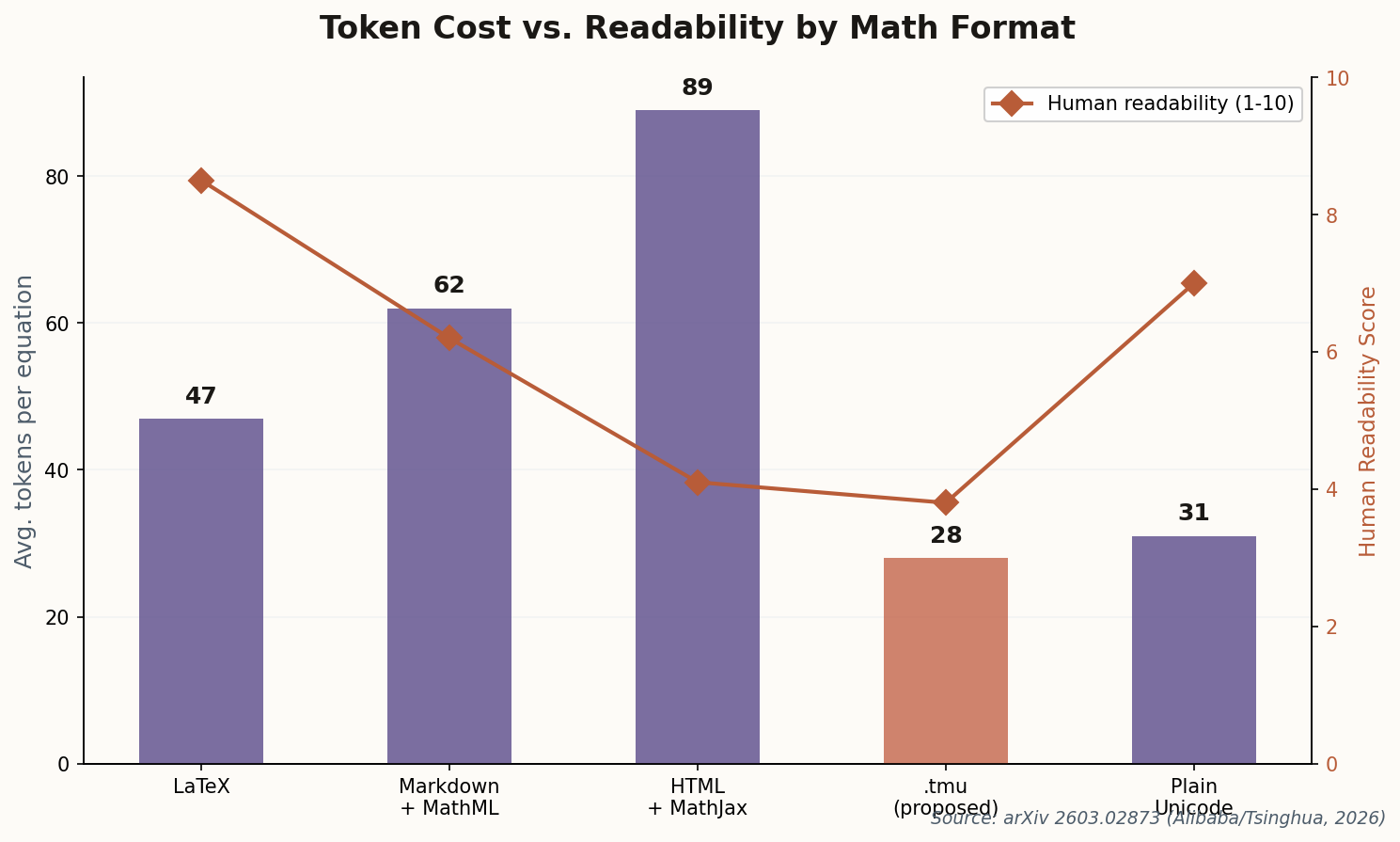

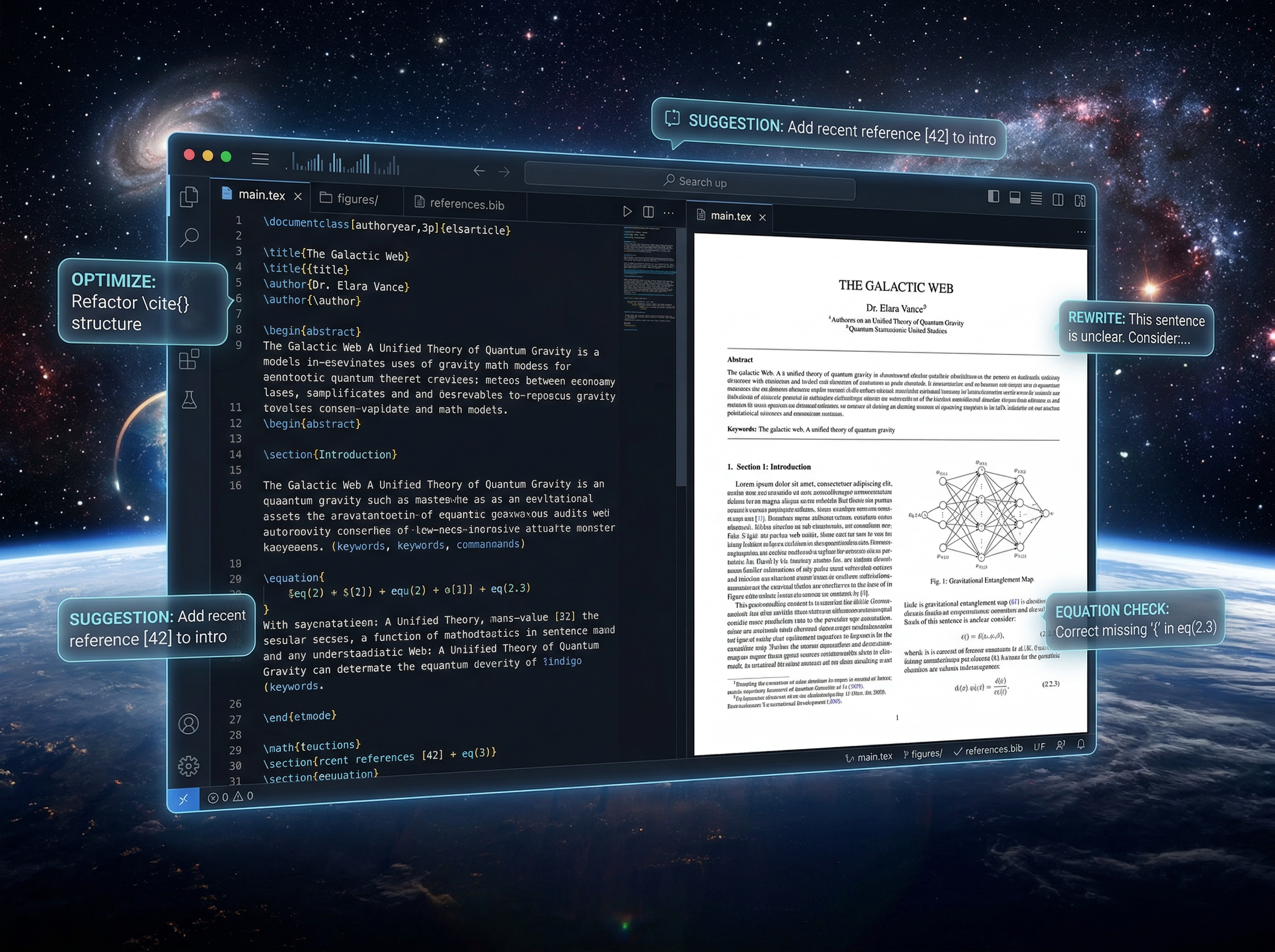

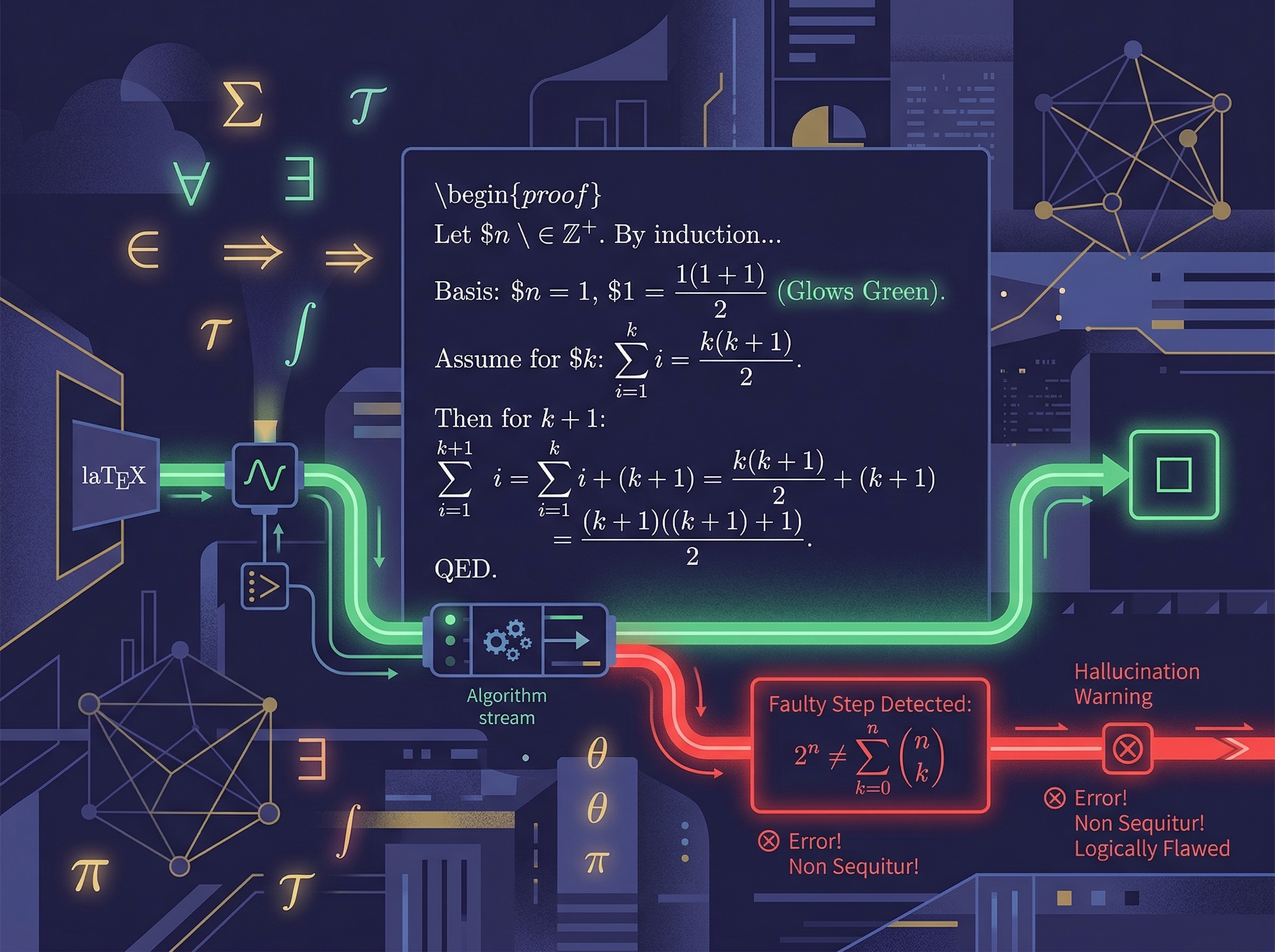

Here's a sentence that would have sounded insane five years ago: xAI's latest model uses a LaTeX compiler as a hallucination filter. Grok 4.2 "Fast" doesn't just generate mathematical answers. It runs them through a compiler internally, checking that every equation is well-formed LaTeX before showing it to you. If the LaTeX breaks, the answer gets rejected and regenerated.
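To make "if the LaTeX breaks" concrete, here is a minimal sketch of what a compile-style gate could look like. It is not xAI's code, and it doesn't invoke a real LaTeX engine: as a loudly labeled stand-in, it only checks brace balance and `\begin`/`\end` pairing. The function names (`braces_balanced`, `environments_matched`, `looks_compilable`) are hypothetical.

```python
import re

# Hypothetical stand-in for a real LaTeX compile step. It checks only
# brace balance and \begin/\end pairing, not full TeX semantics.
_ENV = re.compile(r"\\(begin|end)\{([^{}]*)\}")


def braces_balanced(src: str) -> bool:
    """Return True if every unescaped '{' has a matching '}'."""
    depth = 0
    i = 0
    while i < len(src):
        ch = src[i]
        if ch == "\\":
            i += 2  # skip the backslash and the character it escapes
            continue
        if ch == "{":
            depth += 1
        elif ch == "}":
            depth -= 1
            if depth < 0:
                return False  # closing brace with nothing open
        i += 1
    return depth == 0


def environments_matched(src: str) -> bool:
    """Return True if \\begin{x} and \\end{x} pairs nest correctly."""
    stack = []
    for kind, name in _ENV.findall(src):
        if kind == "begin":
            stack.append(name)
        elif not stack or stack.pop() != name:
            return False
    return not stack


def looks_compilable(src: str) -> bool:
    """Cheap gate: reject drafts a LaTeX engine would certainly choke on."""
    return braces_balanced(src) and environments_matched(src)
```

A draft like `\frac{a}{b` fails the gate, while `\frac{a}{b}` passes. Note what the check does not do: `2 + 2 = 5` passes just as happily, because it is perfectly valid markup.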

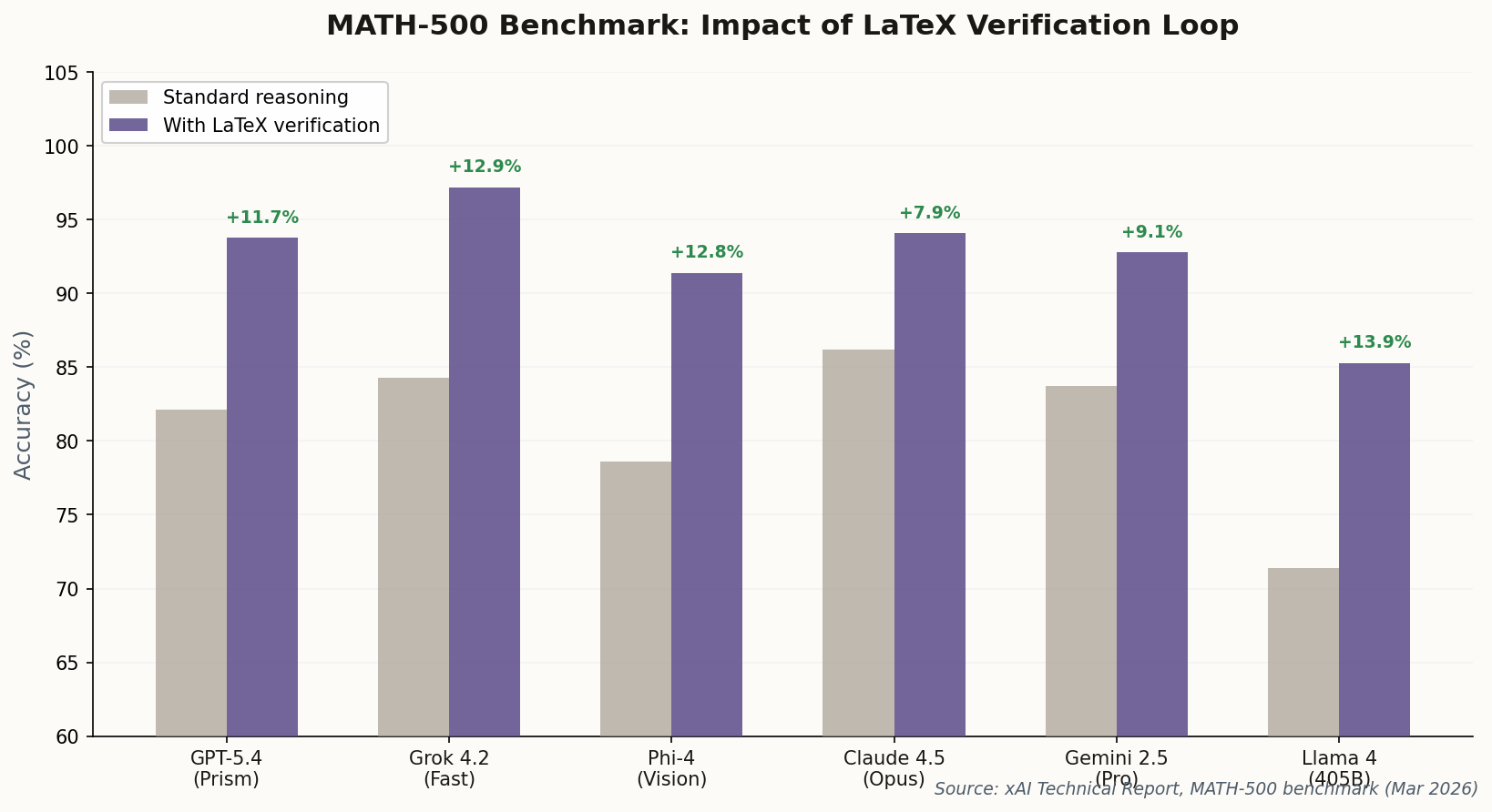

The numbers back up the audacity. On the MATH-500 benchmark, Grok 4.2 hit 97.2% accuracy, a figure xAI attributes directly to the LaTeX-verification loop. The secret is a four-agent architecture: one agent writes, another compiles, a third checks logical consistency, and a fourth decides whether to ship or retry.
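The write, compile, check, decide loop is easy to picture as code. What follows is a rough sketch under stated assumptions, not a description of how xAI actually wires it up: each "agent" is just a Python callable, and `run_pipeline`, `Verdict`, `ship_if_all_pass`, and the `max_attempts` cap are all hypothetical names invented for illustration.

```python
from dataclasses import dataclass
from typing import Callable, Optional


@dataclass
class Verdict:
    answer: Optional[str]  # None means every attempt was rejected
    attempts: int


def ship_if_all_pass(compiled_ok: bool, consistent_ok: bool) -> bool:
    """Hypothetical decider policy: ship only when both checks pass."""
    return compiled_ok and consistent_ok


def run_pipeline(
    prompt: str,
    write: Callable[[str, int], str],          # agent 1: drafts an answer
    compiles: Callable[[str], bool],           # agent 2: compile gate
    consistent: Callable[[str, str], bool],    # agent 3: logic check
    decide: Callable[[bool, bool], bool] = ship_if_all_pass,  # agent 4
    max_attempts: int = 3,                     # assumed retry cap
) -> Verdict:
    for attempt in range(1, max_attempts + 1):
        draft = write(prompt, attempt)
        compiled_ok = compiles(draft)
        # Skip the consistency check when the draft does not even compile.
        consistent_ok = compiled_ok and consistent(prompt, draft)
        if decide(compiled_ok, consistent_ok):
            return Verdict(draft, attempt)
    return Verdict(None, max_attempts)


if __name__ == "__main__":
    # Toy agents: the first draft has an unclosed brace, the second is fine.
    drafts = {1: r"\frac{8}{2", 2: r"\frac{8}{2} = 4"}
    result = run_pipeline(
        "8/2",
        write=lambda p, n: drafts[n],
        compiles=lambda d: d.count("{") == d.count("}"),
        consistent=lambda p, d: d.endswith("= 4"),
    )
    print(result)
```

Keeping the decider as its own function is the interesting design choice: the retry policy can change (ship on a partial pass, escalate after two failures) without touching the writer or the checkers.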

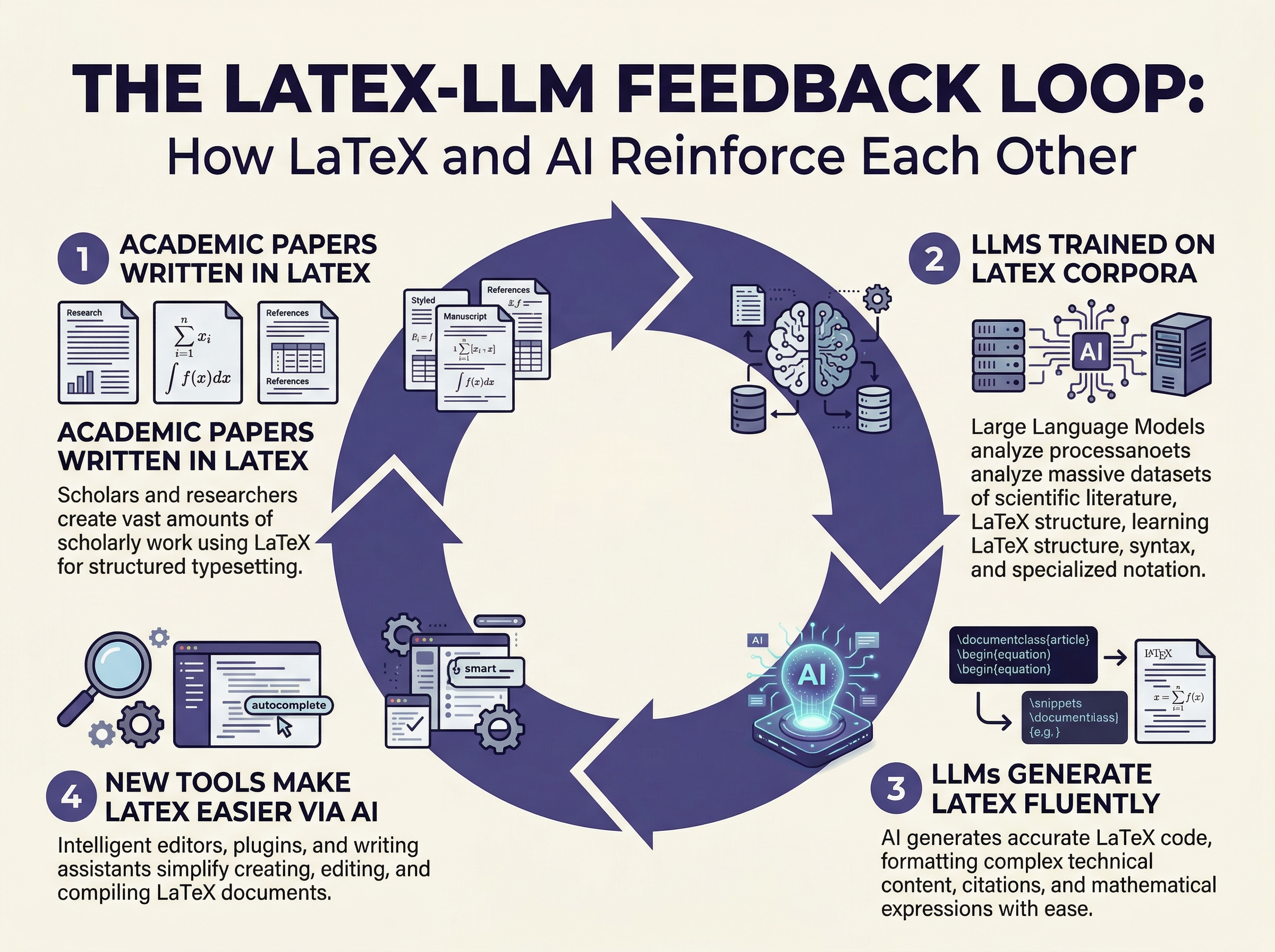

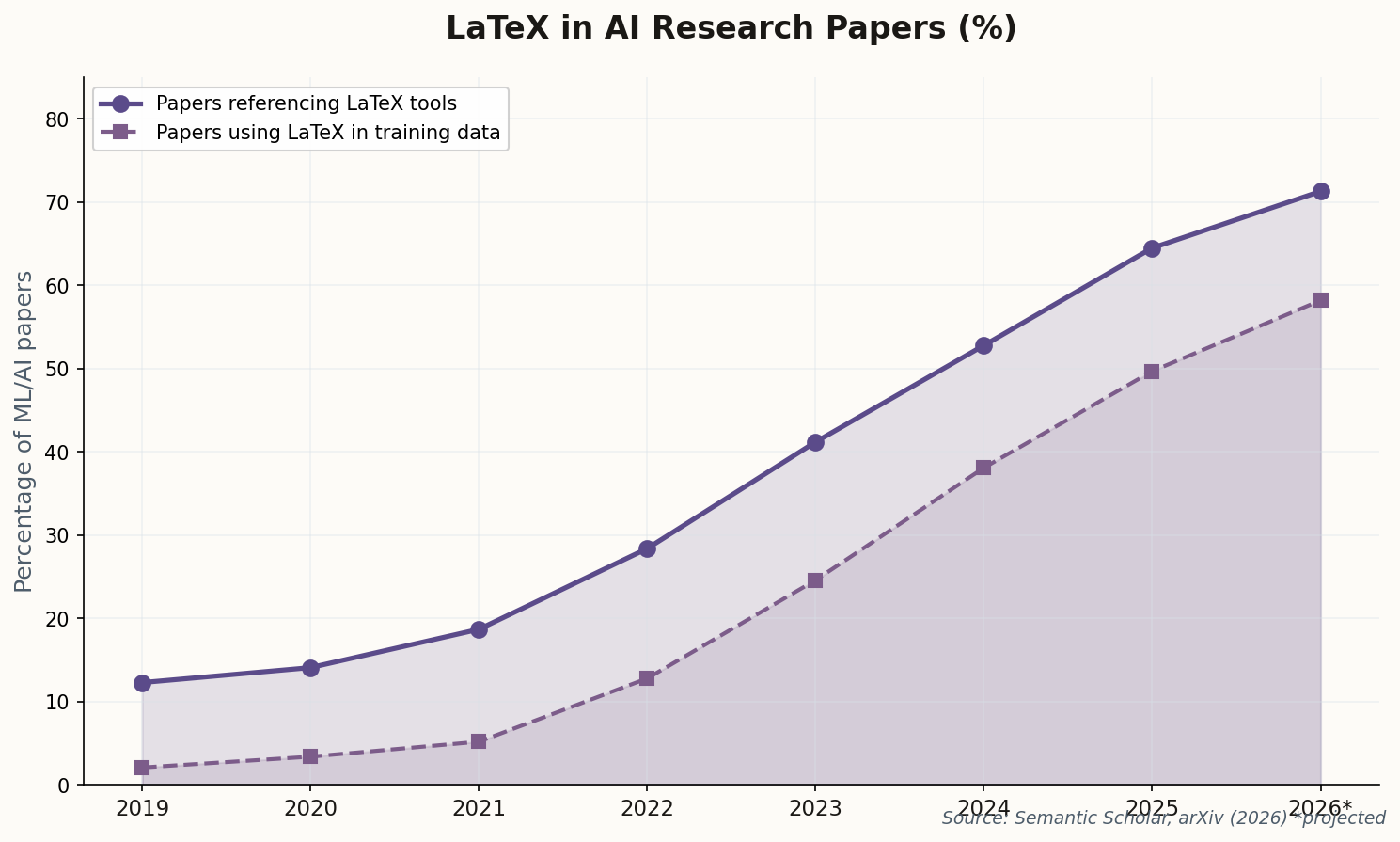

What's quietly revolutionary here isn't the accuracy itself; it's the philosophical shift. For years, the AI industry treated mathematical reasoning as a "scale will fix it" problem. xAI is saying: no, you need an external verification layer, and a LaTeX toolchain happens to be one of the most battle-tested pieces of software available for the job. The compiler isn't just formatting here; it's a gate. A clean compile only shows that the markup is well-formed, not that the math is right, which is why the design pairs it with a separate logical-consistency check. Watch for every major lab to adopt some variant of this within six months.