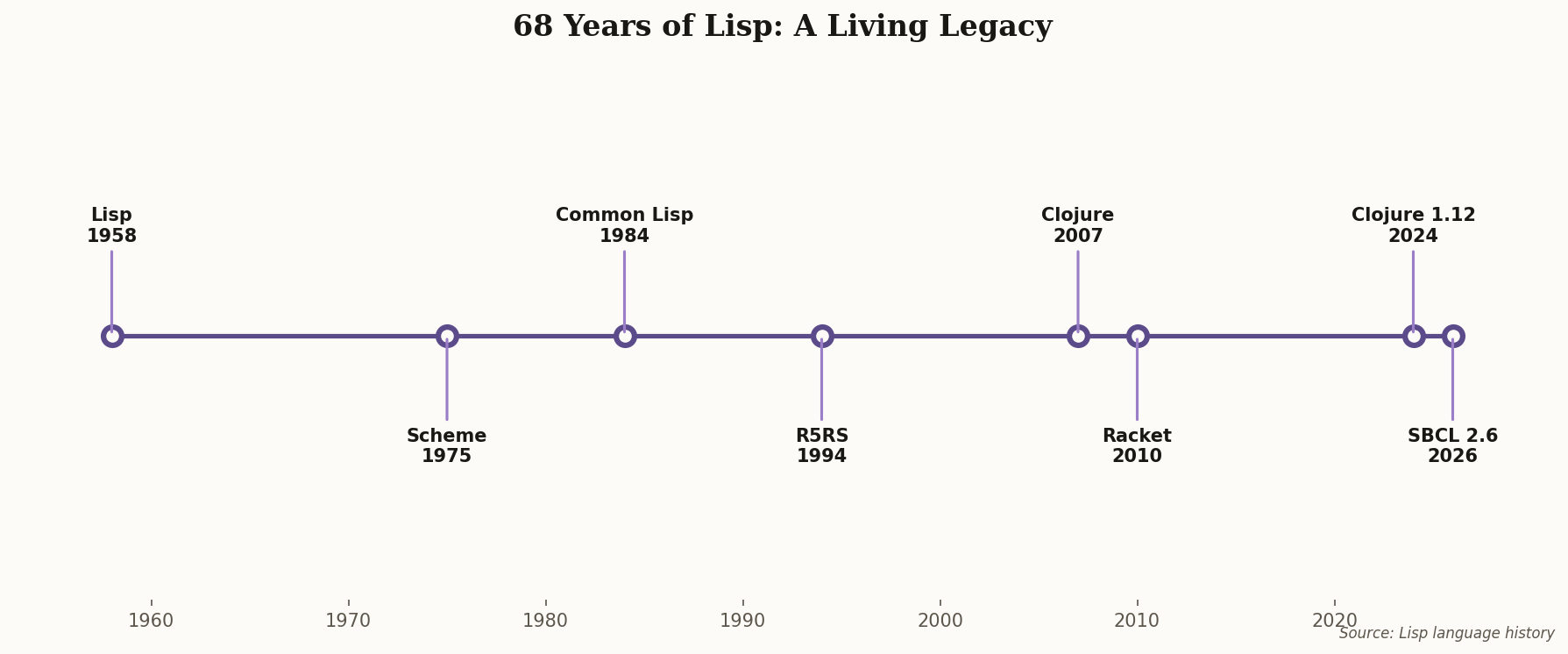

The 68-Year-Old Language That Just Shipped ARM64 Support

Here's a sentence that should make every "Lisp is dead" commentator feel slightly foolish: Steel Bank Common Lisp (SBCL) just shipped production-grade Windows ARM64 support in version 2.6.2. That's a descendant of a programming language created in 1958, running natively on a platform that barely existed five years ago.

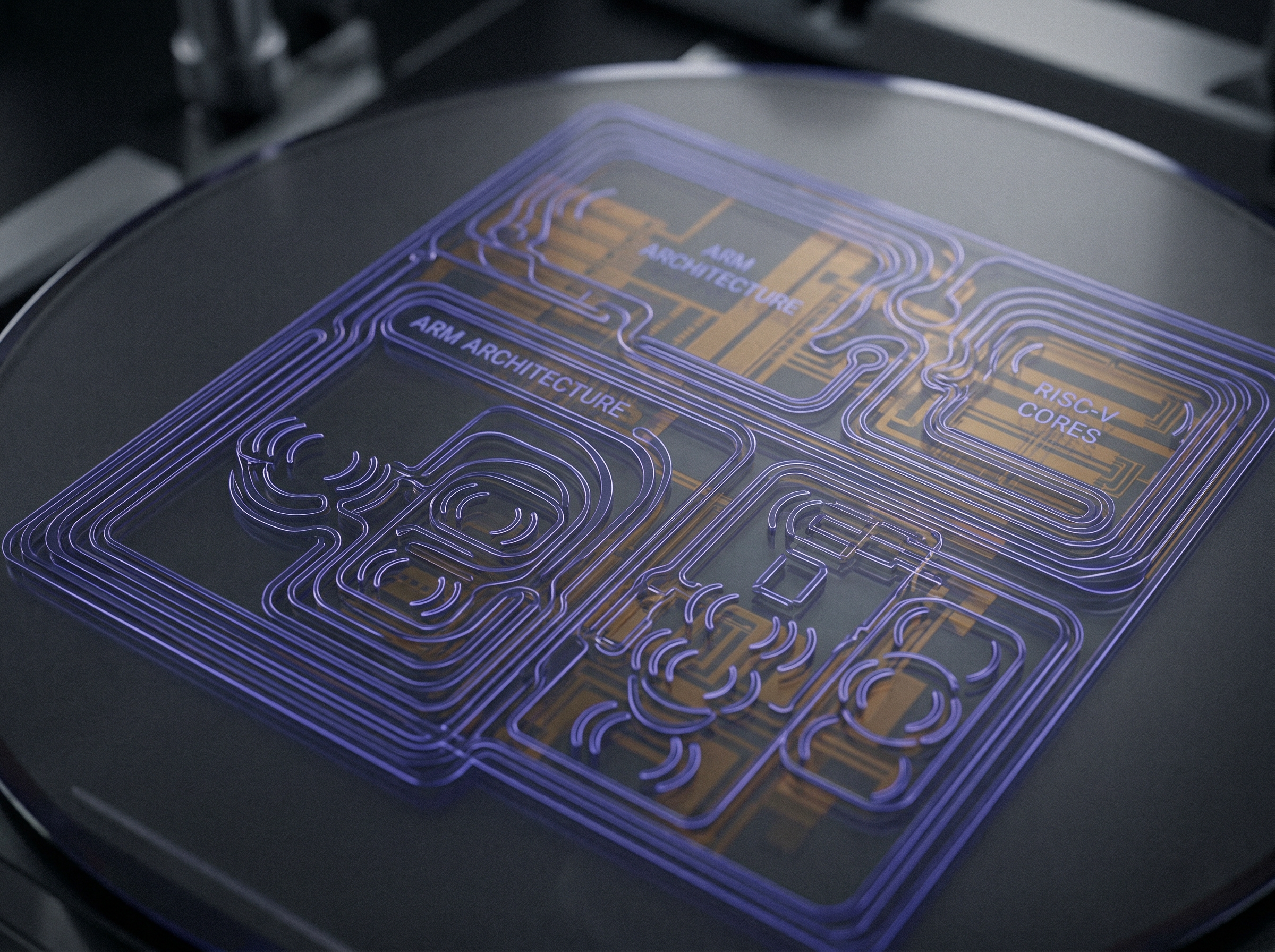

SBCL maintains a strict monthly release cycle — more disciplined than most VC-funded startups can manage for their SaaS products. The February release also includes critical optimizations for RISC-V and improvements to bignum arithmetic. While the JavaScript ecosystem churns through build tools like a teenager through streaming services, Common Lisp's flagship compiler quietly adapts to every new chip architecture that emerges.

When Apple shipped M-series silicon, SBCL was ready. When Qualcomm pushed Snapdragon X Elite for PCs, SBCL adapted. The language family that powered the first AI boom at MIT in the 1960s now runs on the chips powering the second one. That's not nostalgia; it's architectural resilience that most modern languages can only dream of.