A Joke Name for an Unkillable Tool

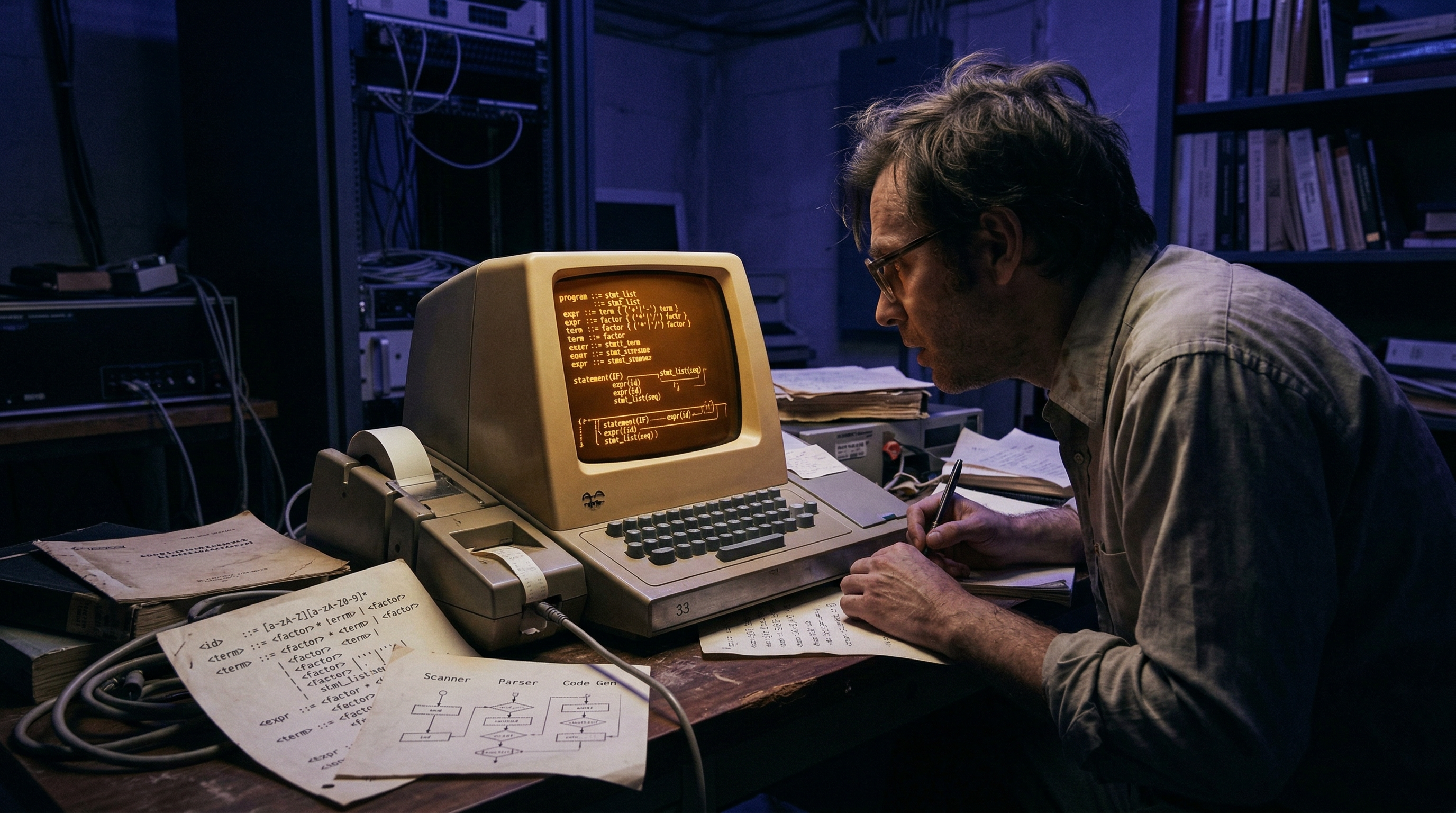

In the early 1970s, Stephen C. Johnson at Bell Labs wanted to add an exclusive-or operator to a compiler for the B programming language. A small request. But the existing compiler tools were so brittle that modifying the parser felt like performing surgery with a sledgehammer. So Johnson went to his colleague Alfred Aho, who pointed him toward Donald Knuth's dense theoretical work on LR parsing, and Johnson set out to make it practical.

He did. The result was Yacc, short for "Yet Another Compiler-Compiler", a self-deprecating name that stuck because, at Bell Labs, there was always another tool. Jeff Ullman reportedly quipped "Another compiler-compiler?" and the name became a permanent inside joke. In 1975, Mike Lesk (with help from a young intern named Eric Schmidt, who would later become CEO of Google) built Lex, a lexical analyzer generator designed to work in "close harmony" with Yacc. The pair became inseparable: Lex breaks raw text into tokens, Yacc assembles those tokens into meaning according to a formal grammar.

What made this pairing revolutionary wasn't the technology alone — it was the automation. Before Lex and Yacc, building a compiler was an artisan craft that took months or years. After them, a graduate student could define a grammar over lunch and have a working parser by dinner. They turned formal language theory from a blackboard exercise into a shipping product.

The Two-Phase Magic Trick

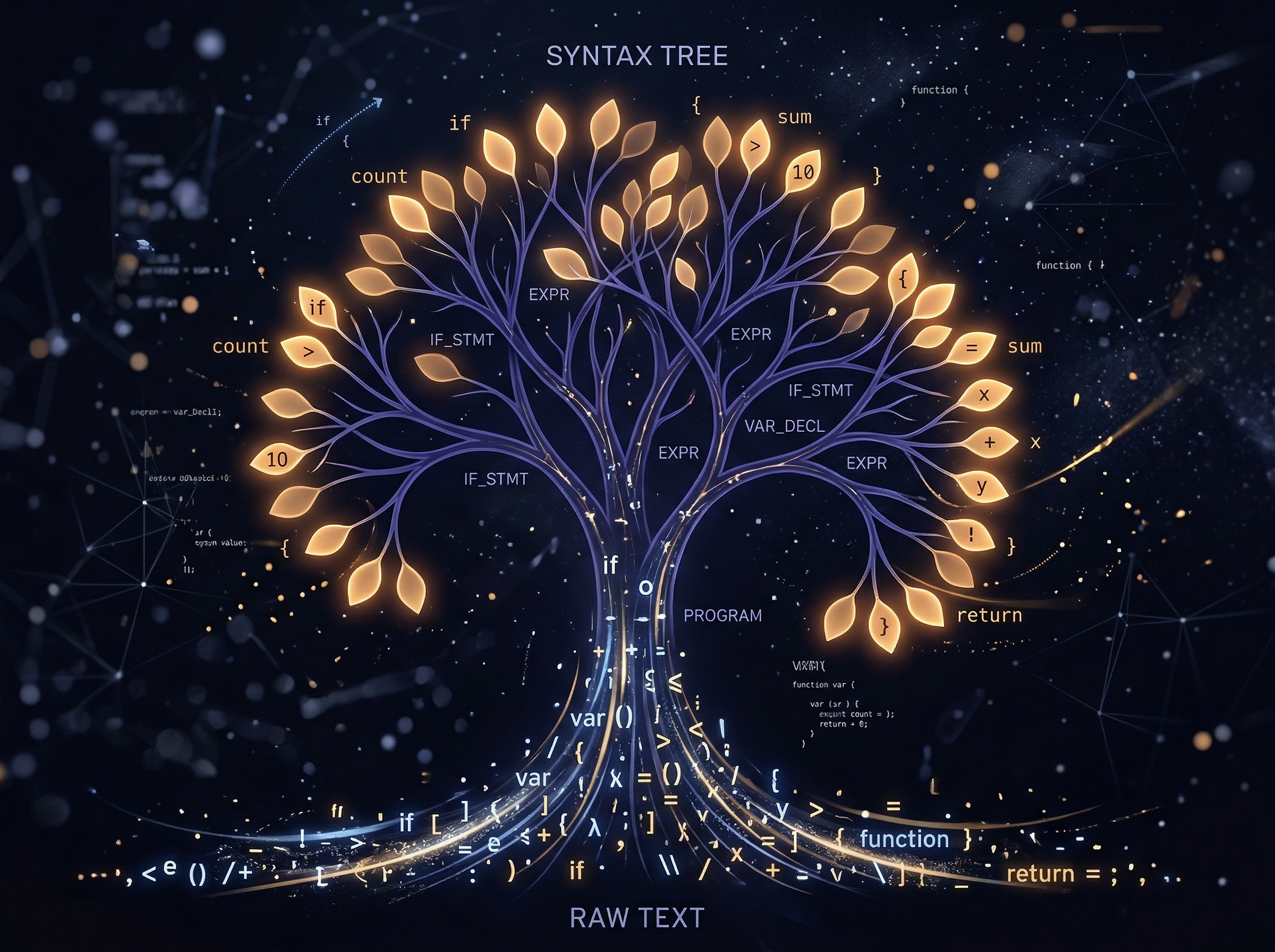

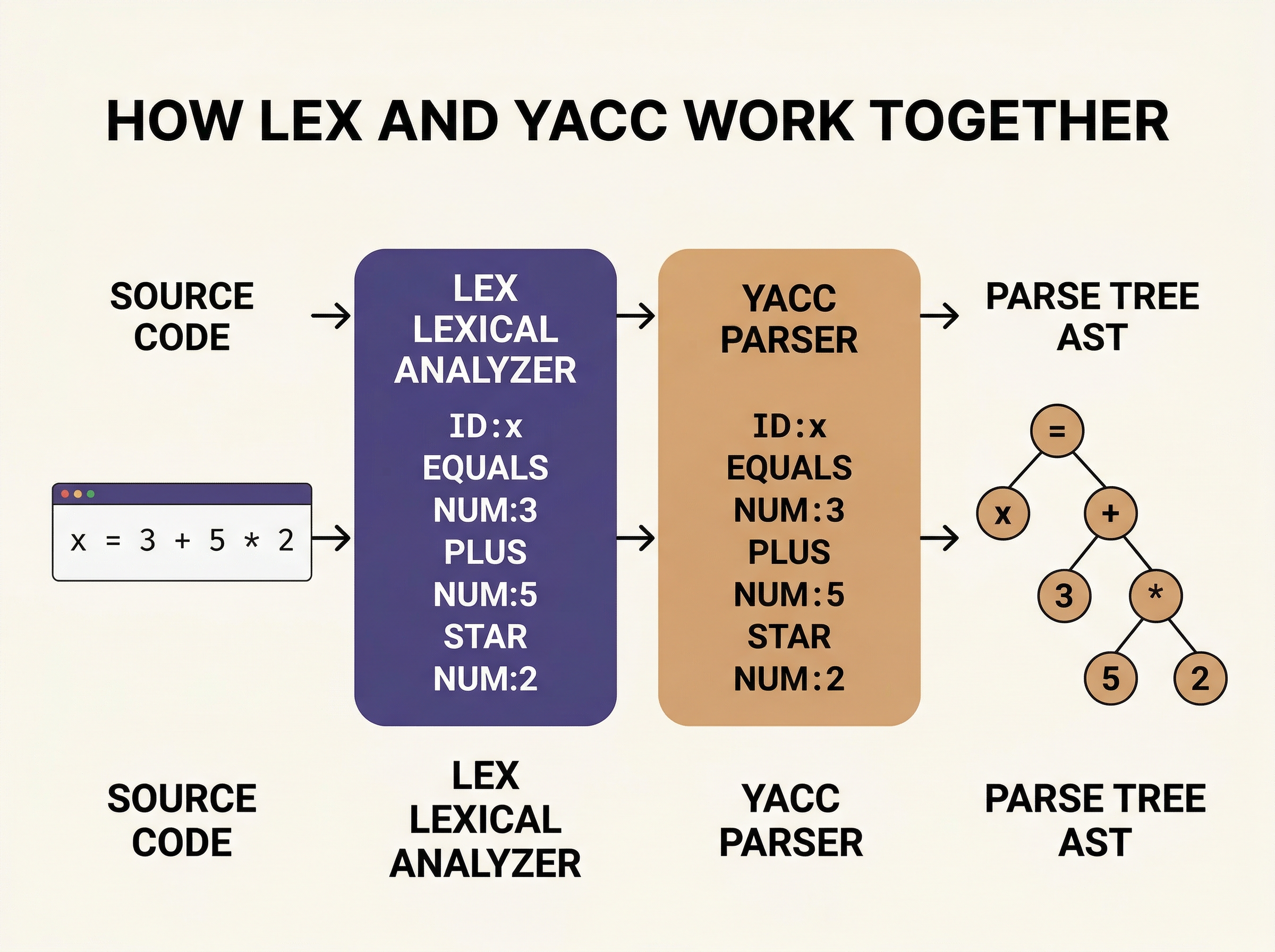

Every time your computer reads a line of code — any code, in any language — two things happen in sequence. First, the raw characters are scanned into tokens: the keywords, operators, numbers, and names that form the vocabulary. Then those tokens are arranged into a parse tree: a hierarchical structure that captures the meaning, the grammar, the actual intent of what you wrote.

This is exactly what Lex and Yacc do. Lex handles phase one: you give it a set of regular expressions (patterns like "one or more digits" or "the word while"), and it generates a deterministic finite automaton, a blazing-fast state machine that scans input character by character, emitting tokens. Yacc handles phase two: you give it a context-free grammar (a set of rules like "an expression is a number, or an expression plus an expression"), and it generates an LALR(1) parser that recognizes those rules in the token stream and runs the action attached to each one, which is typically where the syntax tree gets built.
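To make phase one concrete, here is a minimal sketch of a Lex-style scanner in Python. It is an illustration under stated assumptions, not what Lex generates: the token names and patterns are hypothetical, and Python's `re` module is a backtracking matcher, not a compiled DFA. It also picks the first alternative that matches, whereas Lex prefers the longest match and uses rule order only to break ties.

```python
import re

# Hypothetical token names and patterns, listed in priority order
# the way rules would appear in a Lex specification.
TOKEN_SPEC = [
    ("NUM",    r"\d+"),            # one or more digits
    ("WHILE",  r"while\b"),        # the word "while" as a whole word
    ("ID",     r"[A-Za-z_]\w*"),   # identifiers
    ("EQUALS", r"="),
    ("PLUS",   r"\+"),
    ("STAR",   r"\*"),
    ("SKIP",   r"\s+"),            # whitespace, matched but discarded
]

# One combined pattern with a named group per token kind.
MASTER = re.compile("|".join(f"(?P<{name}>{pattern})" for name, pattern in TOKEN_SPEC))


def tokenize(text):
    """Scan text left to right and return a list of (kind, lexeme) pairs."""
    tokens = []
    pos = 0
    while pos < len(text):
        match = MASTER.match(text, pos)
        if match is None:
            raise SyntaxError(f"unexpected character {text[pos]!r} at offset {pos}")
        if match.lastgroup != "SKIP":
            tokens.append((match.lastgroup, match.group()))
        pos = match.end()
    return tokens


print(tokenize("while x = 42"))
# [('WHILE', 'while'), ('ID', 'x'), ('EQUALS', '='), ('NUM', '42')]
```

The scanner knows nothing about grammar: it would happily tokenize "= = 3 while", and it is the parser's job to reject that.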

Take the expression x = 3 + 5 * 2. Lex sees characters and emits tokens: [ID:x] [EQUALS] [NUM:3] [PLUS] [NUM:5] [STAR] [NUM:2]. Yacc takes those tokens and, following its grammar rules, builds a tree where multiplication binds tighter than addition — encoding the mathematical precedence you learned in grade school into executable structure. The beauty is that neither tool needs to know about the other's internals. Lex doesn't care about grammar. Yacc doesn't care about character patterns. Clean separation. Unix philosophy incarnate.
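The tree for that expression can be sketched in a few lines of Python. One loud caveat: this is a hand-written precedence-climbing parser, swapped in because it fits on a page, not the table-driven LALR parser that Yacc generates. The token list is written out by hand, and the precedence table and function names are assumptions made for illustration.

```python
# Token stream for "x = 3 + 5 * 2", written out by hand so the sketch is self-contained.
TOKENS = [("ID", "x"), ("EQUALS", "="), ("NUM", "3"), ("PLUS", "+"),
          ("NUM", "5"), ("STAR", "*"), ("NUM", "2")]

# Higher number binds tighter, playing the role of precedence declarations in a grammar file.
PRECEDENCE = {"PLUS": 1, "STAR": 2}


def parse_assignment(tokens):
    """Parse `ID EQUALS expression` and return a nested-tuple tree."""
    if len(tokens) < 3 or tokens[0][0] != "ID" or tokens[1][0] != "EQUALS":
        raise SyntaxError("expected: ID = expression")
    tree, pos = parse_expression(tokens, 2, 1)
    if pos != len(tokens):
        raise SyntaxError(f"unexpected token {tokens[pos]!r}")
    return ("=", tokens[0][1], tree)


def parse_expression(tokens, pos, min_prec):
    """Precedence climbing: consume operators whose precedence is at least min_prec."""
    left, pos = parse_operand(tokens, pos)
    while pos < len(tokens) and PRECEDENCE.get(tokens[pos][0], 0) >= min_prec:
        kind, lexeme = tokens[pos]
        # Left-associative: the right operand may only contain tighter-binding operators.
        right, pos = parse_expression(tokens, pos + 1, PRECEDENCE[kind] + 1)
        left = (lexeme, left, right)
    return left, pos


def parse_operand(tokens, pos):
    """Parse a single number or identifier."""
    if pos >= len(tokens):
        raise SyntaxError("unexpected end of input")
    kind, lexeme = tokens[pos]
    if kind == "NUM":
        return int(lexeme), pos + 1
    if kind == "ID":
        return lexeme, pos + 1
    raise SyntaxError(f"unexpected token {tokens[pos]!r}")


tree = parse_assignment(TOKENS)
print(tree)
# ('=', 'x', ('+', 3, ('*', 5, 2)))
```

The multiplication ends up nested inside the addition, which is exactly the "binds tighter" relationship expressed as structure. The parser never looks at a raw character; it sees only token kinds and lexemes.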

The Foundation Beneath Everything

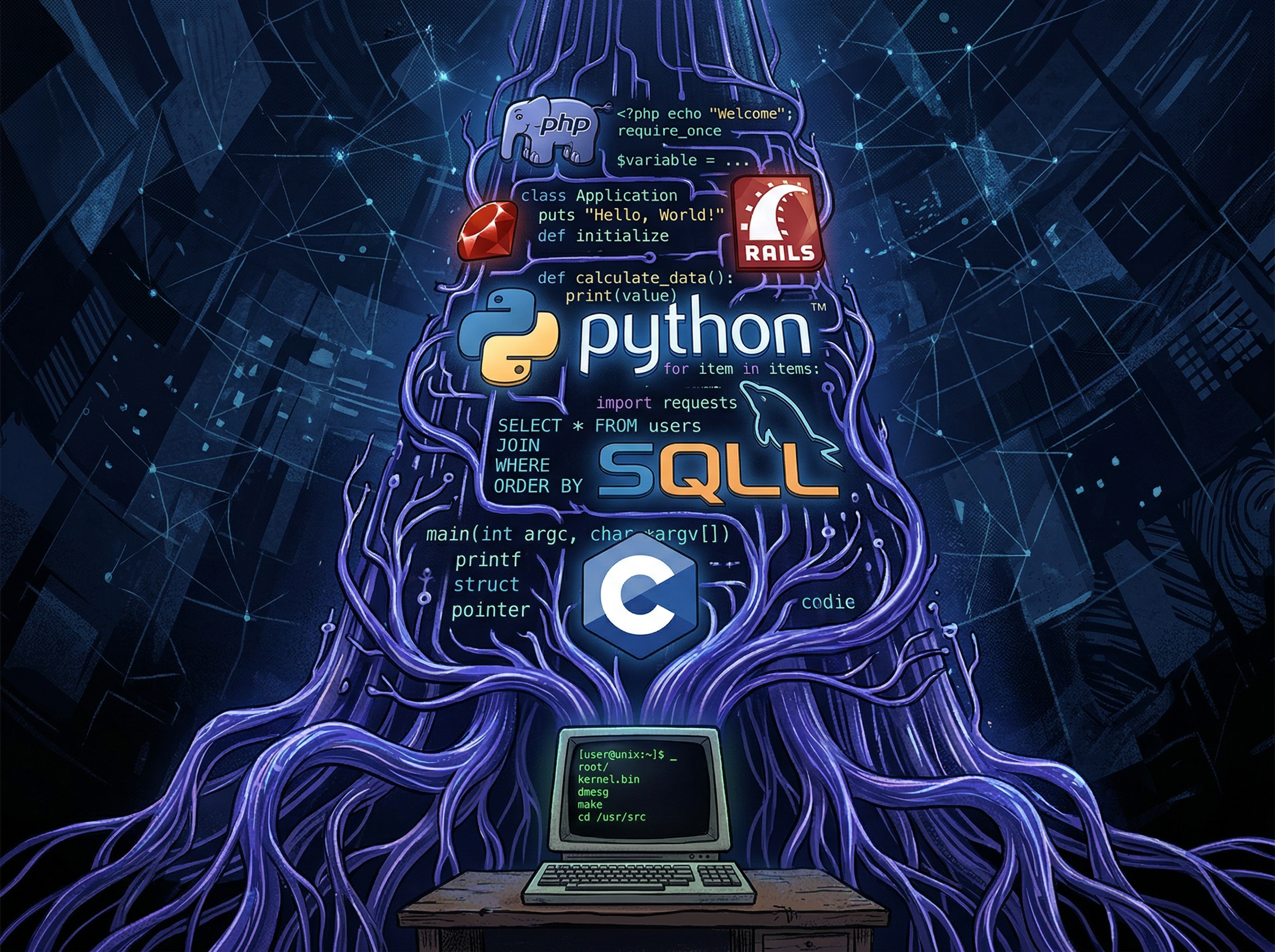

The list of software built on Yacc (or its GNU successor Bison) reads like a who's-who of computing. It starts with the Portable C Compiler: Johnson used Yacc to build PCC in 1978, and because the parser was generated from a clean grammar, porting C to a new machine became largely a matter of swapping the code generator, not rewriting the language. PCC carried C, and Unix with it, to dozens of computer architectures.

Then the cascade. PostgreSQL still uses Flex and Bison for its SQL parser today: the grammar file gram.y defines everything from SELECT to window functions with mathematical precision. Ruby's long-standing parser is a legendary grammar file of more than 10,000 lines called parse.y, written in Bison syntax, that pushes the approach to its limits. PHP was transformed from a collection of "Personal Home Page" scripts into a real language when its creators rewrote it with a Yacc-style parser, and the Zend Engine, which by common survey estimates powers roughly three-quarters of websites with a known server-side language, still uses a Bison grammar at its core.

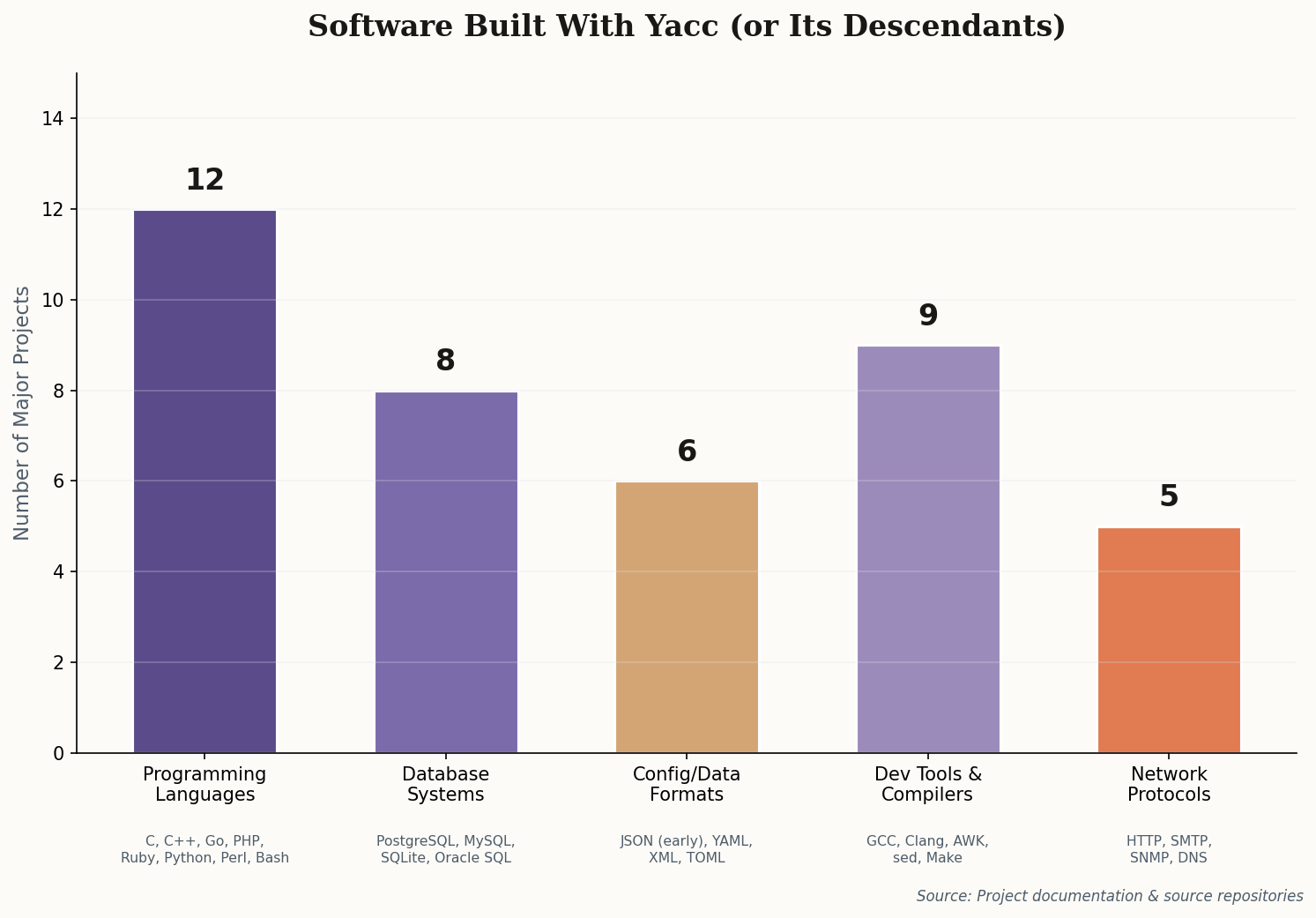

The numbers are staggering. At least 12 major programming languages, 8 database systems, 9 developer tools, and 5 network protocol parsers trace their lineage directly to Yacc's approach. That's not influence — that's infrastructure.

Tree-sitter and the Parser Renaissance

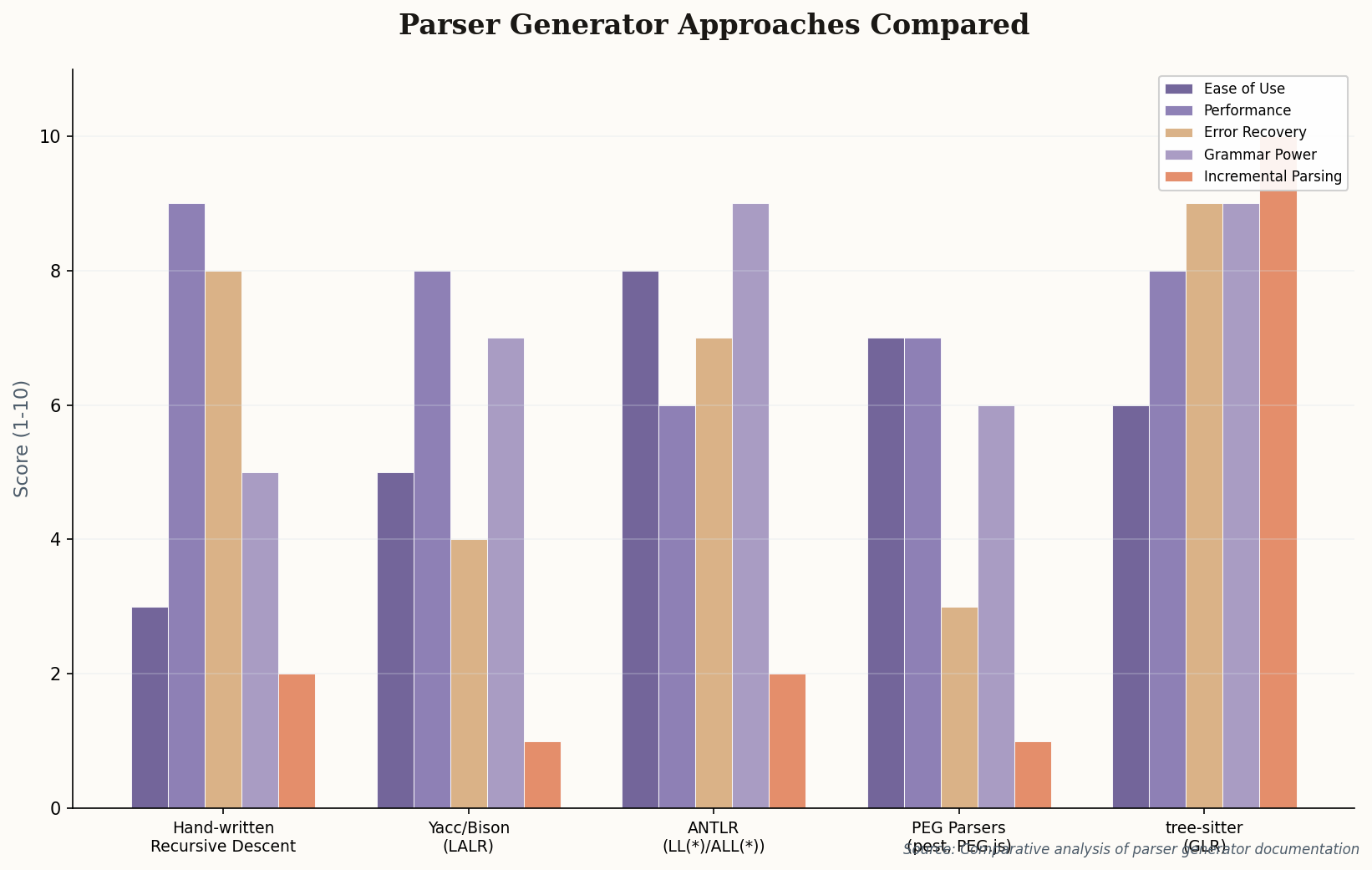

For decades, Yacc had a limitation that everyone tolerated: it was designed to parse a whole file from start to finish, with only rudimentary recovery from syntax errors through its special error token. That's fine for a compiler, which rejects broken code anyway. But it's a poor fit for a text editor, which needs to understand your code while you're typing it: mid-expression, mid-thought, mid-typo.

Enter tree-sitter. Created by Max Brunsfeld at GitHub and shipped in the Atom editor in 2018, tree-sitter takes the Lex/Yacc concept and solves the editor problem with two innovations: incremental parsing (after an edit, reuse the unchanged parts of the previous syntax tree and re-parse only the affected region, not the whole file) and error recovery (gracefully handle the broken syntax that exists while you're mid-keystroke). It uses a GLR algorithm, a generalized version of the same LR parsing theory that Knuth invented and Johnson made practical in Yacc.
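The reuse idea behind incremental parsing can be shown with a deliberately tiny toy in Python. To be clear about what it is not: this is neither tree-sitter's algorithm nor its API. It caches tokens per line and re-lexes only lines whose text changed, whereas a real incremental parser reuses subtrees of the previous syntax tree. The class name, the `relexed` counter, and the token pattern are all hypothetical.

```python
import re

# Hypothetical token pattern: a number, a name, or any single non-space symbol.
# The catch-all \S means stray characters become tokens rather than errors.
TOKEN_RE = re.compile(r"\d+|[A-Za-z_]\w*|\S")


class LineCache:
    """Toy incremental lexer: reuse cached tokens for lines whose text is unchanged."""

    def __init__(self):
        self.lines = []     # text of each line as of the last update
        self.tokens = []    # cached token list for each line
        self.relexed = 0    # lines actually re-lexed by the most recent update

    def update(self, text):
        new_lines = text.split("\n")
        new_tokens = []
        self.relexed = 0
        for index, line in enumerate(new_lines):
            if index < len(self.lines) and self.lines[index] == line:
                new_tokens.append(self.tokens[index])       # unchanged: reuse old work
            else:
                new_tokens.append(TOKEN_RE.findall(line))   # changed or new: redo
                self.relexed += 1
        self.lines, self.tokens = new_lines, new_tokens
        return new_tokens


cache = LineCache()
cache.update("x = 1\ny = 2\nz = 3")
print(cache.relexed)   # 3: everything is new on the first pass
cache.update("x = 1\ny = 20\nz = 3")
print(cache.relexed)   # 1: only the edited line was re-lexed
```

Because lines are compared by position, inserting a line at the top invalidates everything below it. Handling edits that shift text without redoing the work is the hard part that tools like tree-sitter take on.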

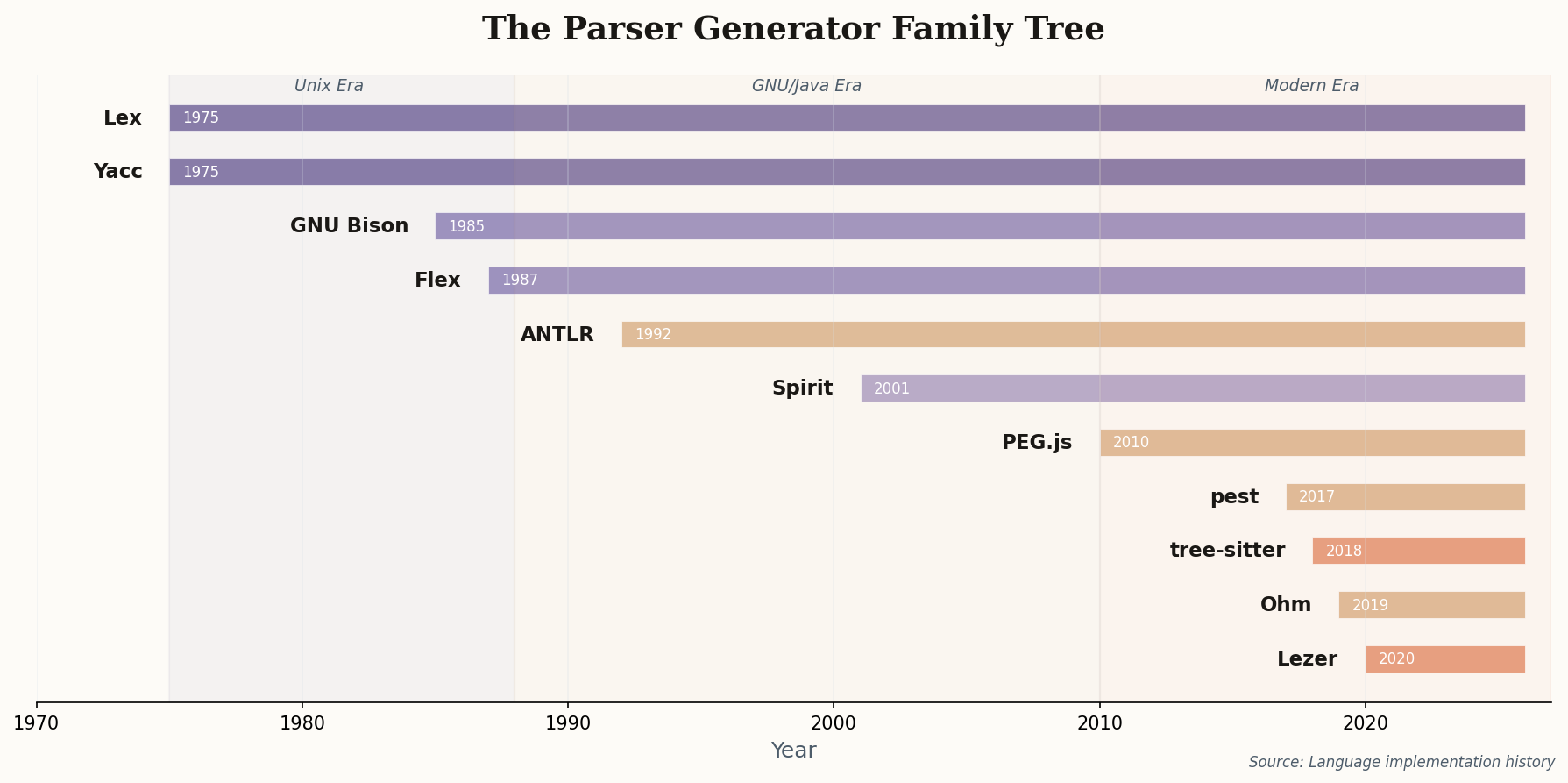

Today tree-sitter powers syntax highlighting in Neovim, Zed, GitHub's code navigation, and dozens of other tools. Alongside it, ANTLR dominates enterprise language processing, pest brings parser generators to Rust, and Lezer powers CodeMirror. The tools change, the algorithms evolve, but the core insight (define your grammar formally and let the machine build your parser) remains exactly what Johnson shipped in the early 1970s.

16 Kilobytes and a Grammar File

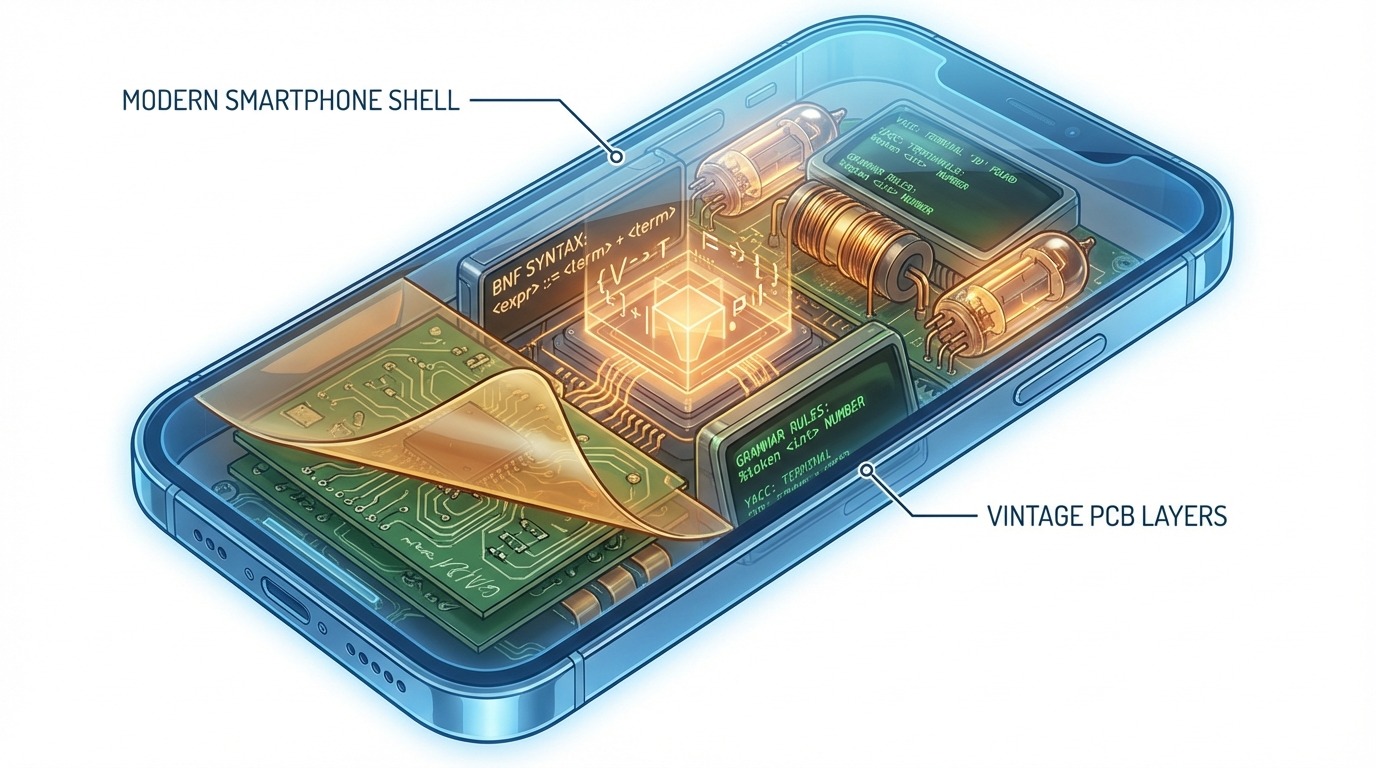

Here's where the story gets strange. While Silicon Valley chases the next framework, Lex and Yacc — the originals, not the successors — are still running in places most software engineers will never see. Oil exploration rigs use them to parse well-log data: complex streams from borehole instruments that need to be decoded in real-time on hardware with kilobytes, not gigabytes, of memory. Embedded medical device firmware uses them for protocol parsing where a bug doesn't mean a 500 error — it means a device failure in an operating room.

The reason is deceptively simple: Lex and Yacc generate plain C code with almost no dependencies. No runtime. No garbage collector. Nothing beyond a small slice of the C standard library. The output compiles on nearly any platform with a C compiler, from a 16KB microcontroller to a mainframe. Try that with a JSON parser written in Python.

The network equipment in your office probably runs a CLI parser built with Lex and Yacc. The Cisco IOS command line, Juniper's JUNOS, and dozens of telecom systems use them because when you're parsing configuration commands on a router that handles a million packets per second, you need a parser that's as close to bare metal as software gets. They aren't legacy. They're bedrock.

Yet Another Reason to Care

The name "Yet Another Compiler-Compiler" spawned a naming convention that infected all of computing. YAML (originally Yet Another Markup Language, later redefined as YAML Ain't Markup Language). Yahoo! (Yet Another Hierarchical Officious Oracle). YARN (Yet Another Resource Negotiator). The self-deprecating "yet another" became a cultural marker for the Unix hacker ethos: build tools, name them modestly, and let the work speak.

But the deeper legacy is educational. The Dragon Book (Compilers: Principles, Techniques, and Tools by Aho, Sethi, and Ullman, joined by Lam for the second edition) used Lex and Yacc as its laboratory instruments. For 40 years, computer science students worldwide have built their first calculator, their first mini-language, their first taste of the Chomsky hierarchy using these tools. They are the bridge between pure mathematics and working software: the moment where automata theory stops being abstract and starts compiling.

The quiet truth: You may never have used Lex and Yacc directly. But behind many of the programming languages you've written in, the SQL queries you've run, and the config files you've parsed, somewhere in that stack a grammar was defined and a parser was generated. The invisible engines are still running.