Where It All Began: Room 2C-644

Every language has an origin myth. C's is better than most, because it's actually true: two guys in a New Jersey office, annoyed that their computer couldn't run the operating system they wanted, decided to build both the OS and the language to write it in. At the same time.

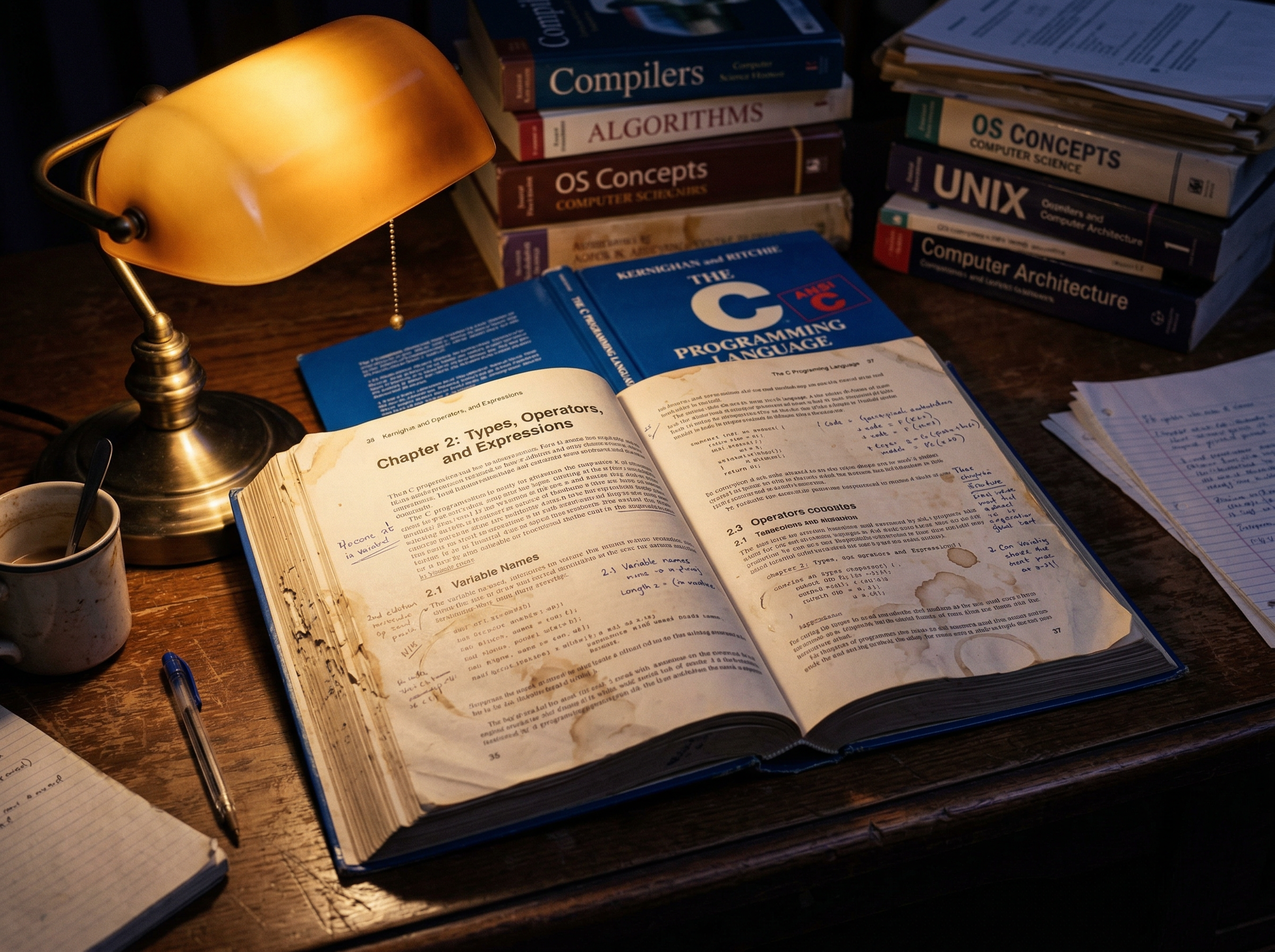

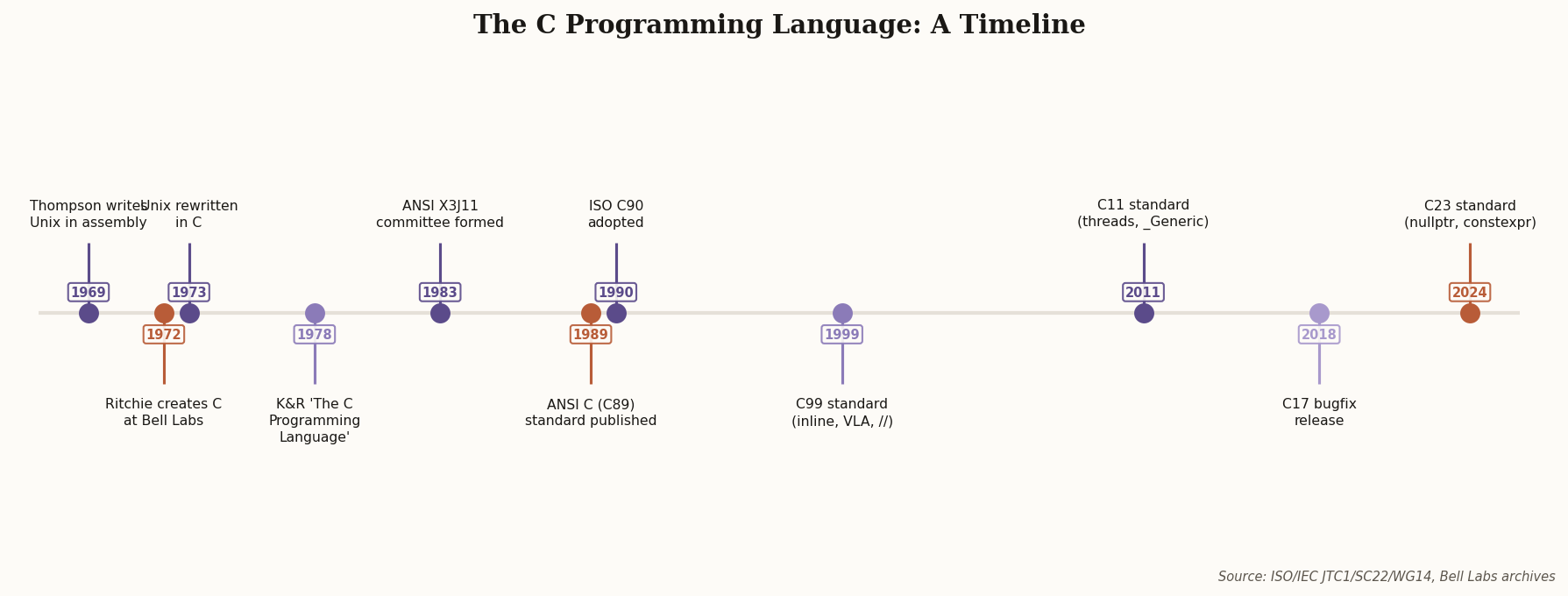

In 1969, Ken Thompson wrote a stripped-down operating system for the PDP-7 in assembly language. He also created B, a typeless language descended from Martin Richards' BCPL. B worked fine on the word-oriented PDP-7, where every value was one machine word. Then Bell Labs got a PDP-11, which was byte-addressable, and B's lack of types became a showstopper: a language that treats everything as a word has no natural way to handle individual bytes.

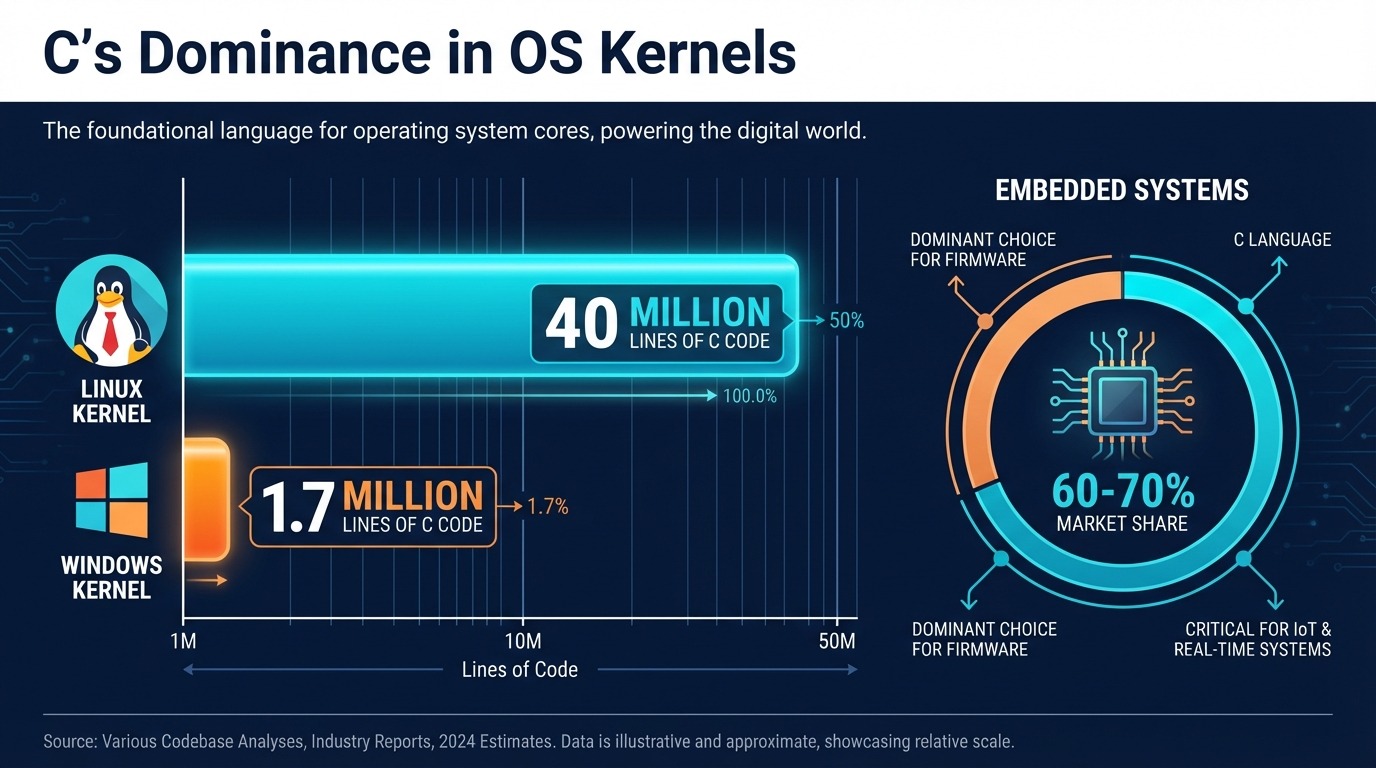

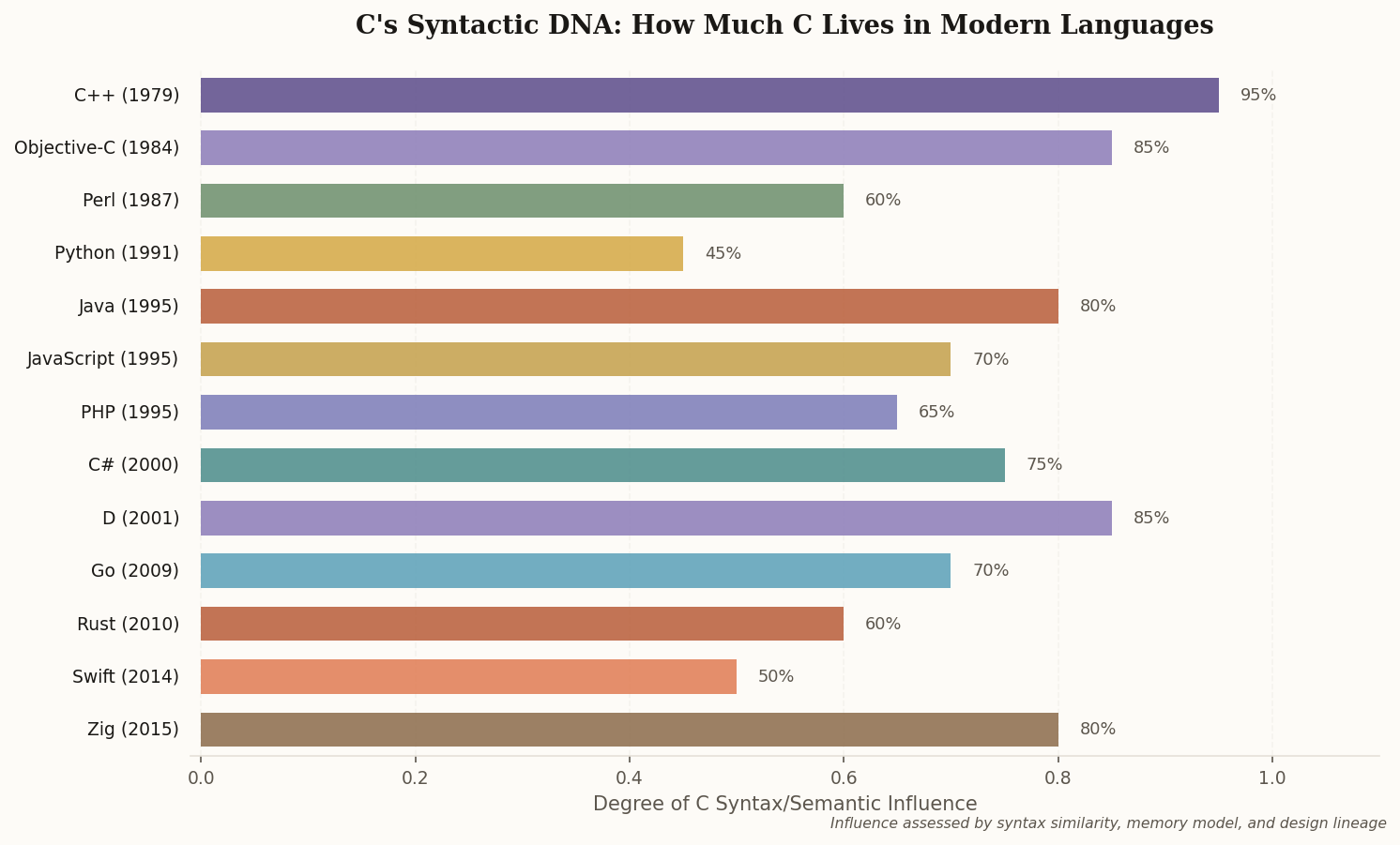

Dennis Ritchie took B and added what it needed: types. int, char, arrays, pointers, structures. The language passed through "New B" (NB) before becoming C around 1972. By 1973, Ritchie and Thompson had done something almost unheard of at the time: they rewrote the Unix kernel in C. Roughly 90% of the kernel was now in a high-level language, with only the hardware-critical 10% remaining in assembly.

"C is quirky, flawed, and an enormous success." — Dennis Ritchie

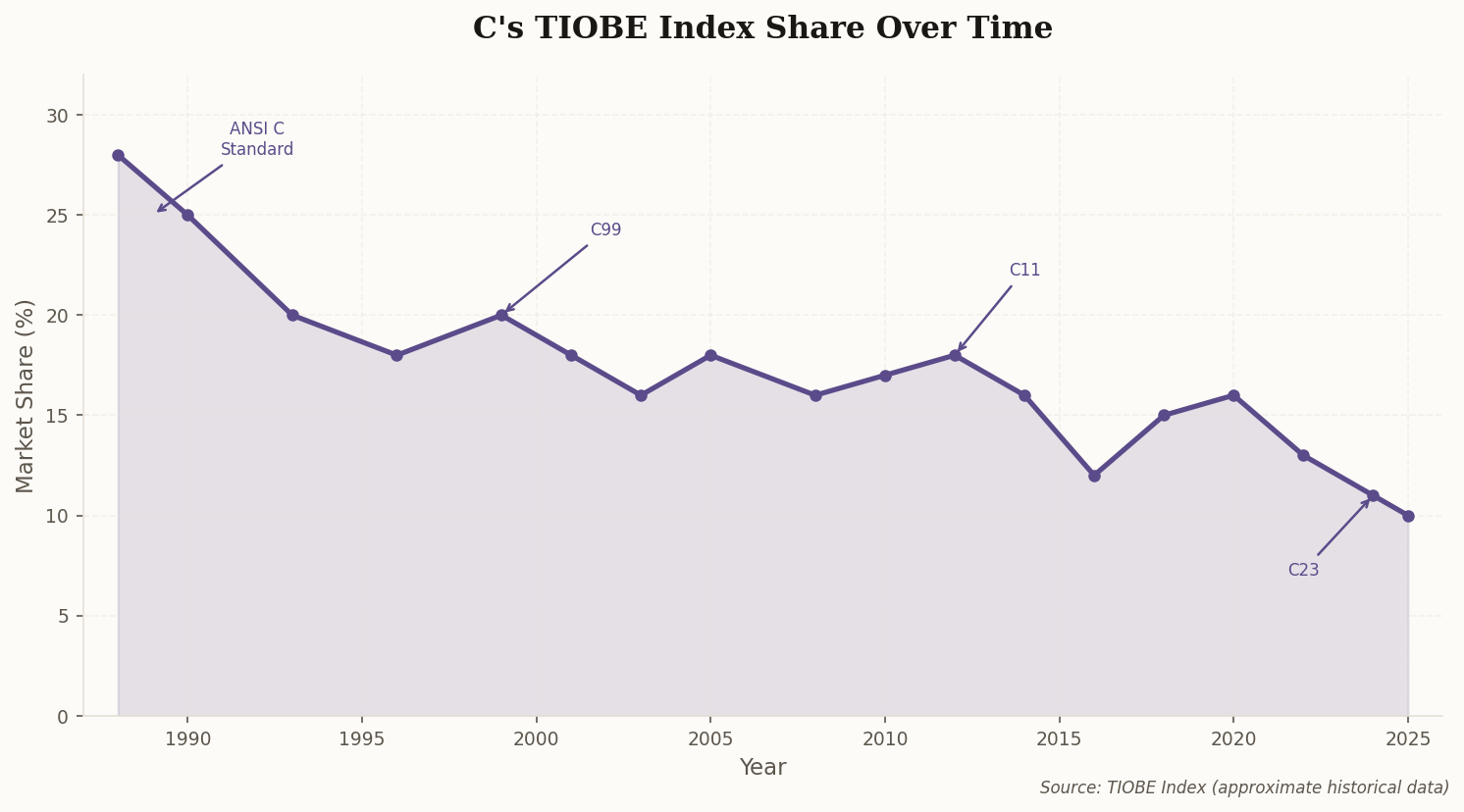

That decision, rewriting an operating system in a high-level language, is what made Unix portable: bringing it up on a new machine meant retargeting the C compiler and rewriting a small amount of assembly, not the whole system. And portable Unix meant C would spread to every machine that ran it.