The Term Nobody Wants Anymore

Here's the paradox of AGI in March 2026: the closer we get to something that acts like it, the less anyone wants to call it that. Leaders at OpenAI and Anthropic are now publicly distancing themselves from the term, calling it "marketing hypnosis" and "not super useful." That's a fascinating rhetorical pivot from companies that have spent billions pursuing exactly this thing.

What changed? The new wave of "agentic" models — OpenAI's GPT-5.3-Codex and Anthropic's Claude Opus 4.6 — don't just chat. They manage simulated businesses. They execute end-to-end software engineering without human intervention. They run for hours on complex tasks. If that's not general intelligence, it's doing a convincing impression.

The real story isn't whether these models are "AGI." It's that the industry is quietly abandoning the idea of a single arrival moment — no champagne-popping singularity announcement — in favor of a gradual integration story. AGI isn't coming. It's already seeping in through the cracks, one autonomous agent at a time. The question is whether anyone will notice the moment it tips from "useful tool" to "something else entirely."

Musk Wants AGI to Have Hands

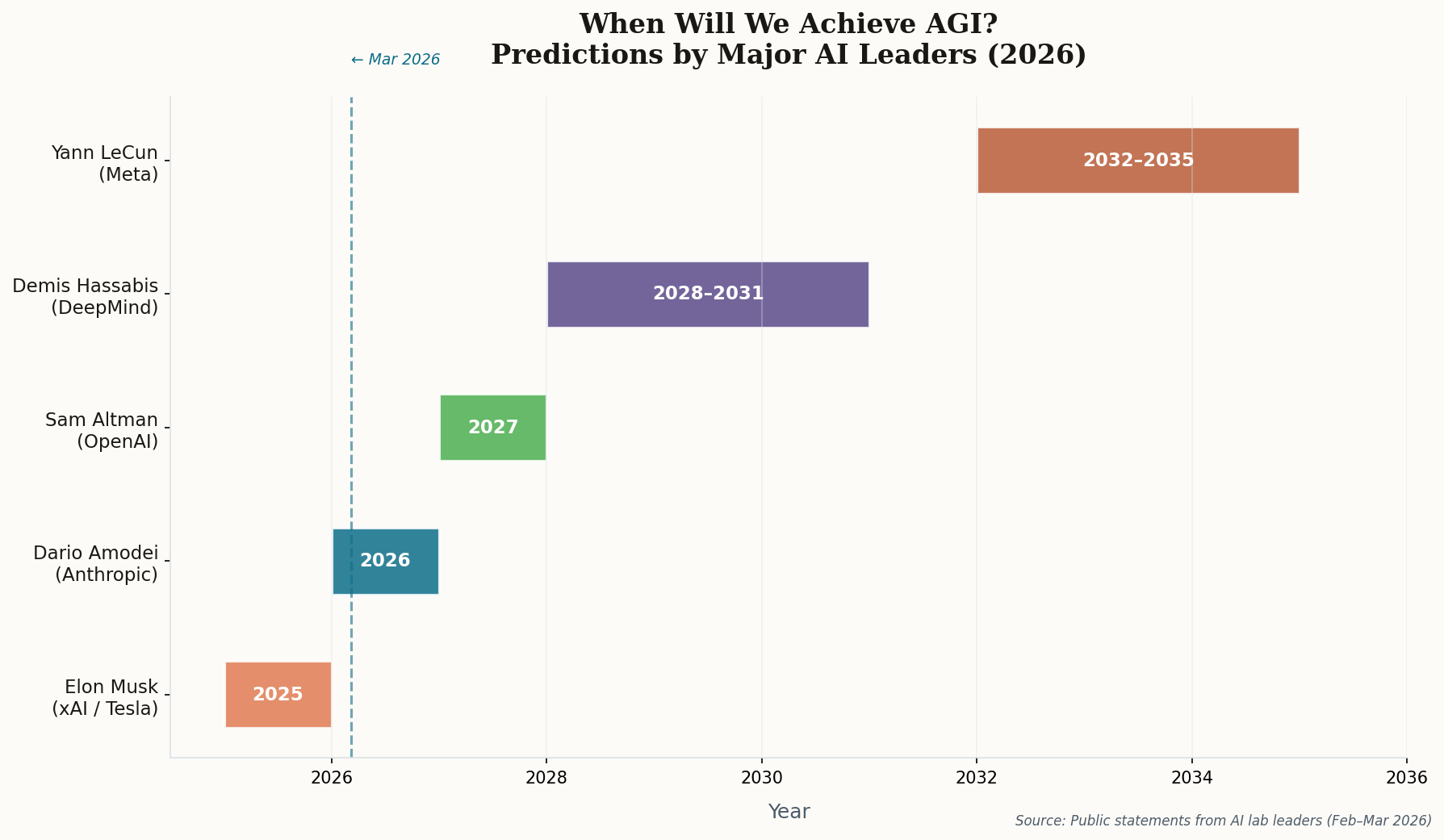

Elon Musk has declared 2026 the "Year of the Singularity" — which is either prescient or the most expensive marketing slogan in history. But his twist is genuinely interesting: he's arguing that Tesla, not xAI, will be the first to achieve AGI. The reasoning? True general intelligence requires a physical body that can interact with the real world.

It's a bold move to position a car company as the frontrunner in the AGI race. But Musk's logic has an internal consistency worth taking seriously: the Optimus humanoid robot program, powered by xAI's massive 1-million-GPU Colossus supercomputer, represents a convergence of physical manipulation and digital reasoning that no pure-software lab can match. If your definition of AGI includes "can fold laundry and fix a leaky faucet," then Musk might be right.

The counterargument writes itself: a robot that can navigate a warehouse isn't necessarily more "generally intelligent" than a model that can write a novel, diagnose a rare disease, and negotiate a contract. But Musk is doing something strategically smart here — he's expanding the definition of AGI to one where his companies have a structural advantage. Classic goalpost relocation.

Can a Judge Define Intelligence?

With one hand, Musk repositions Tesla as an AGI company; with the other, his lawyers argue that OpenAI already built AGI and needs to open-source it. The lawsuit demands a judicial determination that GPT-4o constitutes artificial general intelligence. If that sounds absurd, consider the stakes: a court ruling that GPT-4o is AGI would invalidate Microsoft's exclusive licensing agreement and could legally compel OpenAI to release its models to the public.

The most explosive detail? Musk's legal team cites unsealed internal documents suggesting OpenAI leadership and board members privately considered GPT-4o an AGI-level breakthrough. And they allege OpenAI quietly retired the model in February 2026 specifically to avoid triggering the contractual definition of AGI — which would end Microsoft's "pre-AGI" exclusive license.

The legal paradox: Musk is simultaneously arguing that AGI requires physical embodiment (Tesla/Optimus) and that a text-based chatbot (GPT-4o) already is AGI. The contradiction is the point — it highlights how commercially loaded the term has become.

Whether or not the lawsuit succeeds, it's forcing a question the industry has successfully dodged for years: who gets to define "general intelligence," and what happens to billion-dollar contracts when the definition actually matters?

Anthropic's Straight-Line Extrapolation Problem

Anthropic CEO Dario Amodei is making the simplest possible AGI prediction: draw a line through the last two years and extend it forward. AI models went from "undergraduate level" to "PhD level" in a single year. If that rate holds, human-level performance across "almost all cognitive tasks" arrives by late 2026 or early 2027.

The crucial qualifier — "if the current rate of capability increase holds" — is doing a lot of heavy lifting. Amodei acknowledges two potential brakes: data shortages (we're running out of high-quality training text) and geopolitical chip supply disruptions (the US-China semiconductor cold war is very real). But his framing implies these are engineering problems, not fundamental barriers.

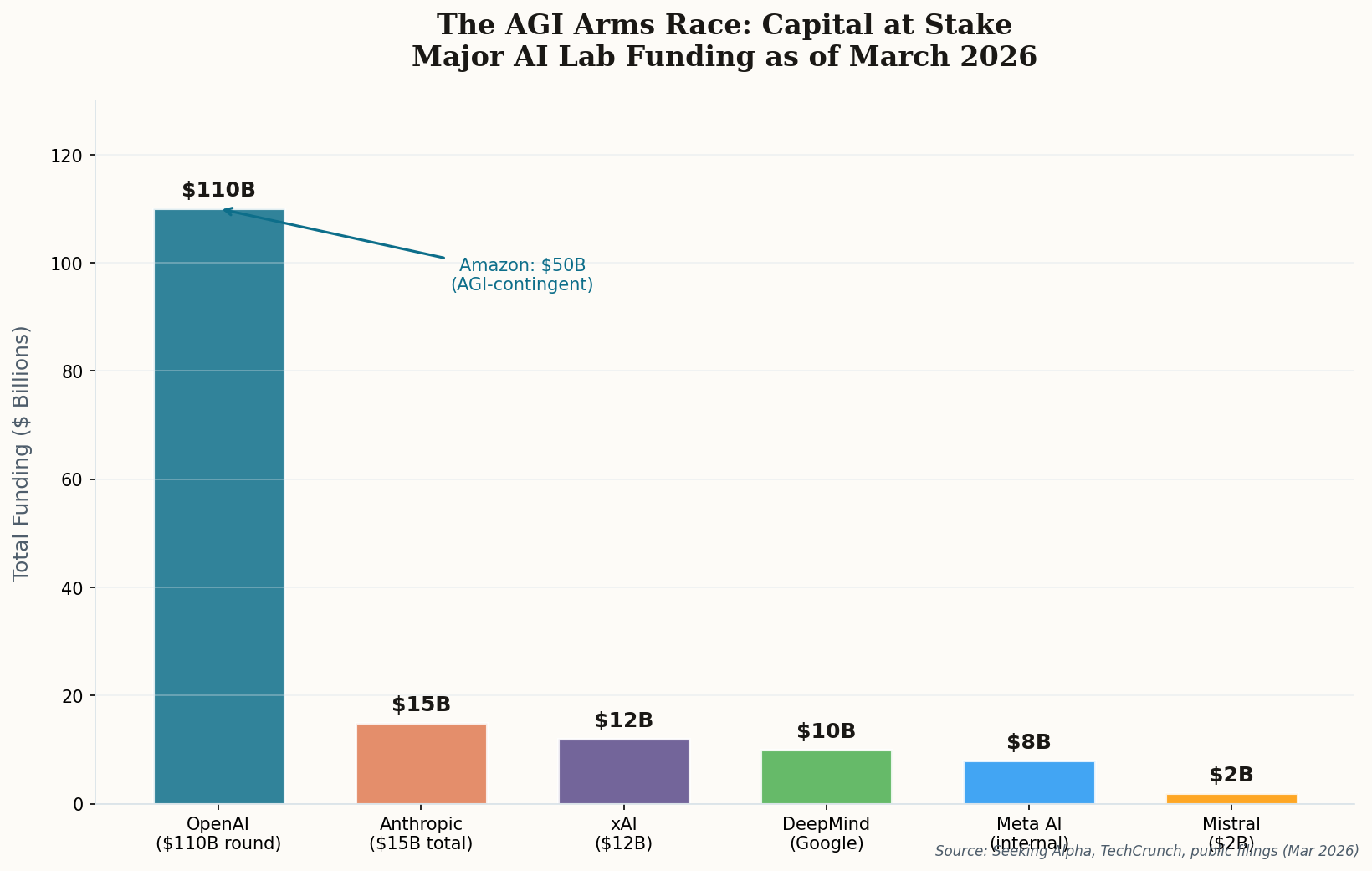

What Amodei isn't saying out loud but clearly believes: Anthropic is in the race, and they think they can get there. When the CEO of a company that's raised over $15 billion publicly predicts AGI within 18 months, that's not just a prediction — it's a fundraising narrative, a recruiting pitch, and a competitive positioning statement rolled into one. The question is whether the physics of scaling actually supports the straight line, or whether we're about to hit a plateau that turns that line into an S-curve.
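To make the line-versus-S-curve worry concrete, here is a minimal sketch. Every number in it is hypothetical (the capability ceiling, the growth rate, the midpoint year); none comes from Amodei or Anthropic. It fits a straight line through two years of a logistic curve, then extends both forward to show where they part ways.

```python
import math

# All three constants are hypothetical, chosen only for illustration.
CEILING = 100.0   # capability ceiling, arbitrary units
RATE = 1.5        # growth rate per year
MIDPOINT = 1.0    # year of fastest growth


def s_curve(t):
    """Logistic curve: fast growth near MIDPOINT, flattening toward CEILING."""
    return CEILING / (1.0 + math.exp(-RATE * (t - MIDPOINT)))


def straight_line(t, t0=0.0, t1=2.0):
    """Line through the S-curve's values at t0 and t1, extended to time t."""
    y0, y1 = s_curve(t0), s_curve(t1)
    slope = (y1 - y0) / (t1 - t0)
    return y0 + slope * (t - t0)


def gap(t):
    """How far the straight-line forecast sits above the S-curve at time t."""
    return straight_line(t) - s_curve(t)


if __name__ == "__main__":
    for year in range(0, 6):
        print(f"year {year}: line={straight_line(year):6.1f}  "
              f"s-curve={s_curve(year):6.1f}  gap={gap(year):6.1f}")
```

By construction the two forecasts agree at years 0 and 2, the window the line was drawn through. Past that window the line keeps climbing and passes the ceiling, while the S-curve flattens out beneath it. With only the observed window in hand, the two stories are indistinguishable, which is exactly why the extrapolation is hard to argue with today.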

$50 Billion Says AGI Is Real Enough to Bet On

Forget the philosophical debates. Amazon just made the most expensive bet in the history of artificial intelligence: $50 billion invested in OpenAI as part of a staggering $110 billion funding round. And here's the detail that should make every AI researcher sit up: $35 billion of that is contingent on OpenAI reaching a defined AGI technical milestone or launching an IPO.

This deal restructures the entire AI landscape. OpenAI committed to consuming 2 gigawatts of compute using Amazon's custom Trainium chips, and AWS becomes the exclusive third-party cloud provider for "OpenAI Frontier" — a new platform for persistent AI agents. Translation: Amazon is becoming the utility company for the AGI era, selling the picks and shovels regardless of who strikes gold.

The strategic implications run deep. OpenAI has historically been joined at the hip with Microsoft, and this deal loosens that dependency significantly. The AGI-contingent structure also creates a fascinating incentive: Amazon needs OpenAI to actually achieve AGI (or at least define it broadly enough to trigger the milestone) to protect its investment. When $35 billion hinges on a definition, the definition will get very creative.
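For a sense of scale, the arithmetic behind that incentive can be sketched in a few lines. The three dollar figures are the ones reported above; the variable names are just illustrative.

```python
# Figures as reported in this article, in billions of US dollars.
AMAZON_TOTAL_B = 50.0    # Amazon's total investment in OpenAI
CONTINGENT_B = 35.0      # portion tied to an AGI milestone or an IPO
ROUND_TOTAL_B = 110.0    # size of the full funding round

# Portion of Amazon's money that is not tied to any trigger.
unconditional_b = AMAZON_TOTAL_B - CONTINGENT_B

# Contingent tranche as a fraction of Amazon's stake and of the whole round.
contingent_share_of_amazon = CONTINGENT_B / AMAZON_TOTAL_B
contingent_share_of_round = CONTINGENT_B / ROUND_TOTAL_B

if __name__ == "__main__":
    print(f"Unconditional: ${unconditional_b:.0f}B")
    print(f"Contingent share of Amazon's stake: {contingent_share_of_amazon:.0%}")
    print(f"Contingent share of the round: {contingent_share_of_round:.1%}")
```

On these figures, 70% of Amazon's commitment, and nearly a third of the entire round, depends on how the milestone is worded (or on an IPO happening first).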

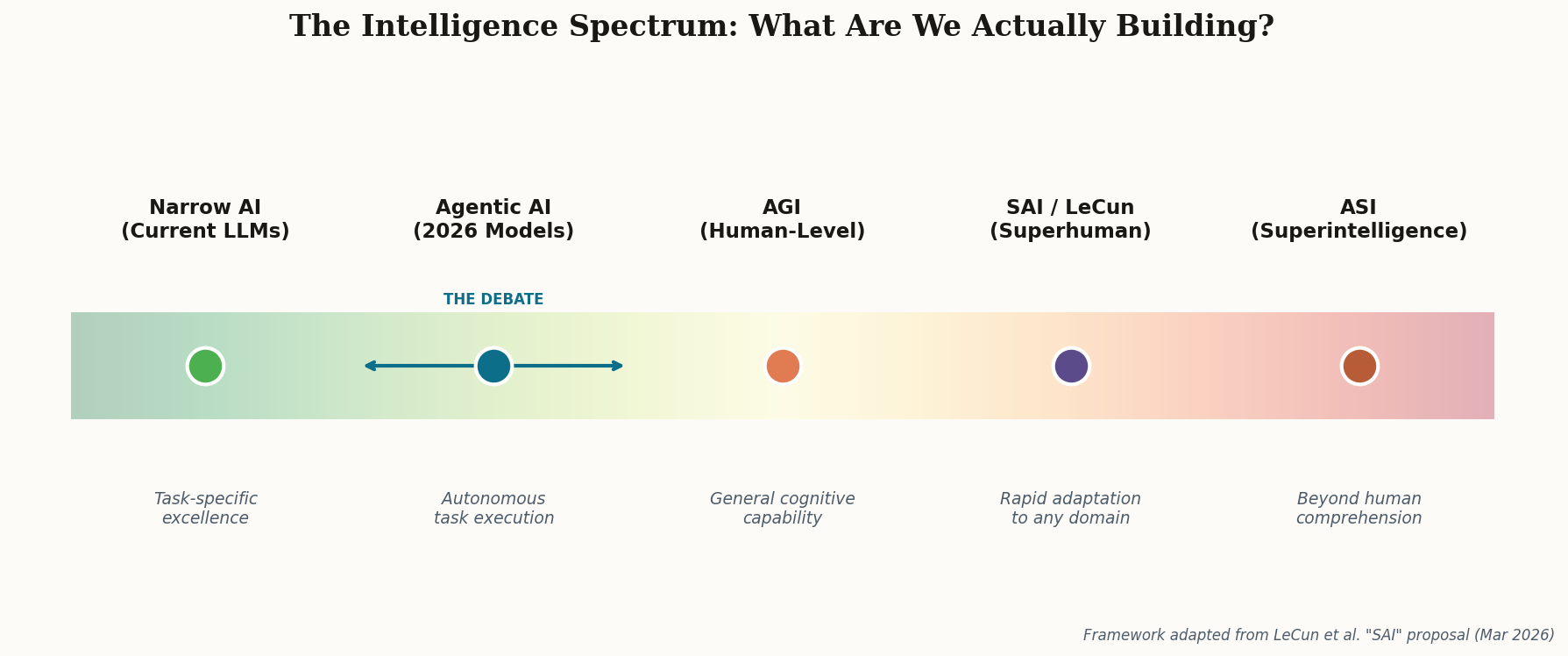

The Man Who Wants to Rename the Finish Line

Yann LeCun, Meta's Chief AI Scientist, has been the AI industry's most prominent AGI skeptic for years. Now he's gone a step further: proposing we scrap the term entirely and replace it with "Superhuman Adaptable Intelligence" (SAI). It's the most intellectual form of goalpost-moving imaginable — if you can't move the goalpost, just build a different field.

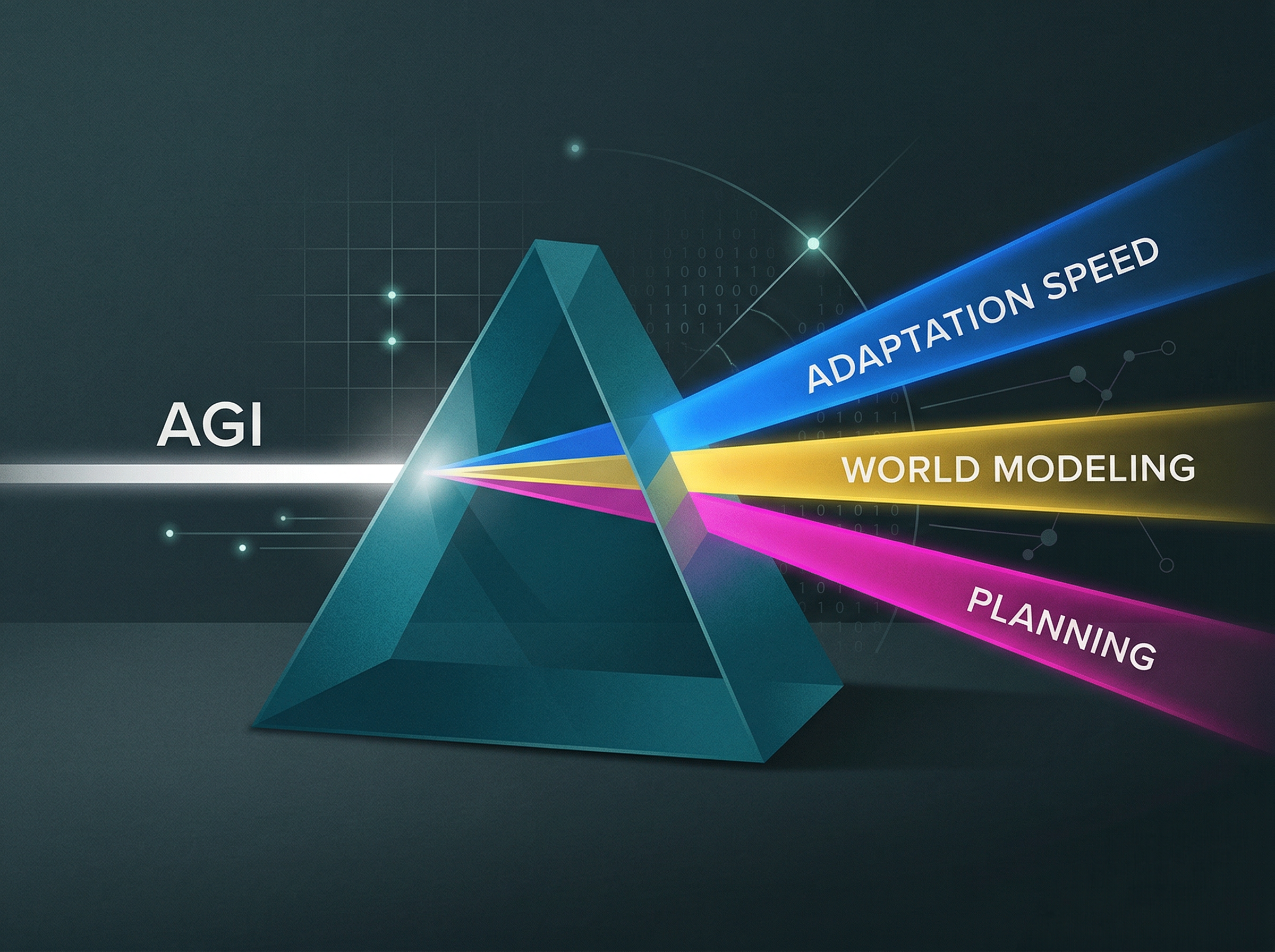

But LeCun's framework isn't just semantic gamesmanship. SAI focuses on adaptation speed — how quickly a system can learn a completely new task — rather than matching static human benchmarks. That's a genuine insight. Current models can ace the bar exam and write Python, but show them a task they've never seen before (say, playing a novel board game with rules explained once) and they fall apart. LeCun argues that this is the real test of intelligence, not data retrieval.

The timing is suspicious, though. As models approach human baselines on every standard test, the leading skeptic publishes a paper that formally moves the target. LeCun would say he's being rigorous. Critics would say he's protecting Meta's competitive position — if AGI doesn't exist yet, Meta's open-source approach loses nothing by giving models away. The truth, as usual, is probably both.

The Careful Optimist Accelerates

Demis Hassabis has historically been the most measured voice among major AI lab leaders. When everyone was predicting AGI by 2025, he was saying 2030 with a 50% probability. So when Hassabis tells the India AI Impact Summit that we're at a "threshold moment" and AGI is "likely within the next five years," pay attention — because this represents a significant acceleration from someone who has consistently been more conservative than his peers.

Hassabis maintains the highest bar for what counts as AGI among the major lab heads: a system exhibiting all human cognitive capabilities, including genuine creativity and long-term planning. He still thinks that requires one or two more fundamental breakthroughs, not just scaling current architectures. This is where he splits from Amodei's "straight-line extrapolation" view.

But the shift in tone is unmistakable. DeepMind is opening fully automated research labs. Their Gemini system is autonomously designing experiments to discover new superconductors. When the cautious scientist starts sounding like the optimists, either he's seen something internally that changed his mind, or the competitive pressure to project confidence has finally overcome his natural restraint. Either way, the window is narrowing.