Enterprise Money Just Entered the Chat

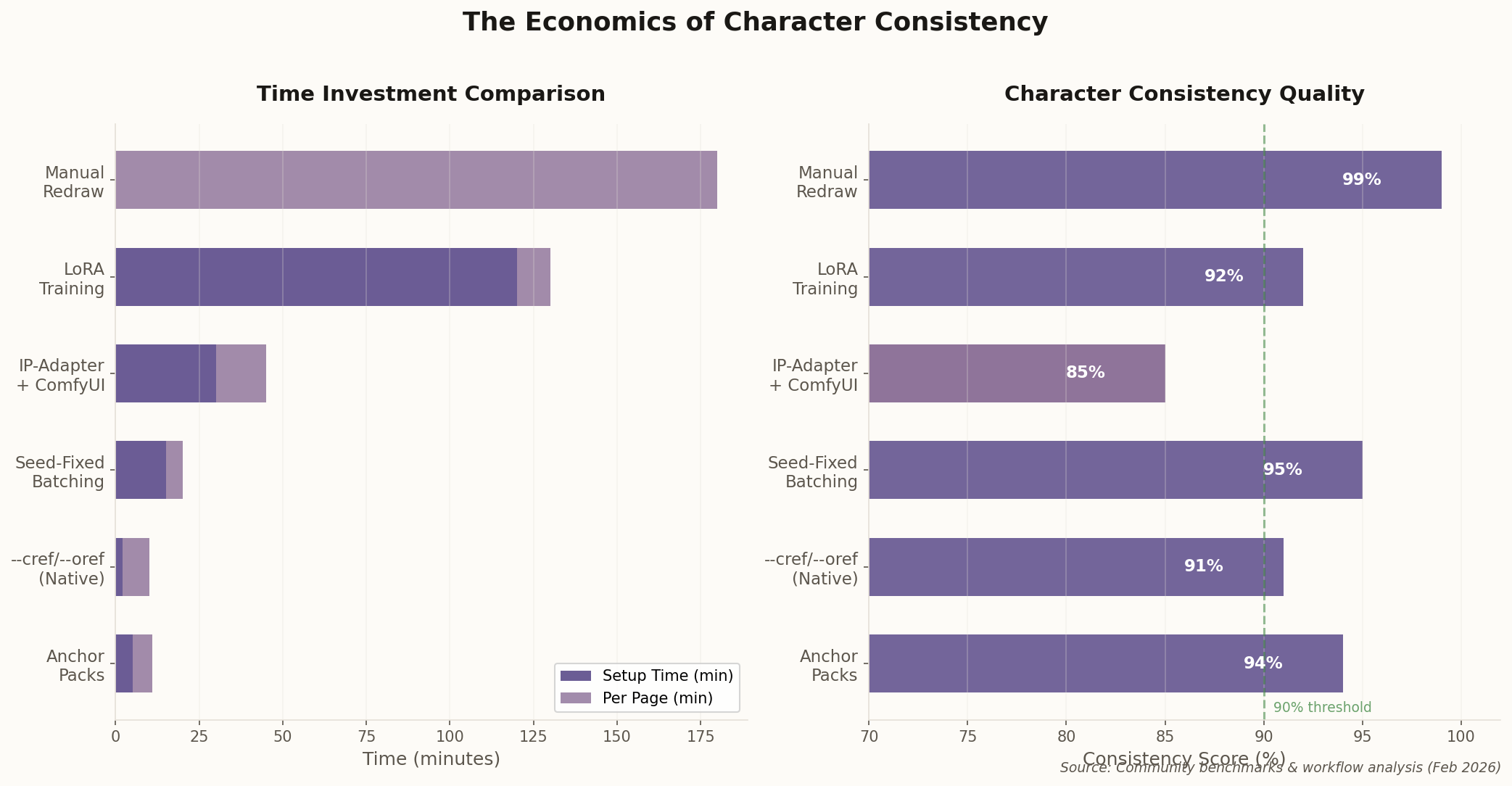

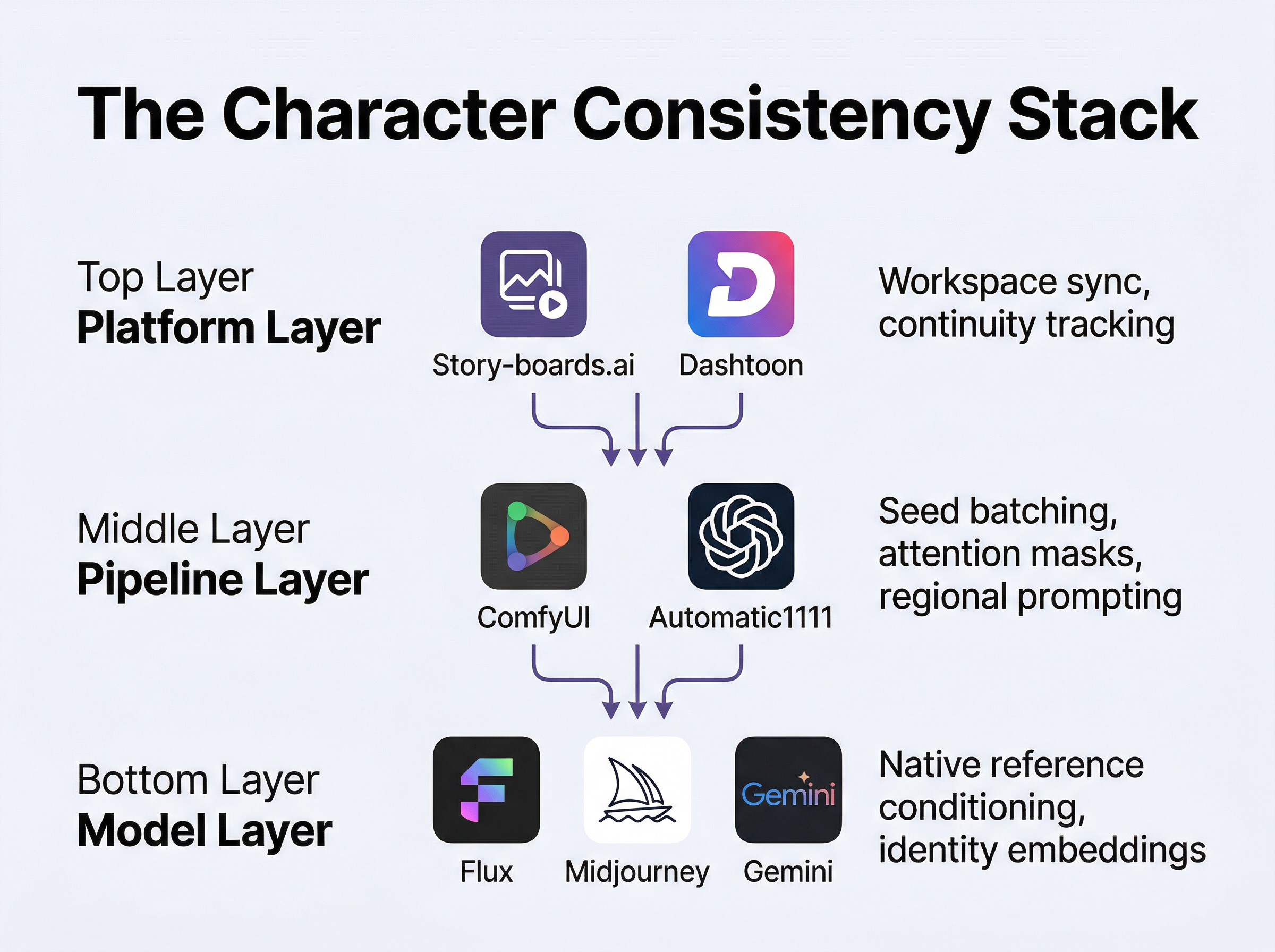

Here's how you know a technology has crossed from "toy" to "tool": somebody builds a SaaS platform around it and charges enterprise pricing. Story-boards.ai launched its Continuity Suite last week, and it's not aimed at weekend hobbyists. It's aimed at animation studios that burn 40% of their production hours fixing character inconsistencies between frames.

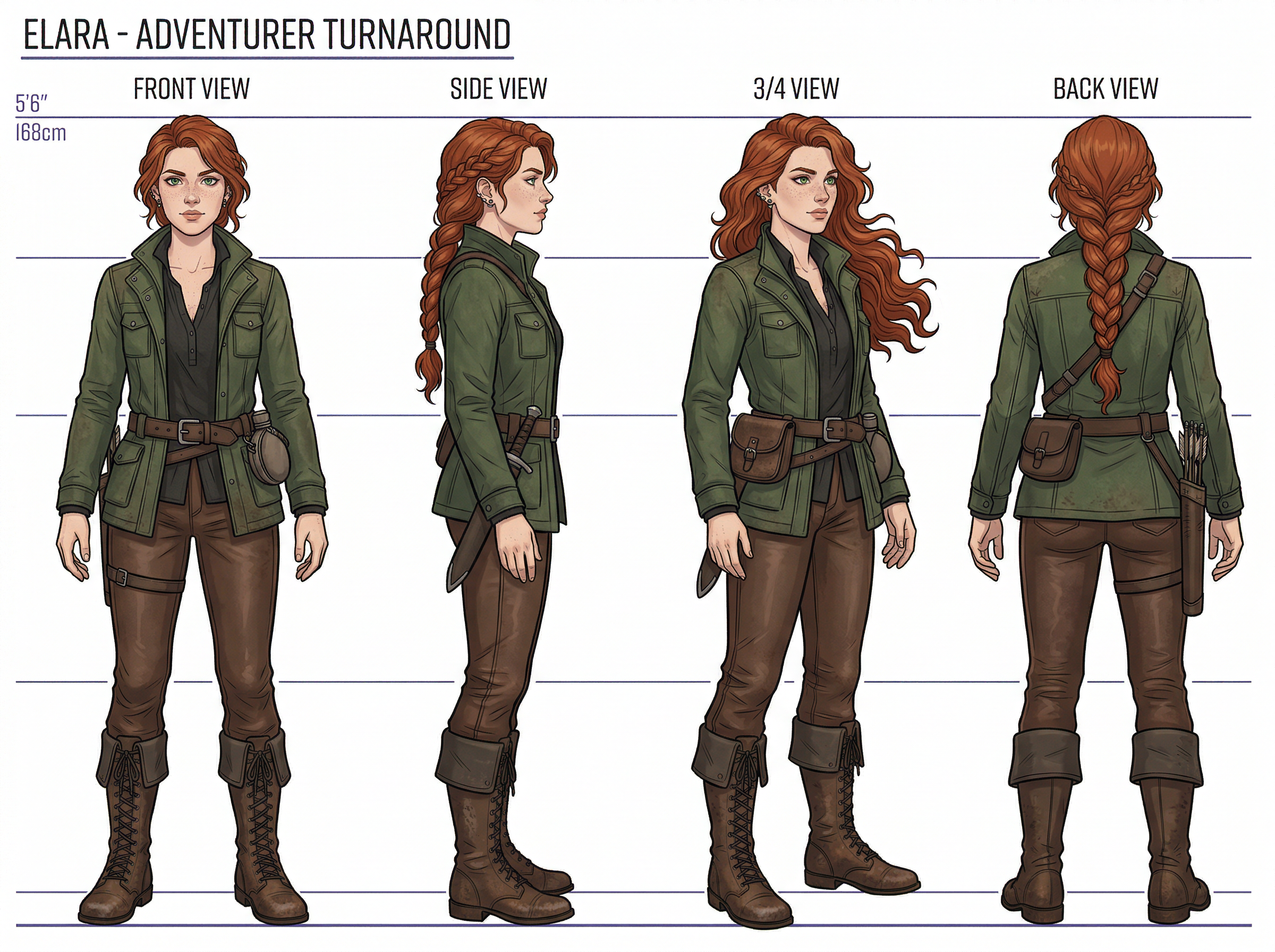

The pitch is straightforward. Upload a character design once. The platform syncs it across every workspace, every artist, every AI generation agent touching the project. The company says its internal testing shows a 70% reduction in redraw time for character-related errors. That's not a marginal improvement. If the number holds up outside the vendor's own tests, that's most of a role's workload gone from the pipeline.

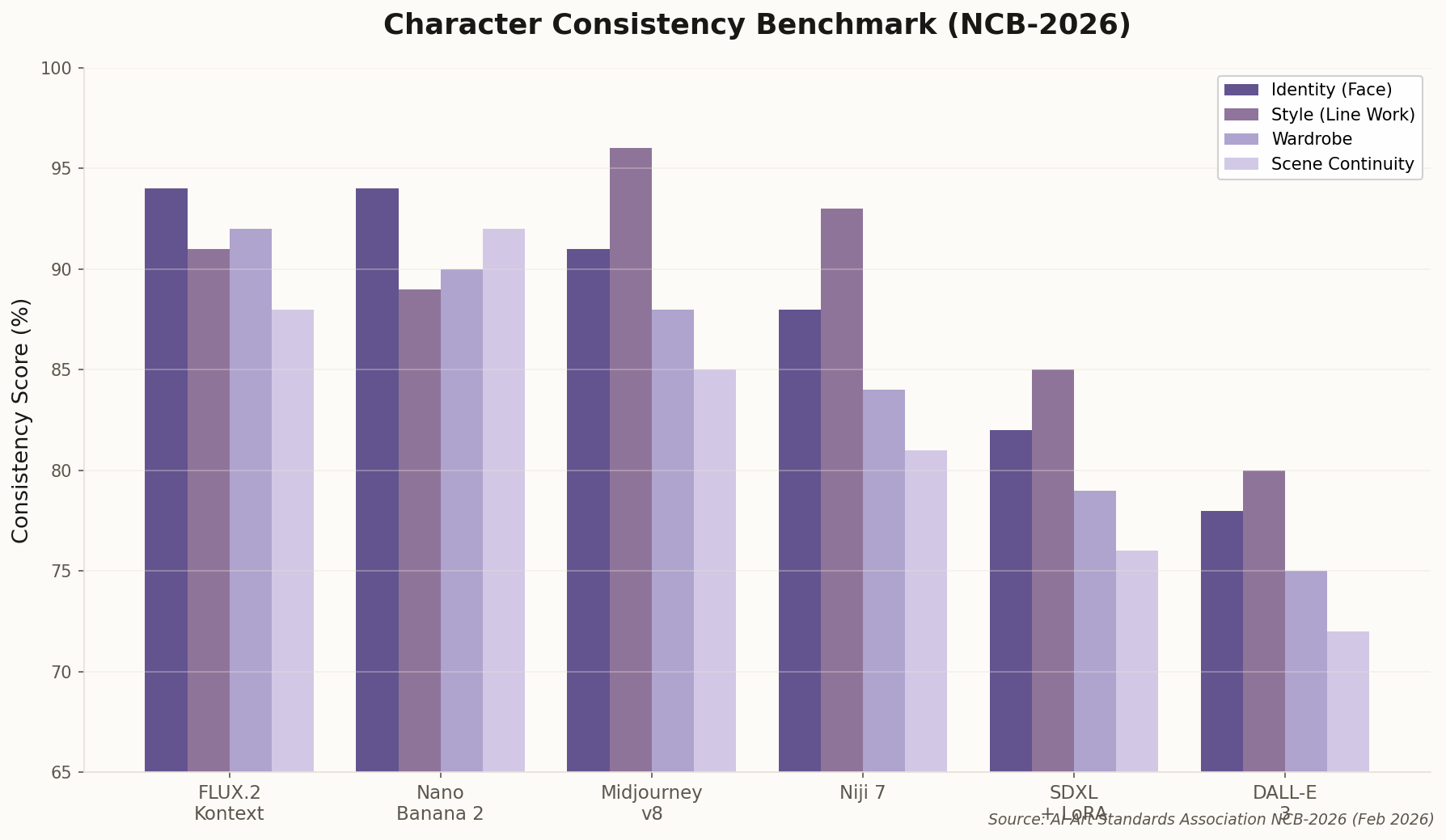

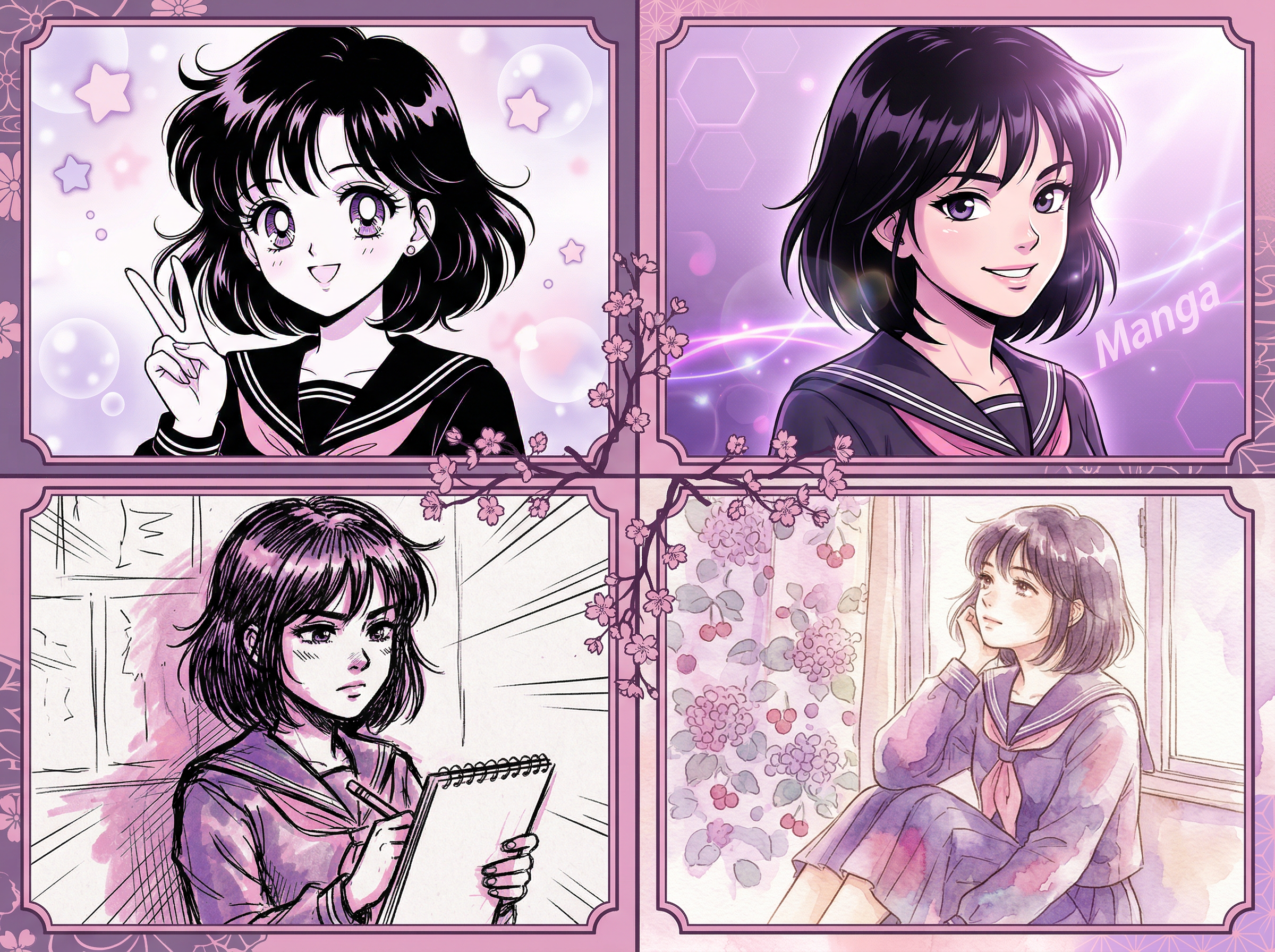

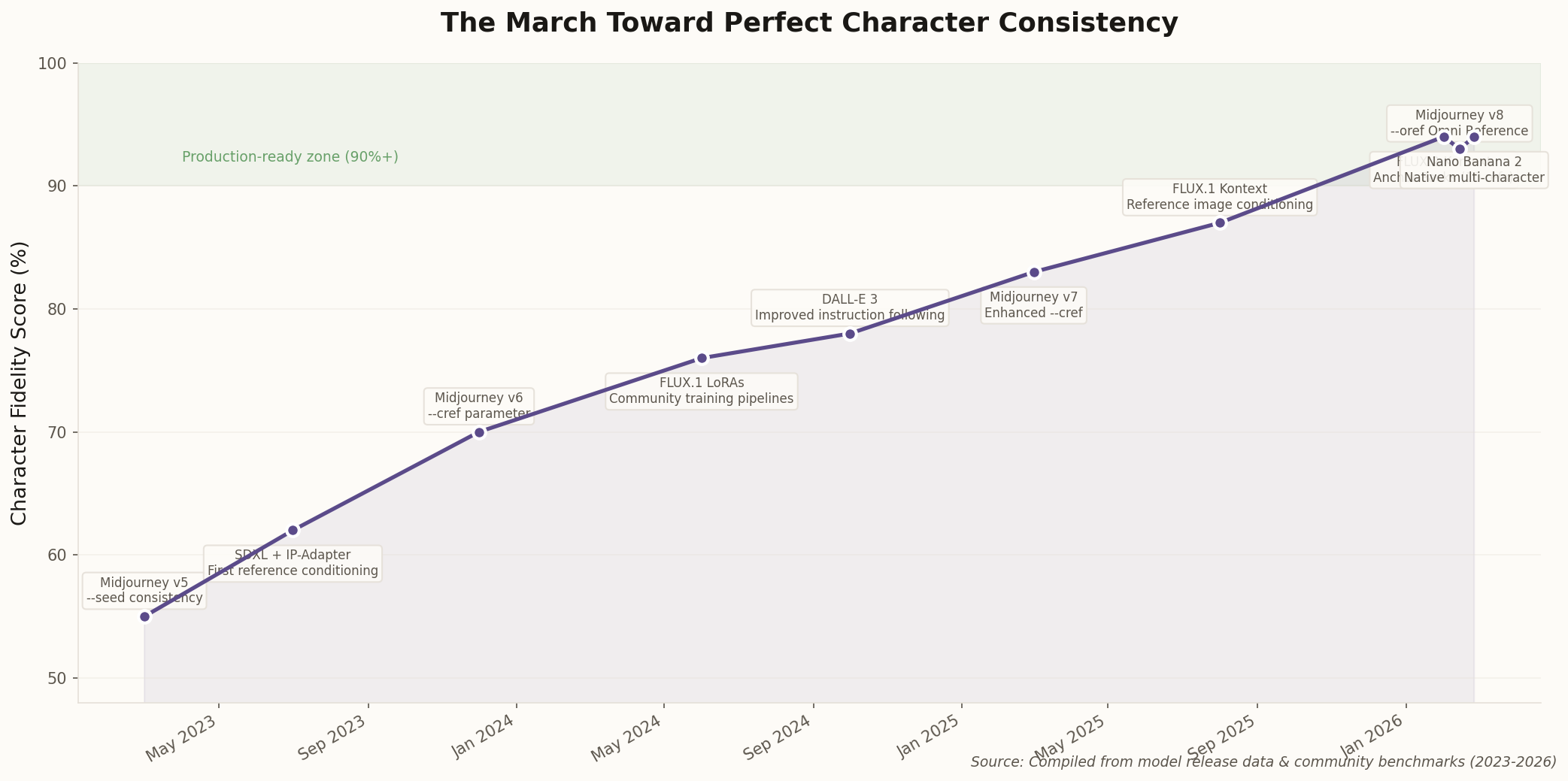

What makes this significant isn't the technology (which is essentially reference-image conditioning wrapped in a project management layer). It's the signal. When enterprise tools appear, it means the underlying capability is stable enough that businesses will bet their workflows on it. Two years ago, nobody would have shipped a continuity system for AI art because the models couldn't hold a face for three consecutive panels. Now it's a product category.

The real question isn't whether AI can maintain character consistency — it's whether traditional studios will adopt these tools fast enough to matter, or whether a new generation of solo creators will simply route around them entirely.