Your AI Wrote the Code. Who's Checking for Landmines?

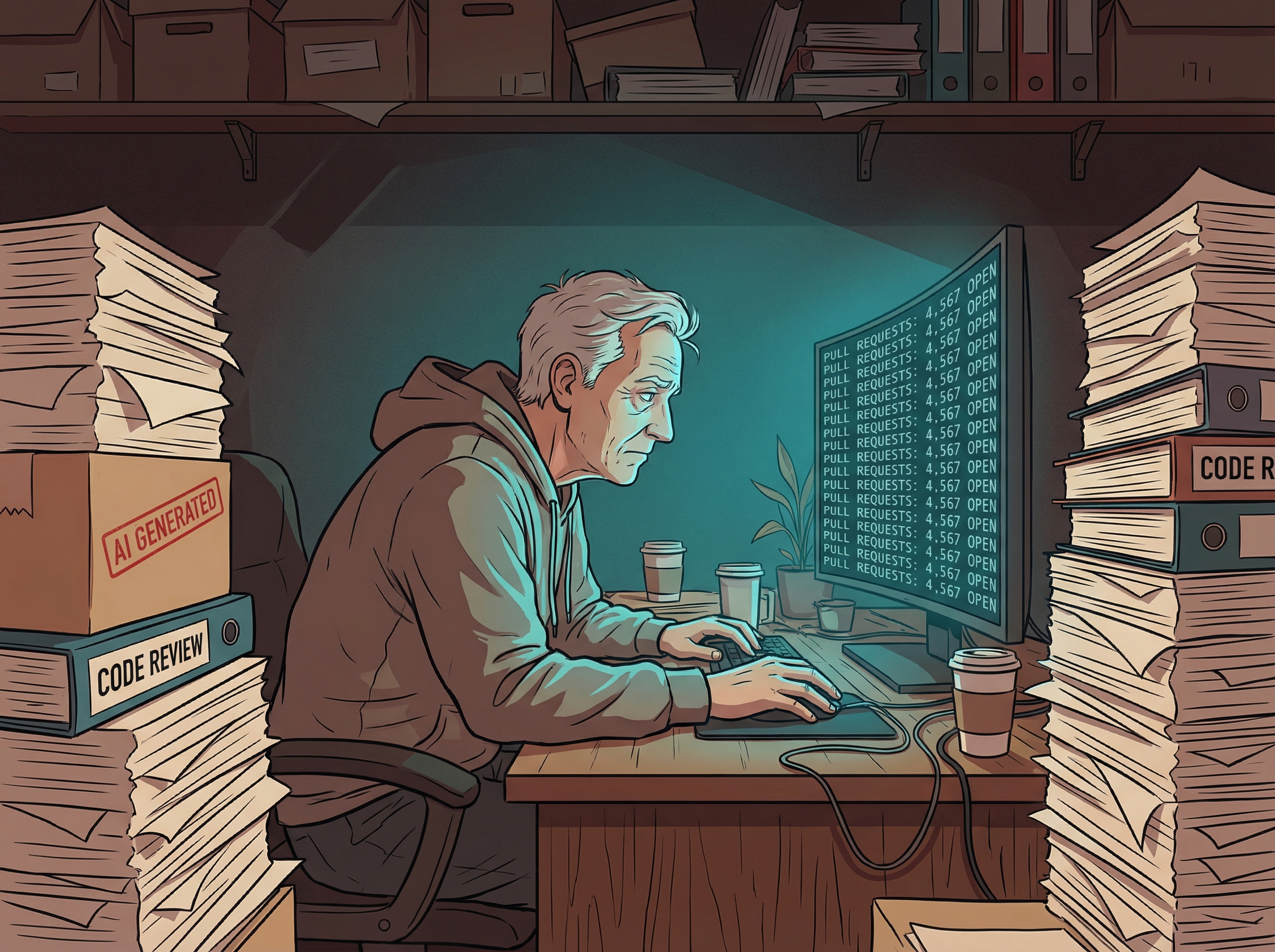

Anthropic just shipped something that tells you everything about where software engineering is headed: an AI security tool designed specifically to catch vulnerabilities introduced by AI code generation. Let that sink in. We've reached the stage where AI needs to check AI's homework.

Claude Code Security integrates directly into the coding workflow and "reasons through code like a security researcher," scanning for the specific class of bugs that LLMs love to produce—the ones that compile cleanly, pass tests, and quietly open your database to the world. It even suggests automated patches.
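To make "compiles cleanly, passes tests, opens your database" concrete, here is a minimal sketch of that bug class. It is an illustration, not output from any particular tool: the `users` table and the `find_user_unsafe` and `find_user_safe` helpers are hypothetical names invented for this example, and it uses Python's built-in `sqlite3` with an in-memory database.

```python
import sqlite3


def make_db():
    # Hypothetical in-memory table, used only for this illustration.
    conn = sqlite3.connect(":memory:")
    conn.execute("CREATE TABLE users (name TEXT, email TEXT)")
    conn.executemany(
        "INSERT INTO users VALUES (?, ?)",
        [("alice", "alice@example.com"), ("bob", "bob@example.com")],
    )
    return conn


def find_user_unsafe(conn, name):
    # Plausible-looking generated code: user input is interpolated
    # directly into the SQL string, so input can change the query.
    query = f"SELECT name, email FROM users WHERE name = '{name}'"
    return conn.execute(query).fetchall()


def find_user_safe(conn, name):
    # Parameterized query: the driver binds the input as data only.
    return conn.execute(
        "SELECT name, email FROM users WHERE name = ?", (name,)
    ).fetchall()


conn = make_db()

# The happy-path test passes for both versions, so CI stays green.
assert find_user_unsafe(conn, "alice") == [("alice", "alice@example.com")]
assert find_user_safe(conn, "alice") == [("alice", "alice@example.com")]

# A crafted input turns the unsafe WHERE clause into an always-true condition.
payload = "' OR '1'='1"
leaked = find_user_unsafe(conn, payload)   # every row in the table
blocked = find_user_safe(conn, payload)    # no rows: treated as a literal name
```

Both functions behave identically on ordinary input, which is why a test suite built around expected usage will not tell them apart. Only the adversarial input exposes the difference.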

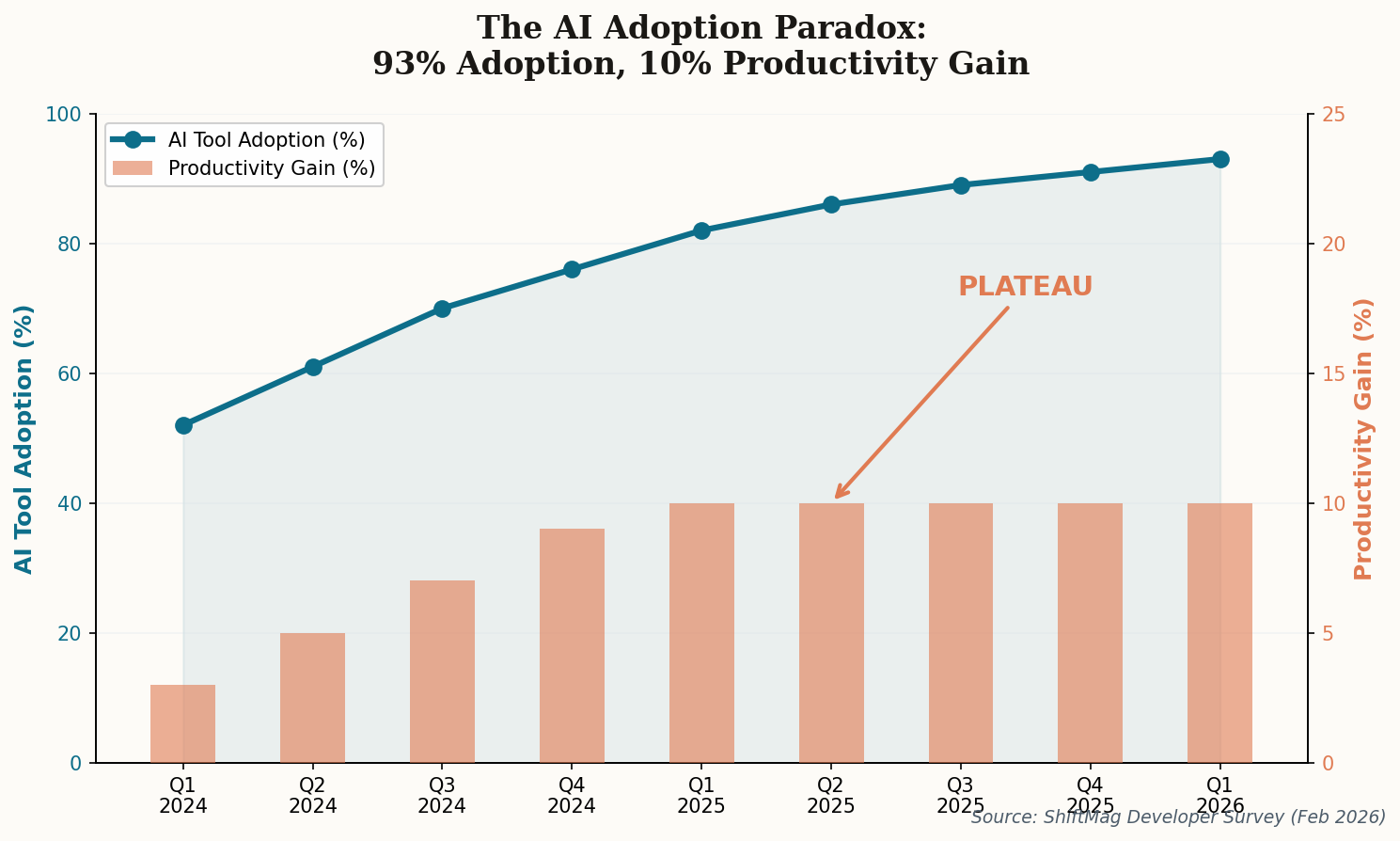

This isn't paranoia. CodeRabbit's analysis this week found that AI-authored code carries 2.74x more security issues than human-written code. When a quarter of your production codebase is machine-generated (and climbing), that multiplier starts to look existential.

The signal: Security review is no longer a phase at the end of the pipeline. It's becoming an always-on layer that runs continuously alongside code generation—because the generation never stops.