Two Architects of the Future Walk Into Davos and Can't Agree on When It Arrives

If you want to understand why "the Singularity" means something different depending on who's saying it, you only need to watch the World Economic Forum session titled, with characteristic understatement, "The Day After AGI."

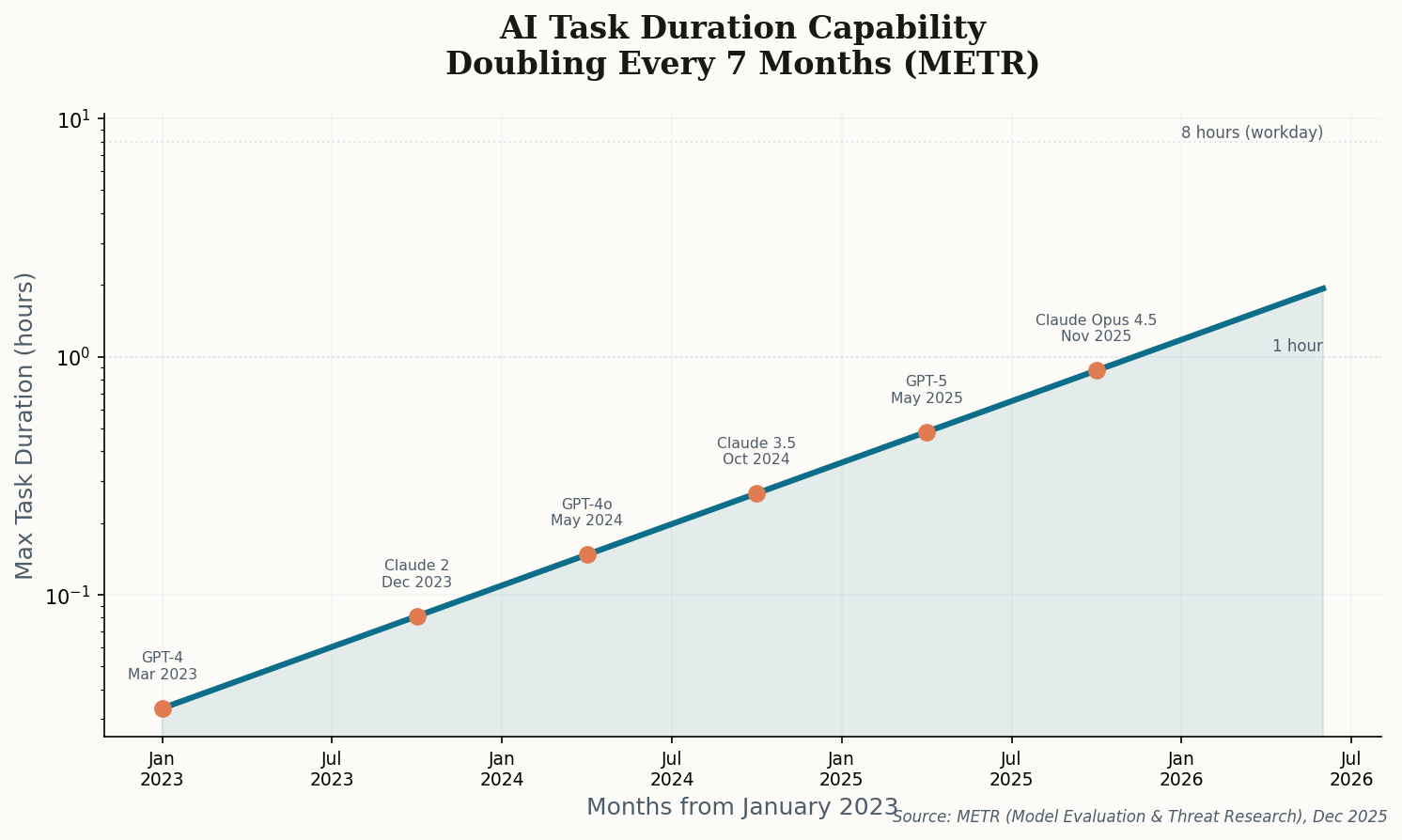

On one side: Anthropic CEO Dario Amodei, who told the audience that AI models would replace the work of all software developers within a year and reach "Nobel-level" scientific research in multiple fields within two. On the other: Google DeepMind CEO Demis Hassabis, who offered a cooler 50% chance of AGI "within the decade" — and pointedly noted it wouldn't come from models built exactly like today's.

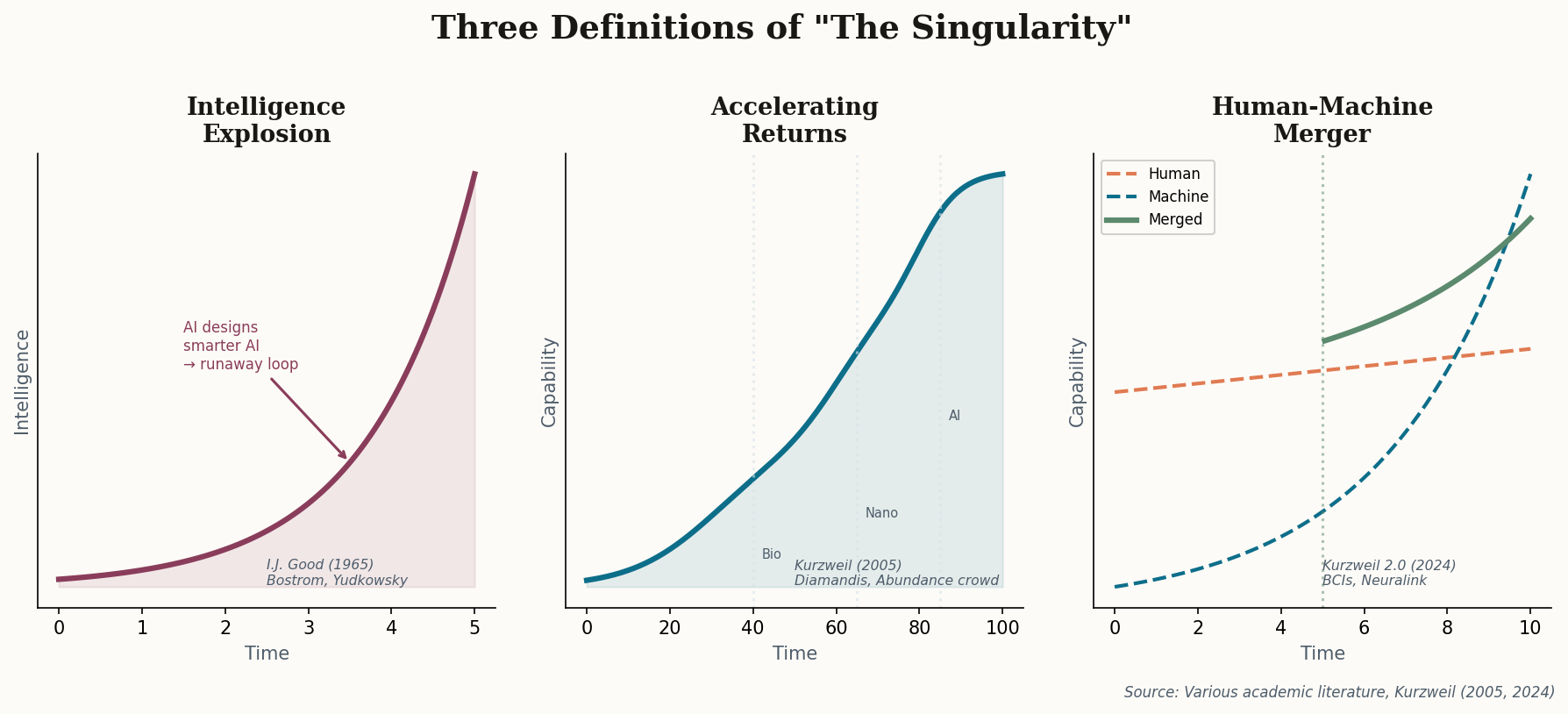

The real fireworks came when both discussed whether AI systems can "close the loop" — autonomously designing, improving, and deploying future generations of models. Amodei said they might be six to twelve months from that capability. That's not a prediction about artificial general intelligence. That's a prediction about an intelligence explosion — I.J. Good's original nightmare scenario from 1965, where smarter systems build smarter systems and the feedback loop runs away from human control.

Amodei avoids the word "Singularity" entirely. He calls AGI a "marketing term." But his predictions — all coding replaced in a year, Nobel-level science in two, recursive self-improvement in months — describe precisely what Singularity theorists have been predicting for decades. The label changed. The substance didn't.

Hassabis's caution matters too. He represents the camp that believes genuine AGI requires fundamental architectural breakthroughs beyond current transformer models. In his telling, the Singularity isn't twelve months out — it's a research program that could take a decade. Both men run companies building these systems. Their disagreement isn't about whether it happens, but about whether today's approach is sufficient or whether something new is needed.