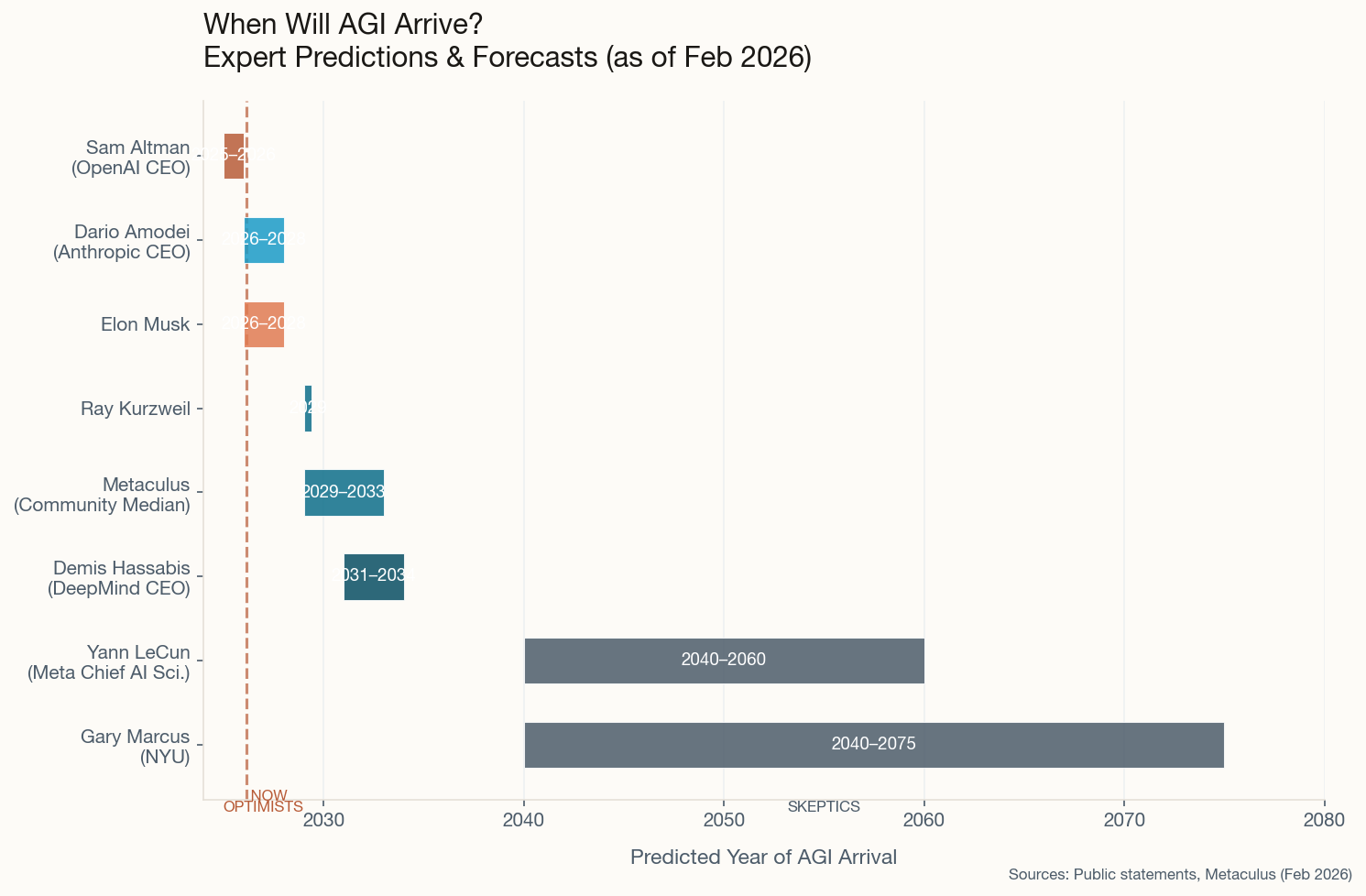

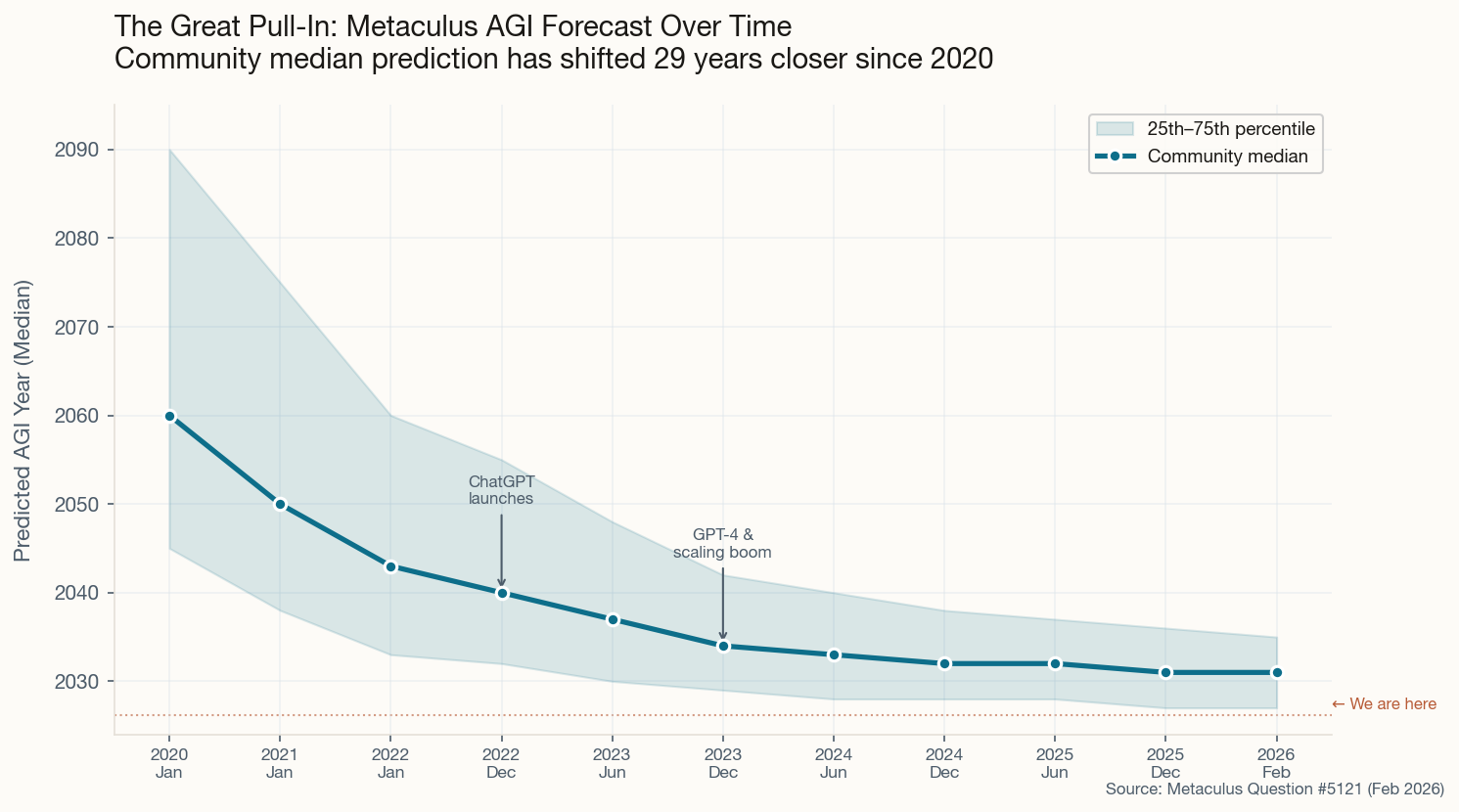

The Crowd Says 2031. The Crowd Has Been Wrong Before.

Here's the thing about crowdsourced intelligence: it's excellent at integrating public information and terrible at pricing genuine surprises. Metaculus—the prediction platform beloved by rationalists and AI researchers alike—updated its flagship AGI question this week, and the community median sits at 2031. That's a gentle pull-in from the 2032–33 range that held through most of last year, almost certainly a reaction to the barrage of capable model releases hitting in February.

The more interesting signal is in the tails. The 25th percentile has crept down to 2027, meaning roughly a quarter of the community's probability mass says we're less than eighteen months away. The "weakly general AI" question (a lower bar that requires broad competence without human-level performance across the board) has its median at that same 2027 mark. Meanwhile, the right tail still stretches comfortably past 2035 for the skeptics who think the current paradigm needs a fundamental rethink.

What should actually make you sit up: since 2020, the median has moved 29 years closer, which puts the 2020 figure somewhere around 2060. That's not a gradual refinement; it's the forecast equivalent of the ground accelerating beneath your feet. Whether that means we're actually approaching AGI or just collectively hallucinating progress is, of course, the trillion-dollar question.