The Paranoid Enterprise Is Your Best Customer

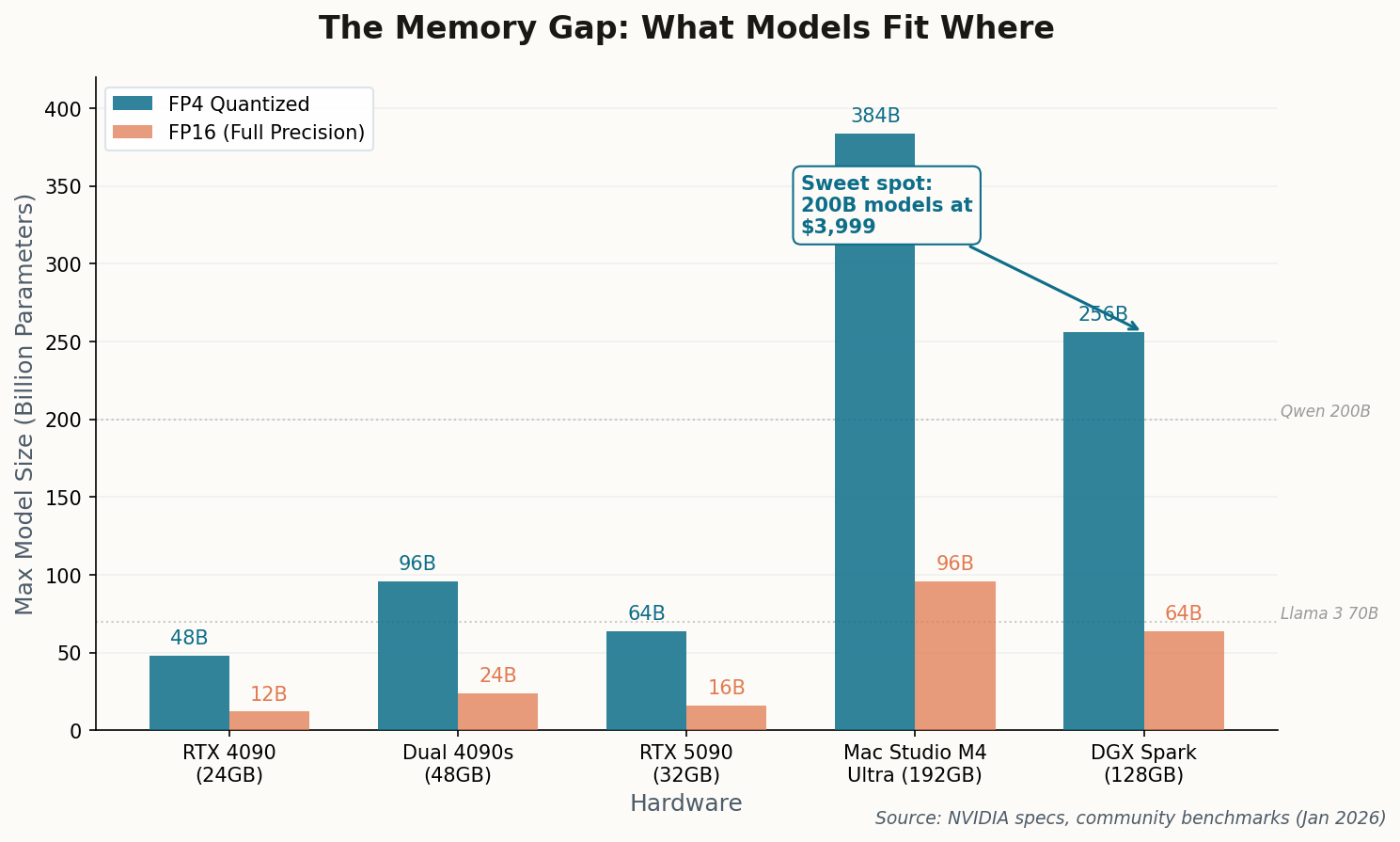

Here's a business model that practically sells itself: walk into a law firm or medical clinic and ask one question. "Where does your data go when you use ChatGPT?" Watch the color drain from their faces. Then hand them a box the size of a Mac Mini that runs Llama 3 70B entirely on-premises, with 128GB of unified memory that can swallow a full case file without a single byte leaving the building.

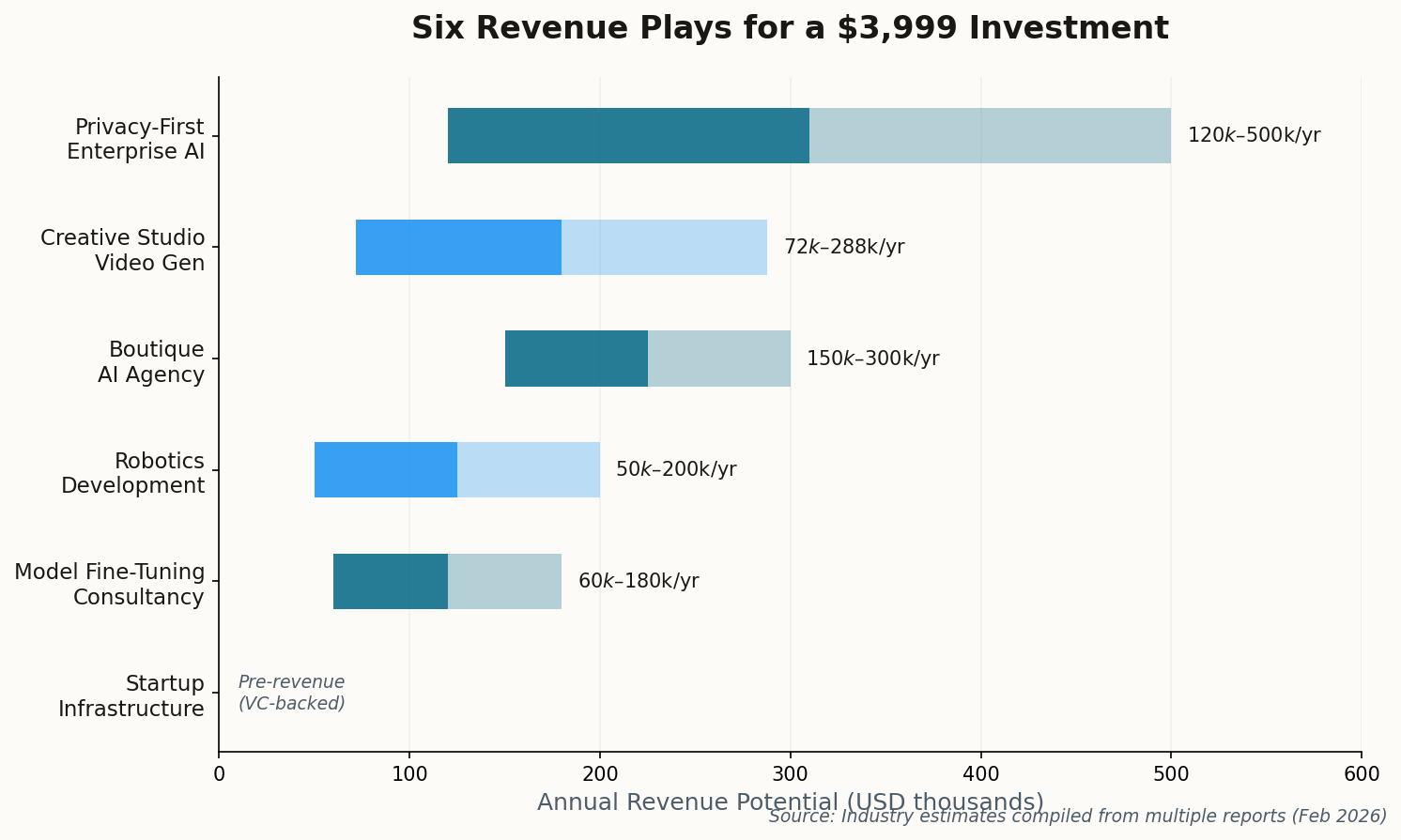

DreamFactory is already doing exactly this, bundling DGX Spark units with their API-as-a-Service platform to convert legacy enterprise databases into secure RAG pipelines. Their target? Healthcare and finance, sectors where the risk of a HIPAA violation or an SEC audit makes cloud AI a non-starter. Enterprise contracts range from $25,000 to $100,000 per year per deployment.

The real revenue isn't in selling the hardware. It's in the consultancy layer on top: configuring retrieval-augmented generation for specific document types, fine-tuning models on proprietary legal precedent or medical literature, and charging $200–$500 per hour for what amounts to "AI paralegal" work. The 128GB memory advantage matters here because a 70B-parameter model needs roughly 35GB for its weights alone even at 4-bit quantization, before you leave any room for long legal briefs or patient records in the context window. Consumer GPUs with 24GB choke on that.
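To make the memory argument concrete, here is a rough back-of-the-envelope sketch. It estimates the weight footprint of a 70B-parameter model at different precisions and checks it against 128GB and 24GB. The `overhead_fraction` of 20% for KV cache and activations is an assumption chosen for illustration, not a measured figure, and the function names are hypothetical.

```python
def weight_footprint_gb(params_billion: float, bits_per_weight: int) -> float:
    """Approximate memory for the model weights alone, in GB (1 GB = 1e9 bytes)."""
    # params_billion * 1e9 parameters, each taking bits/8 bytes, divided by 1e9 bytes per GB
    return params_billion * bits_per_weight / 8


def fits(params_billion: float, bits_per_weight: int, memory_gb: float,
         overhead_fraction: float = 0.2) -> bool:
    """True if the weights plus an assumed runtime overhead fit in memory_gb."""
    # overhead_fraction is an illustrative assumption for KV cache and activations
    needed = weight_footprint_gb(params_billion, bits_per_weight) * (1 + overhead_fraction)
    return needed <= memory_gb


if __name__ == "__main__":
    for bits in (16, 8, 4):
        gb = weight_footprint_gb(70, bits)
        print(f"{bits}-bit: {gb:.0f} GB weights, "
              f"fits 128 GB: {fits(70, bits, 128)}, fits 24 GB: {fits(70, bits, 24)}")
```

By this estimate a 70B model at full 16-bit precision (about 140GB) would not fit in 128GB either, so in practice the box would likely run a quantized build. Unified memory is also shared with the operating system, so the usable figure is somewhat below the headline number.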

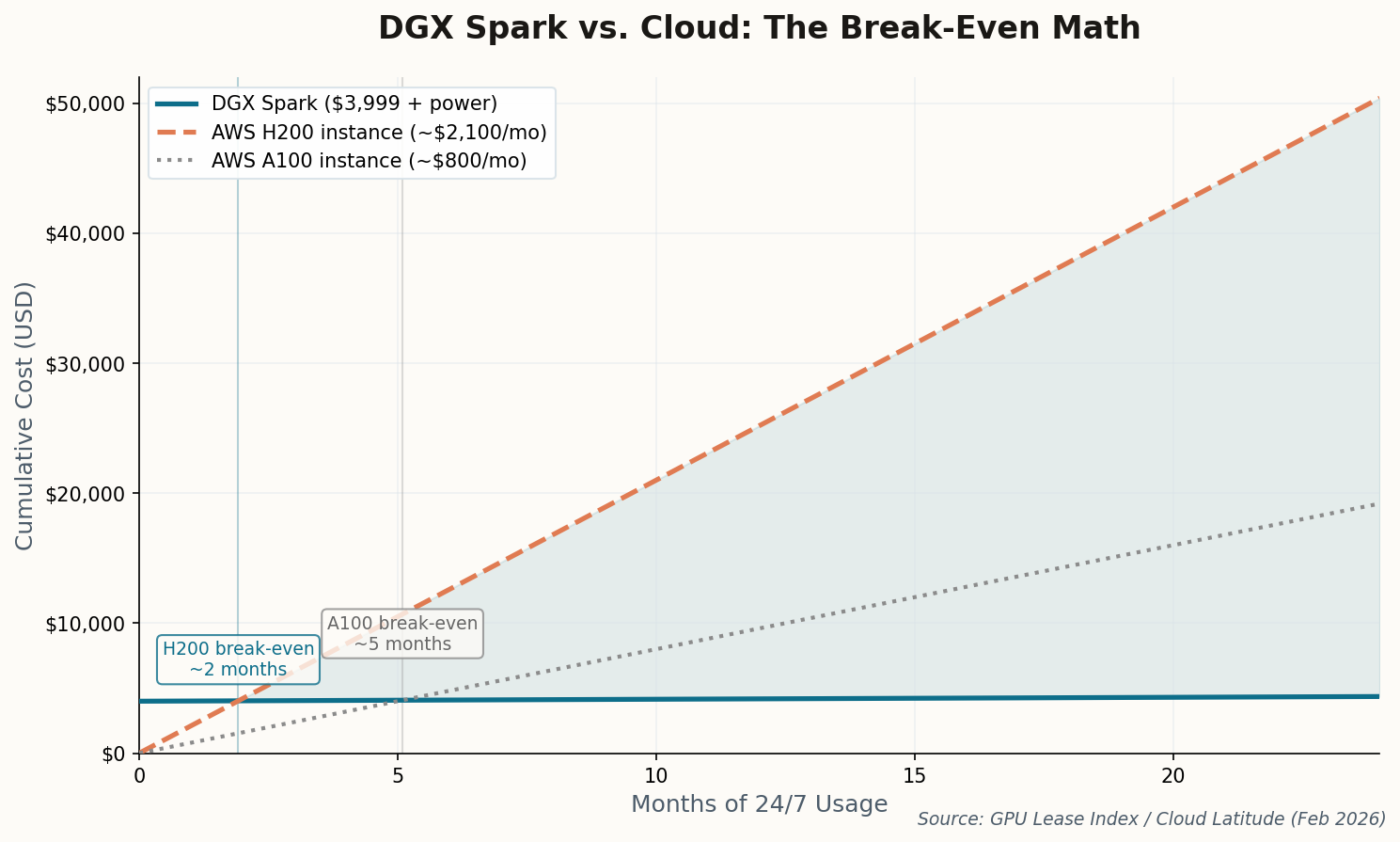

The play: Build a consultancy that deploys DGX Spark units as "private AI infrastructure" for regulated industries. Charge setup fees ($10k+), monthly maintenance, and per-project fine-tuning. Your hardware cost basis is one $3,999 box per client.
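The arithmetic behind the play can be sketched in a few lines. The $3,999 hardware cost, the $10k setup fee, and the $200 hourly rate come from the figures above. The monthly maintenance fee and the number of billable project hours are hypothetical placeholders, since no figures are given for them, and the calculation ignores your own labor and overhead.

```python
from dataclasses import dataclass


@dataclass
class ClientDeal:
    setup_fee: int            # one-time fee, in dollars
    monthly_maintenance: int  # hypothetical placeholder, in dollars per month
    project_hours: int        # hypothetical billable fine-tuning hours in year one
    hourly_rate: int          # dollars per hour
    hardware_cost: int = 3_999  # one box per client

    def first_year_revenue(self) -> int:
        """Setup fee plus twelve months of maintenance plus billed project hours."""
        return (self.setup_fee
                + 12 * self.monthly_maintenance
                + self.project_hours * self.hourly_rate)

    def first_year_margin(self) -> int:
        """Revenue minus hardware only; labor and overhead are not modeled."""
        return self.first_year_revenue() - self.hardware_cost


if __name__ == "__main__":
    deal = ClientDeal(setup_fee=10_000, monthly_maintenance=1_000,
                      project_hours=40, hourly_rate=200)
    print(f"First-year revenue: ${deal.first_year_revenue():,}")
    print(f"Margin after hardware: ${deal.first_year_margin():,}")
```

With these placeholder inputs, first-year revenue comes to $30,000 and the margin after hardware to $26,001, which lands at the low end of the contract range quoted earlier. Swap in your own maintenance fee and hours to see how sensitive the result is.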