The Beauty Vortex: AI's Narrowing Gaze

Here's a question that should unsettle anyone who's ever scrolled through a stock photo library: what happens when the machines that generate our images have a narrower definition of beauty than even the most airbrushed magazine cover?

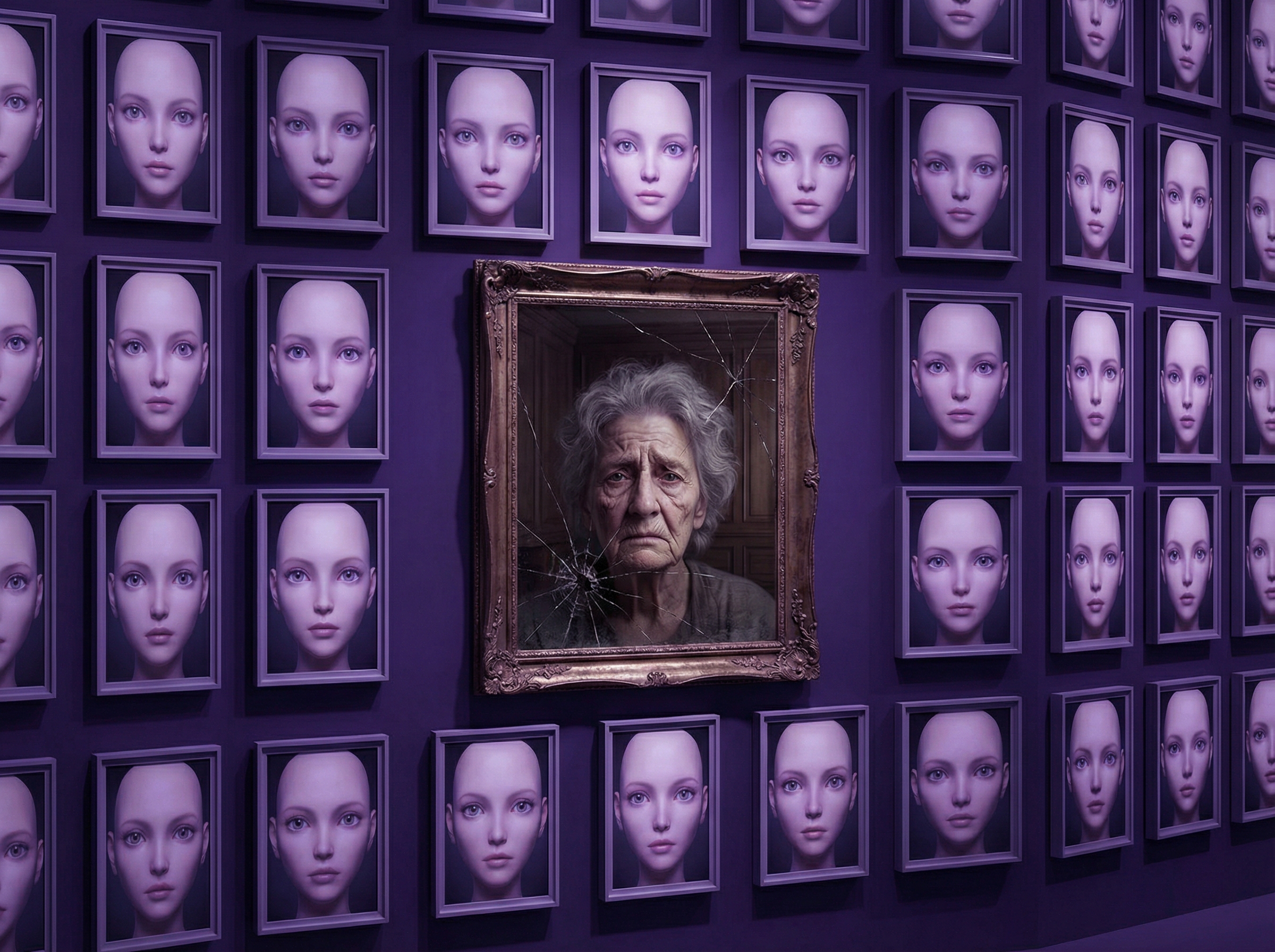

Researchers at the University of Toronto just answered it. They prompted Midjourney, DALL-E, and Stable Diffusion to generate thousands of portraits. The results were staggering: no images of women over 40 or of bald men appeared unless the prompt explicitly asked for those features. Over 90% of outputs featured symmetrical faces, porcelain skin, and thin bodies, regardless of what the prompt actually asked for.

Dr. Delaney Thibodeau, who led the study, called it a "beauty vortex": a feedback loop that produces a standard of beauty significantly narrower and more exclusionary than even the glossiest 2000s-era retouching. The implications compound fast. As AI-generated images replace traditional stock photography in advertising, editorial, and social media, the visual vocabulary of the public sphere is being homogenized by default. Not by malice. By training data.

The cruelest irony: photography's historical power lay in showing us the world as it is, messy, asymmetrical, aged, and real. Now its algorithmic successor shows us only the world as a training dataset imagines it should be. The vortex isn't just aesthetic. It's epistemic.