$1.78 Million Evaporated Because an AI Forgot How Oracles Work

Here’s a scenario that will keep every DeFi founder awake tonight. Moonwell, a lending protocol on Base, activated DAO proposal MIP-X43 last week. The code in pull request 578, co-authored by Anthropic’s Claude Opus 4.6, contained an oracle configuration error so fundamental it reads like a textbook example of what not to do: instead of multiplying the cbETH/ETH exchange rate by the ETH/USD price, the oracle reported only the token ratio, as if that ratio were already a dollar price. Coinbase Wrapped ETH (cbETH) was briefly priced at $1.12 instead of ~$2,200.
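
To make the arithmetic concrete, here is a minimal Python sketch of the two calculations. It is not Moonwell’s contract code: the function names are hypothetical, and the ETH/USD figure is an illustrative value back-derived from the ~$2,200 and $1.12 numbers above. Real on-chain feeds also use fixed-point integers rather than `Decimal`, which is used here only for readability.

```python
from decimal import Decimal


def cbeth_usd_price(cbeth_eth_rate: Decimal, eth_usd_price: Decimal) -> Decimal:
    """Intended composition: (ETH per cbETH) * (USD per ETH) = USD per cbETH."""
    return cbeth_eth_rate * eth_usd_price


def cbeth_usd_price_buggy(cbeth_eth_rate: Decimal, eth_usd_price: Decimal) -> Decimal:
    """The failure mode described above: the ratio is passed through as a USD price."""
    return cbeth_eth_rate  # the ETH/USD leg is never applied


# Illustrative inputs (assumptions, not actual feed readings).
CBETH_ETH_RATE = Decimal("1.12")  # ETH per cbETH
ETH_USD_PRICE = Decimal("1964")   # USD per ETH, chosen so the product is ~2,200

correct = cbeth_usd_price(CBETH_ETH_RATE, ETH_USD_PRICE)
buggy = cbeth_usd_price_buggy(CBETH_ETH_RATE, ETH_USD_PRICE)

print(f"correct cbETH/USD: {correct}")  # 2199.68
print(f"buggy cbETH/USD:   {buggy}")    # 1.12
```

With these inputs the buggy path undervalues cbETH by a factor equal to the ETH/USD price, roughly three orders of magnitude.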

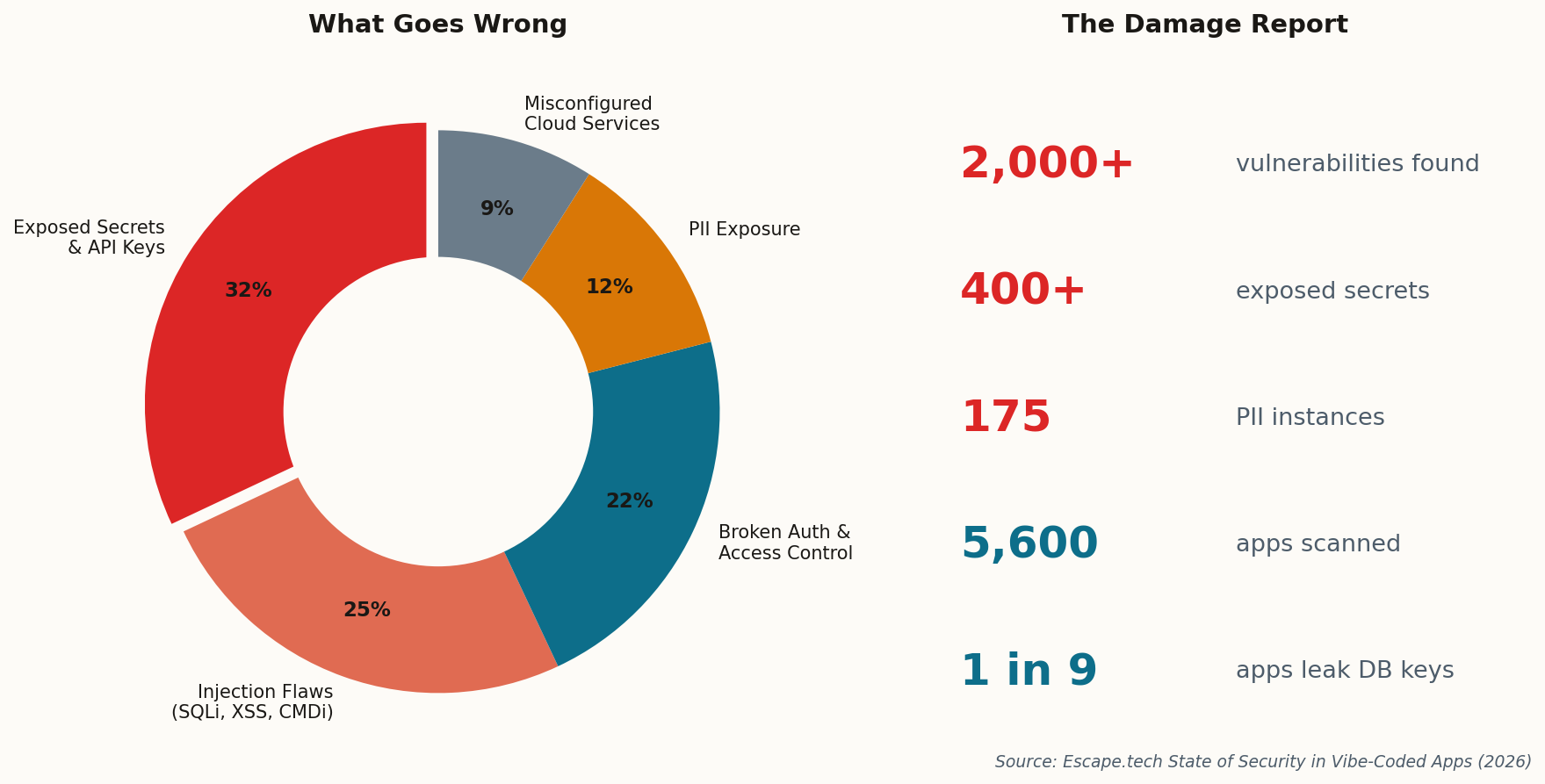

The result: $1.78 million in bad debt, with funds drained faster than anyone could react. Smart contract auditor Pashov linked it directly to “vibe coding,” Andrej Karpathy’s term for the practice of describing what you want and letting the AI handle the implementation. “This is what happens when you fully give in to the vibes and forget the code exists,” Pashov wrote.

The uncomfortable truth: a human submitted that PR. A human merged it. The AI wrote flawed oracle logic, but the review process failed to catch a math error that any junior smart contract developer would have flagged. This isn’t a story about AI being dangerous—it’s a story about humans trusting AI output with the same confidence they’d give a senior engineer’s code. That gap between perceived and actual reliability is where $1.78 million went.