The Company That Wants to Fire You (Politely)

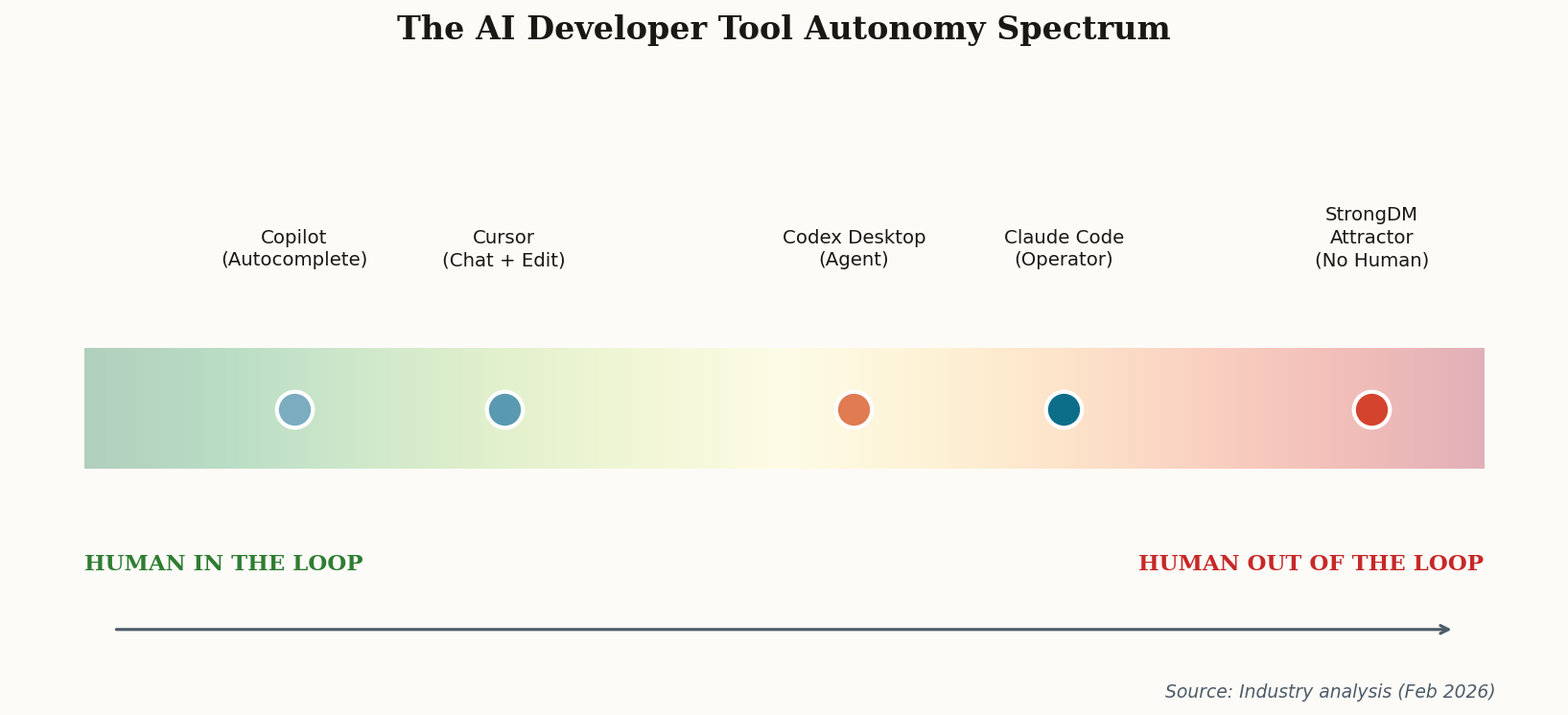

StrongDM unveiled something this week that made a lot of engineers choke on their cold brew: a "Software Factory" platform with a coding agent called Attractor designed explicitly for "non-interactive" code generation. Translation: no human review required.

Let that sit for a moment. We've spent the last three years collectively agreeing that AI coding tools are "copilots": helpful assistants that augment human judgment. StrongDM just skipped that entire metaphor and went straight to "factory." The platform includes CXDB, an "AI Context Store" that creates immutable audit logs of every tool output and agent decision. That is StrongDM's answer to the obvious question: if no human reviews the code, who's accountable when it breaks?
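For a sense of what "immutable audit log" can mean in practice, here is a minimal sketch of one common approach: an append-only log where each entry carries a hash of the one before it, so any later edit breaks the chain. To be clear, this is not CXDB's actual design, which isn't described here. The `AuditLog` and `Entry` names and the `"tool_output"` / `"agent_decision"` labels are all made up for illustration.

```python
import hashlib
import json
from dataclasses import dataclass


@dataclass(frozen=True)
class Entry:
    """One record in the log. Frozen so it cannot be mutated in place."""
    index: int
    kind: str       # hypothetical labels, e.g. "tool_output" or "agent_decision"
    payload: str
    prev_hash: str  # hash of the previous entry (or GENESIS for the first)
    hash: str       # hash over this entry's own fields plus prev_hash


def _digest(index, kind, payload, prev_hash):
    # Serialize the fields deterministically, then hash them with SHA-256.
    body = json.dumps([index, kind, payload, prev_hash]).encode("utf-8")
    return hashlib.sha256(body).hexdigest()


class AuditLog:
    """Hypothetical append-only log; an illustration, not a real product API."""

    GENESIS = "0" * 64

    def __init__(self):
        self._entries = []

    def append(self, kind, payload):
        # Each new entry commits to the hash of the entry before it.
        prev_hash = self._entries[-1].hash if self._entries else self.GENESIS
        index = len(self._entries)
        entry = Entry(index, kind, payload, prev_hash,
                      _digest(index, kind, payload, prev_hash))
        self._entries.append(entry)
        return entry

    def entries(self):
        # Hand out a read-only snapshot rather than the internal list.
        return tuple(self._entries)

    def verify(self):
        # Walk the chain and recompute every hash; any edit shows up here.
        prev_hash = self.GENESIS
        for i, e in enumerate(self._entries):
            if e.index != i or e.prev_hash != prev_hash:
                return False
            if e.hash != _digest(e.index, e.kind, e.payload, e.prev_hash):
                return False
            prev_hash = e.hash
        return True
```

The design choice worth noticing: the log doesn't prevent tampering, it makes tampering detectable. That covers "what did the agent do?" but not "who answers for it?", which is the harder half of the accountability question.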

As Simon Willison noted in his characteristically measured analysis, the "human-out-of-the-loop" philosophy isn't just a product positioning choice—it's a bet on where enterprise software maintenance is heading. StrongDM isn't targeting greenfield development or creative system design. They're targeting the 80% of engineering work that's migrations, dependency updates, and boilerplate. The boring stuff. The stuff that, if we're honest, most engineers don't love doing anyway.

The uncomfortable question: what happens to the junior engineers who learn by doing that boring stuff?